Intel Optane And 3D XPoint Updates From IDF

by Billy Tallis on August 16, 2016 2:00 PM EST

At Intel Developer Forum this week in San Francisco, Intel is sharing a few more details about its plans for Optane SSDs using 3D XPoint memory.

The next milestone in 3D XPoint's journey to being a real product will be a cloud-based testbed for Optane SSDs. Intel will be giving enterprise customers free remote access to systems equipped with Optane SSDs so that they can benchmark how their software runs with 3D XPoint-based storage and optimize it to take better advantage of the faster storage. By offering cloud-based access before even sampling Optane SSDs, Intel can keep 3D XPoint out of the hands of its competitors longer and perhaps make better use of limited supply while still enabling the software ecosystem to begin preparing for the revolution Intel is planning. However, this won't do much for customers who want to integrate and validate Optane SSDs with their existing hardware platforms and deployments.

The cloud-based Optane testbed will be available by the end of the year, suggesting that we might not be seeing any Optane SSDs in the wild this year. But at the same time, the testbed would only be worth providing if its performance characteristics are going to be pretty close to those of the final Optane SSD products. Having announced the Optane testbed like this, Intel will probably be encouraging its partners to share their performance findings with the public, so we should at least get some semi-independent testing results in a few months' time.

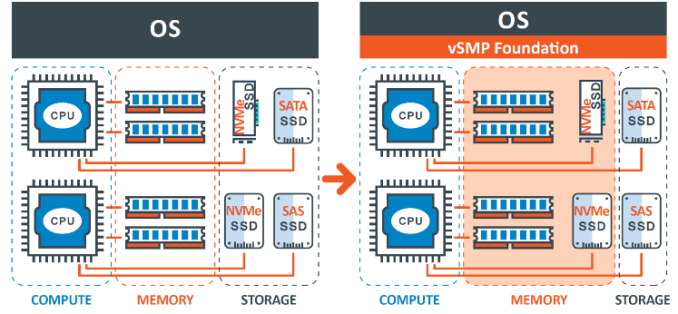

In the meantime, Intel and ScaleMP will be demonstrating a use that Optane SSDs will be particularly well-suited for. ScaleMP's vSMP Foundation software family provides virtualization solutions for high performance computing applications. One of their specialities is providing VMs with far more virtual memory than the host system has DRAM, by transparently using NVMe SSDs (or even the DRAM and NVMe storage of other systems connected via InfiniBand) to cache what doesn't fit in local DRAM. The latency advantages of 3D XPoint will make Optane SSDs far better swap devices than any flash-based SSDs, and the benefits should still be apparent even when some of that 3D XPoint memory is at the far end of an InfiniBand link.

ScaleMP and Intel have previously demonstrated that flash-based NVMe SSDs can be used as a cost-effective alternative to building a server with extreme amounts of DRAM, and with a performance penalty that can be acceptably small. With Optane SSDs that performance penalty should be significantly smaller, widening the range of applications that can make use of this strategy.

Intel will also be demonstrating Optane SSDs used to provide read caching for cloud application or database servers running on Open Compute hardware platforms.

36 Comments

Cogman - Wednesday, August 17, 2016 - link

@beginner99, You would still want to have some main memory dedicated to currently executing applications. But I could certainly see it being useful for things like phones since they are usually running few apps concurrently (so moving non-active apps into swap makes sense).

mkozakewich - Wednesday, August 17, 2016 - link

It would act as vastly expanded memory in devices that would otherwise only have 2 GB of RAM, and it might even mean we could see devices with 1 GB of active RAM that depend on a few GB of XPoint, but we will definitely have real RAM.

Cogman - Wednesday, August 17, 2016 - link

It still wouldn't be great for main memory. Applications write very frequently. Every time they call a new method or allocate space for a variable, they are modifying memory (and very frequently those values in the variables change).

You would still want some memory for the currently running applications, but maybe you could save some space and not have so much (so have 512 MB of main memory and 4 GB of swap, for example).

lilmoe - Tuesday, August 16, 2016 - link

As I understand it, this is non-consumer tech. Not yet at least. Consumers are well served with current gen NVMe SSDs, probably more so than they need.

This ain't replacing DRAM in the Enterprise either.

CaedenV - Wednesday, August 17, 2016 - link

Exactly right. My understanding is that this is for read-heavy enterprise storage. Things like large databases or maybe even Netflix where you have TBs of relatively static storage that several users need to access at a moment's notice. Things that need to stay in active memory, but there is too much of it to practically store in DRAM.

Morawka - Tuesday, August 16, 2016 - link

Less endurance? Anything will have less endurance than RAM because RAM's endurance is unlimited because it is volatile. When RAM loses power, that's it, the data is gone. When XPoint loses power, the data is still there, even for decades. Its speed is 4x to 8x slower, sure, but endurance is not really going to be an issue for at least 10-15 years if Intel's numbers hold up.

ddriver - Tuesday, August 16, 2016 - link

Slow non-volatile memory is not a competitor, much less a substitute for fast volatile memory. Data loss is not a big danger, since enterprise machines have backup power and can flush caches to permanent storage on impending power failure, thus avoiding data loss completely.

Intel is stepping up with the fictional hype after it became evident the previous round of hype was all words with no weight to them.

lilmoe - Tuesday, August 16, 2016 - link

This ain't about "speed", it's about latency, in which DRAM is far more than 8x more responsive.

beginner99 - Wednesday, August 17, 2016 - link

I disagree. RAM needs orders of magnitude more endurance than an SSD and it's also usually a part you keep longer than Mobo or CPU. SSDs are now at like 3000 write cycles. Anything for RAM would need millions if not billions of write cycles.

Cogman - Wednesday, August 17, 2016 - link

Easily billions.

I had an old game, Black & White, which measured the number of functions called as part of its stats gathering. It was often in the tens of millions. Each of those function calls is a write to memory and likely a write to the same sector of memory.

Every function call, every variable allocation, these will result in a memory update. In a GCed application, every minor GC will result in 10s to 100s of megabytes of data being written (whoopee for moving garbage collectors).

Software writes and reads data like a beast. It is part of the reason why CPUs have 3 levels of cache on them.