JEDEC: DDR5 to Double Bandwidth Over DDR4, NVDIMM-P Specification Due Next Year

by Anton Shilov on April 3, 2017 9:00 AM EST

JEDEC made two important announcements about the future of DRAM and non-volatile DIMMs for servers last week. Development of both standards is proceeding as planned, and JEDEC intends to preview them in the middle of this year and publish the final specifications sometime in 2018.

Traditionally, each successive DRAM standard aims for consistent jumps: doubling the bandwidth per pin, reducing power consumption by lowering the Vdd/Vddq voltage, and increasing the maximum capacity of memory ICs (integrated circuits). DDR5 will follow this trend. Last week JEDEC confirmed that it would double the bandwidth and density over DDR4 and improve performance and power efficiency.

Given that the official DDR4 standard covers chips with up to 16 Gb capacity and data rates of 2133-3200 MT/s per pin, doubling that means 32 Gb ICs with data rates of 4266-6400 MT/s per pin. If DDR5 retains a 64-bit interface for memory modules, we will see single-sided 32 GB DDR5-6400 DIMMs with 51.2 GB/s of bandwidth in the DDR5 era. Speaking of modules, it is interesting to note that, among other things, DDR5 promises “a more user-friendly interface”, which probably means a new retention mechanism or increased design configurability.
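The bandwidth and capacity arithmetic above can be reproduced in a few lines. This is a minimal sketch, assuming a 64-bit data bus with no ECC bits counted and a single-sided module built from one rank of eight x8 chips; the function names are hypothetical and do not come from any JEDEC document.

```python
# Peak theoretical bandwidth of a memory module, assuming a 64-bit data bus
# (ECC bits not counted) and a per-pin data rate given in MT/s.
def peak_bandwidth_gbs(data_rate_mts: float, bus_width_bits: int = 64) -> float:
    """Return peak bandwidth in GB/s (decimal gigabytes)."""
    bytes_per_transfer = bus_width_bits / 8
    return data_rate_mts * bytes_per_transfer / 1000  # MB/s -> GB/s


def module_capacity_gb(chip_density_gbit: int, chips_per_rank: int, ranks: int = 1) -> float:
    """Return module capacity in GB from IC density (Gbit) and chip count."""
    return chip_density_gbit * chips_per_rank * ranks / 8  # bits -> bytes


if __name__ == "__main__":
    # DDR4-3200 versus a hypothetical DDR5-6400 module on the same 64-bit bus
    for name, rate in [("DDR4-3200", 3200), ("DDR5-6400", 6400)]:
        print(f"{name}: {peak_bandwidth_gbs(rate):.1f} GB/s")
    # Single-sided module: one rank of eight x8 chips (assumption)
    print(f"8 x 16 Gb: {module_capacity_gb(16, 8):.0f} GB")
    print(f"8 x 32 Gb: {module_capacity_gb(32, 8):.0f} GB")
```

Doubling the data rate on an unchanged bus width doubles peak bandwidth (25.6 GB/s to 51.2 GB/s), and doubling IC density on an unchanged chip count doubles module capacity (16 GB to 32 GB).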

Samsung's DDR4 memory modules. Image for illustrative purposes only.

Part of the DDR5 specification will be improved channel use and efficiency. Virtually all modern memory subsystems use one, two, or more channels, but the actual memory bandwidth of such systems does not scale linearly with the number of channels (i.e., channel utilization decreases as channels are added). Part of the problem is that host cores compete for DRAM bandwidth, and memory scheduling is a challenge for CPU and SoC developers. Right now we do not know how the DRAM developers at JEDEC plan to address channel efficiency at the specification level, but if they manage to even partly solve the problem, that will be good news. Host cores will continue to fight for bandwidth and memory scheduling will remain important, but higher channel utilization could bring both performance and power advantages. Keep in mind that additional memory channels mean additional DRAM ICs and a significant increase in power consumption, which matters for mobile DRAM subsystems and is also very important for servers.
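Channel utilization, as used in the paragraph above, can be expressed as achieved bandwidth divided by the aggregate theoretical peak of all populated channels. The sketch below is illustrative only: the function name is hypothetical, and the "achieved" bandwidth figures are made-up placeholders chosen to show the calculation, not measurements of any real system.

```python
# Channel utilization = achieved bandwidth / (channels * per-channel peak).
# The achieved figures in the demo are placeholders, NOT measured data.
def channel_utilization(achieved_gbs: float, channels: int, per_channel_peak_gbs: float) -> float:
    """Return the fraction (0..1) of aggregate peak bandwidth actually delivered."""
    if channels <= 0 or per_channel_peak_gbs <= 0:
        raise ValueError("channels and per-channel peak must be positive")
    return achieved_gbs / (channels * per_channel_peak_gbs)


if __name__ == "__main__":
    peak = 25.6  # DDR4-3200 on a 64-bit channel, GB/s
    # Hypothetical achieved bandwidth for 1, 2 and 4 channels (illustrative only)
    for channels, achieved in [(1, 20.0), (2, 38.0), (4, 70.0)]:
        u = channel_utilization(achieved, channels, peak)
        print(f"{channels} channel(s): {u:.0%} of {channels * peak:.1f} GB/s peak")
```

If the fraction falls as channels are added, each extra channel (and its DRAM ICs and power budget) buys less real bandwidth than the previous one, which is the inefficiency the DDR5 work is said to target.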

JEDEC plans to disclose more information about the DDR5 specification at its Server Forum event in Santa Clara on June 19, 2017, and then publish the spec in 2018. It is noteworthy that JEDEC published the DDR4 specification in September 2012, whereas large DRAM makers released samples of their DDR4 chips/modules a little before that. Eventually, Intel launched the world’s first DDR4-supporting platforms in 2014, two years after the standard was finalized. If DDR5 follows the same path, we will see systems using the new type of DRAM in 2020 or 2021.

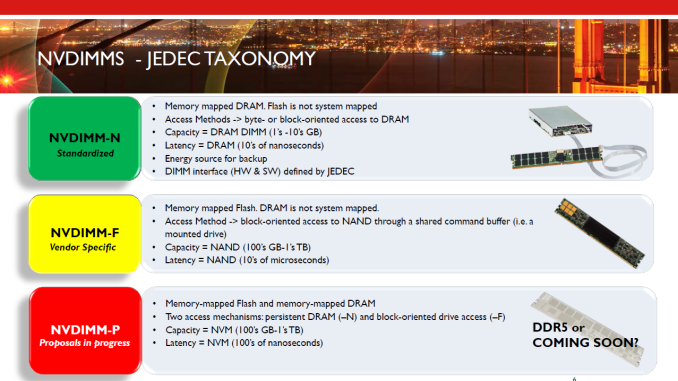

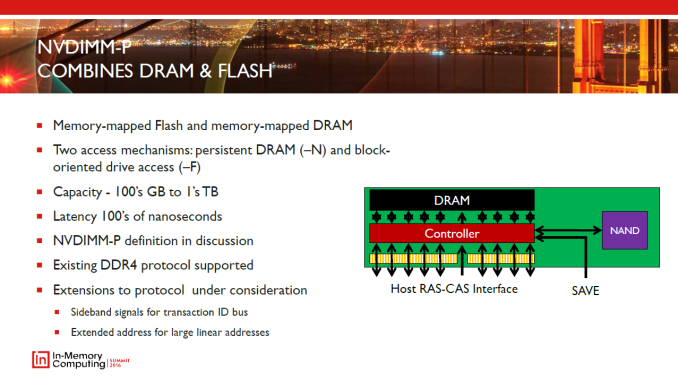

Another specification that JEDEC plans to finalize in 2018 is NVDIMM-P, which will enable high-capacity memory modules featuring both persistent memory (flash, 3D XPoint, new types of storage-class memory, etc.) and DRAM. The capacity of today’s NVDIMM-Ns is limited to that of regular server DRAM modules, but NVDIMM-P promises to change that and increase module capacities to hundreds of GBs or even TBs. NVDIMM-P is currently a work in progress, and we expect to learn more about the technology in June.

Related Reading

- GDDR5X Standard Finalized by JEDEC: New Graphics Memory up to 14 Gbps

- JEDEC Publishes HBM2 Specification as Samsung Begins Mass Production of Chips

- SK Hynix Lays Out Plans for 2017: 10nm-Class DRAM, 72-Layer 3D NAND

- Micron 2017 Roadmap Detailed: 64-layer 3D NAND, GDDR6 Getting Closer, & CEO Retiring

Source: JEDEC

38 Comments


SharpEars - Monday, April 3, 2017

Hello latency my old friend...

ImSpartacus - Monday, April 3, 2017

Remember, the faster your clock speed, the more clock cycles you can give to latency without impacting actual latency figures.

close - Tuesday, April 4, 2017

Latency increases when you count clock cycles. But in real time (seconds) you will have the same 8-9 ns latency it has had for years now. If you increase the frequency, those same 8-9 ns will translate to more clock cycles. Double the frequency, double the number of clocks until those 9 ns pass. Nothing to worry about.
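The point made in the comments above, that absolute latency stays flat while the cycle count grows with frequency, can be checked with a short sketch. It assumes the usual DDR convention that the I/O clock runs at half the data rate, so one clock period in nanoseconds is 2000 / data rate (MT/s). The function names are hypothetical, and the CL32 figure for DDR5-6400 is purely illustrative, since the article gives no DDR5 timings.

```python
import math


# DDR I/O clock = data rate / 2, so one period in ns is 2000 / data_rate_mts.
def clock_period_ns(data_rate_mts: float) -> float:
    return 2000.0 / data_rate_mts


def latency_ns(cycles: int, data_rate_mts: float) -> float:
    """Convert a latency given in clock cycles (e.g. CAS latency) to nanoseconds."""
    return cycles * clock_period_ns(data_rate_mts)


def cycles_for(latency_target_ns: float, data_rate_mts: float) -> int:
    """Smallest whole number of clock cycles that covers a fixed latency in ns."""
    return math.ceil(latency_target_ns / clock_period_ns(data_rate_mts))


if __name__ == "__main__":
    # Same absolute latency, twice the cycle count at twice the data rate
    print(latency_ns(16, 3200))  # DDR4-3200 CL16 -> 10.0
    print(latency_ns(32, 6400))  # hypothetical DDR5-6400 CL32 -> 10.0
    print(cycles_for(9, 3200), cycles_for(9, 6400))  # -> 15 29
```

Doubling the data rate halves the clock period, so a fixed ~9-10 ns of cell access time simply costs roughly twice as many cycles.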

willis936 - Monday, April 3, 2017

AMD is crying tears of joy.

vladx - Monday, April 3, 2017

Except Optane will dominate just like GSync does.

valinor89 - Monday, April 3, 2017

On enthusiast builds maybe, but like SSDs, I doubt this will be mainstream any time soon, if it ever is. Consider that only now are we seeing SSDs as a commodity in the mainstream space.

MajGenRelativity - Monday, April 3, 2017

I can see DDR5 becoming more important for low-cost devices, but I would like to see adoption of HBM. As a side note, since I haven't seen anything about it, I'll ask the question: what is the latency of HBM?

I know GDDR5 has relatively high latency compared to DDR4, but I don't know where HBM falls.

MrSpadge - Monday, April 3, 2017

All current DRAM uses the same DRAM cells, which limit the absolute latency measured in ns. It has stayed about the same for more than 15 years. What's changing is how many clock cycles those ~10 ns amount to, plus latency improvements in the controller.

MajGenRelativity - Monday, April 3, 2017

Oh, OK.

DanNeely - Monday, April 3, 2017

There've been some gains. DDR4 uses a 16x bus multiplier (vs SDRAM) and 16x parallelism to feed it. Top-of-the-line DDR4-4266 runs the RAM itself at 266 MHz, twice as fast as SDRAM (which topped out at PC-133). DDR3-3100 has the RAM itself running considerably faster, at 387 MHz; DDR2 apparently got at least as high as 1250, which puts the RAM at 312 MHz.

Those numbers suggest that there's still good potential for faster DDR4 RAM in the future, because the limiting factor appears to be the speed the data bus will run at, not the underlying RAM feeding it. The existence of DDR5 itself suggests that memory engineers are confident they can push the data buses considerably faster than they currently run (because without doing so, performance would actually regress vs DDR4).