JEDEC: DDR5 to Double Bandwidth Over DDR4, NVDIMM-P Specification Due Next Year

by Anton Shilov on April 3, 2017 9:00 AM EST

JEDEC made two important announcements last week about the future of DRAM and non-volatile DIMMs for servers. Development of both is proceeding as planned, and JEDEC intends to preview them in the middle of this year and publish the final specifications sometime in 2018.

Traditionally, each successive DRAM standard aims for consistent jumps: doubling the bandwidth per pin, reducing power consumption by dropping Vdd/Vddq voltage, and increasing the maximum capacity of memory ICs (integrated circuits). DDR5 will follow this trend: JEDEC confirmed last week that it will double the bandwidth and density of DDR4 while improving performance and power efficiency.

Given that the official DDR4 standard covers chips with capacities of up to 16 Gb and per-pin data rates of 2133 to 3200 MT/s, doubling that means 32 Gb ICs with data rates of 4266 to 6400 MT/s per pin. If DDR5 retains a 64-bit interface for memory modules, we will see single-sided 32 GB DDR5-6400 DIMMs with 51.2 GB/s of bandwidth in the DDR5 era. Speaking of modules, it is interesting to note that, among other things, DDR5 promises “a more user-friendly interface”, which probably means a new retention mechanism or increased design configurability.
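The module figures above follow from simple arithmetic, which can be sketched as below. This is only an illustration, not anything taken from JEDEC: the function names are hypothetical, and the capacity calculation assumes a single rank of x8 DRAM chips filling a 64-bit bus with no ECC chip, which the article does not state.

```python
def peak_bandwidth_gbs(data_rate_mts: int, bus_width_bits: int = 64) -> float:
    """Peak theoretical module bandwidth in GB/s (decimal gigabytes).

    Each transfer moves bus_width_bits / 8 bytes, so
    MT/s * bytes per transfer gives MB/s, and dividing by 1000 gives GB/s.
    """
    bytes_per_transfer = bus_width_bits // 8
    return data_rate_mts * bytes_per_transfer / 1000


def module_capacity_gb(chip_density_gbit: int,
                       bus_width_bits: int = 64,
                       chip_width_bits: int = 8) -> float:
    """Capacity in GB of one rank of DRAM chips.

    Assumption (not from the article): x8 chips, one rank, no ECC,
    so a 64-bit bus needs 64 / 8 = 8 chips.
    """
    chips_per_rank = bus_width_bits // chip_width_bits
    return chips_per_rank * chip_density_gbit / 8  # 8 bits per byte


if __name__ == "__main__":
    # DDR4 ceiling versus the doubled DDR5 figures discussed above
    for name, rate, density in [("DDR4-3200", 3200, 16), ("DDR5-6400", 6400, 32)]:
        print(name, peak_bandwidth_gbs(rate), "GB/s,",
              module_capacity_gb(density), "GB per rank")
```

Under these assumptions, a DDR5-6400 module built from eight 32 Gb chips works out to 51.2 GB/s and 32 GB, matching the numbers in the text, while the DDR4-3200 equivalent with 16 Gb chips is exactly half of each.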

Samsung's DDR4 memory modules. Image for illustrative purposes only.

Part of the DDR5 specification will be improved channel use and efficiency. Virtually all modern random access memory sub-systems are single-channel, dual-channel or multi-channel, but the actual memory bandwidth of such systems does not increase linearly with the number of channels (i.e., channel utilization decreases). Part of the problem is that host cores fight for DRAM bandwidth, and memory scheduling is a challenge for CPU and SoC developers. Right now we do not know how DRAM developers at JEDEC plan to address the memory channel efficiency problem at the specification level, but if they manage to solve it even partly, that will be good news. Host cores will continue to fight for bandwidth and memory scheduling will remain important, but higher channel utilization could bring both performance and power advantages. Keep in mind that additional memory channels mean additional DRAM ICs and a significant increase in power consumption, which matters for mobile DRAM subsystems and is also very important for servers.

JEDEC plans to disclose more information about the DDR5 specification at its Server Forum event in Santa Clara on June 19, 2017, and then publish the spec in 2018. It is noteworthy that JEDEC published the DDR4 specification in September 2012, whereas large DRAM makers released samples of their DDR4 chips/modules a little before that. Eventually, Intel launched the world’s first DDR4-supporting platforms in 2014, two years after the standard was finalized. If DDR5 follows the same path, we will see systems using the new type of DRAM in 2020 or 2021.

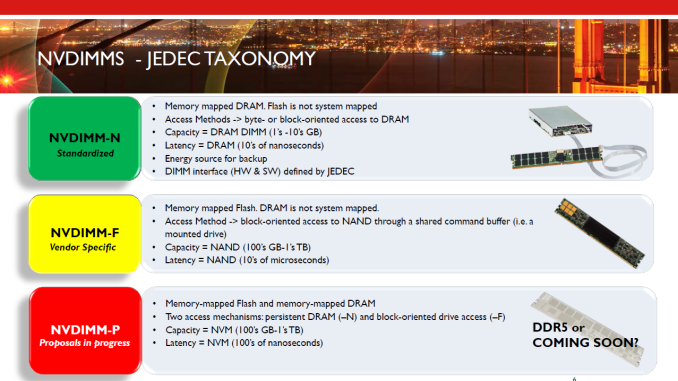

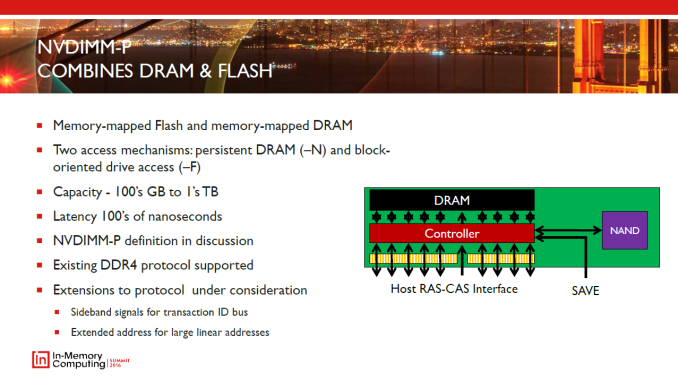

Another specification that JEDEC plans to finalize in 2018 is NVDIMM-P, which will enable high-capacity memory modules that combine persistent memory (flash, 3D XPoint, new types of storage-class memory, etc.) with DRAM. The capacity of today’s NVDIMM-Ns is limited to that of regular server DRAM modules, but NVDIMM-P promises to change that and increase module capacities to hundreds of GBs or even to TBs. NVDIMM-P is currently a work in progress, and we are going to learn more about the tech in June.

Related Reading

- GDDR5X Standard Finalized by JEDEC: New Graphics Memory up to 14 Gbps

- JEDEC Publishes HBM2 Specification as Samsung Begins Mass Production of Chips

- SK Hynix Lays Out Plans for 2017: 10nm-Class DRAM, 72-Layer 3D NAND

- Micron 2017 Roadmap Detailed: 64-layer 3D NAND, GDDR6 Getting Closer, & CEO Retiring

Source: JEDEC

38 Comments

close - Tuesday, April 4, 2017 - link

After retrieving the first word you have pipelining to improve the latency. First word latency is the same 9ns but it drops significantly for retrieving 4 words (~10ns) or 8 words (~12ns).

zodiacfml - Monday, April 3, 2017 - link

Not holding my breath. HBM memory is coming to AMD's APUs while Intel announced to utilize such for Kaby Lake. Impact of RAM speeds will be less crucial

Lolimaster - Monday, April 3, 2017 - link

Make new DIMMs in socket form, so just place "packages" of ram on top of the mobo, instead of this slot mess. Even if you got an SSD you can crash the PC with a small hit to the side of a tower PC.

With so much density you won't need more than 2 "sockets" for consumer except really high end mobos.

2x16GB

2x32GB

REAL High end mobos (no gamer sh*t gizmos)

4x32GB

AMD's AM4 demonstrated how much useless space you get on regular mobos with more and more things being integrated on the cpu/SOC.

close - Tuesday, April 4, 2017 - link

I think you're mixing up the motherboard segment with the target audience. Gaming motherboards can very well be high end. The "REAL High end mobos" you're referring to are workstation motherboards. They can be just as high end as a gaming motherboard but they're simply addressed to another type of workload. And they're usually E-ATX, which can accommodate *a lot* of slots.

It has nothing to do with the pricing or quality of the motherboard. In the consumer space you'll be hard pressed to find a high end motherboard that doesn't hint at gaming. This doesn't make them less "high-end".

Lolimaster - Monday, April 3, 2017 - link

We are still with the same 30 year old motherboard design. socketed ram is much more reliable than slot type of ram.

close - Tuesday, April 4, 2017 - link

When you say motherboard design you mean PCB-type stuff with components, sockets, and slots mounted on? The current popular format, ATX, was introduced by Intel ~20 years ago. And while the general layout is similar, components on mobos have come and gone. But the ones that stayed remained the same for a reason.

In case you're wondering Intel also introduced the BTX format 10-12 years ago. The supported memory is still DIMM but I guess you can already tell how popular it was. It takes up very little space and has no reliability issues.

Also lol@socketed RAM... Everybody knows bolt-on and screw in memory are better o_O.

CaedenV - Tuesday, April 4, 2017 - link

@JEDEC, Intel and others,

As a consumer I don't really want or need faster RAM. It is time to remove the RAM/storage barrier and move to something like HBM. Even if it is technically slower, the ability to simply flag software and files on and off rather than having to spool them from storage to RAM would be amazing. Plus the instant off/on possibilities of the system would be a huge game changer. RAM may still be a huge necessity in servers and heavy write-intensive markets, but for my cell phone and laptop where I deal with word docs, spreadsheets, web browsers, etc. there is little to no point in having an ultra-fast memory storage pool.

Pork@III - Tuesday, April 4, 2017 - link

I just wait for RAM which "thousand times faster than today proposals". Ooops this type of RAM was delayed from year 2017 to 2055.