A Timely Discovery: Examining Our AMD 2nd Gen Ryzen Results

by Ian Cutress & Ryan Smith on April 25, 2018 11:15 AM EST

Last week, we published our AMD 2nd Gen Ryzen Deep Dive, covering our testing and analysis of the latest generation of processors to come out from AMD. Highlights of the new products included better cache latencies, faster memory support, an increase in IPC, an overall performance gain over the first generation products, new power management methods for turbo frequencies, and very competitive pricing.

In our review, we changed some of our testing. The big differences were two-fold: the jump from Windows 10 Pro RS2 to Windows 10 Pro RS3, and the inclusion of the Spectre and Meltdown patches to mitigate the potential security issues. These patches are still being rolled out by motherboard manufacturers, with the latest platforms being first in that queue. For our review, we tested the new processors with the latest OS updates and microcode updates, and also re-tested the Intel Coffee Lake processors. Due to time restrictions, we reused our older Ryzen 1000-series results.

Due to the tight deadline of our testing and results, we pushed both our CPU and gaming tests live without as much formal analysis as we typically like to do. All the parts were competitive; however, it quickly became clear that some of our results were not aligned with those from other media. Initially we were under the impression that this was a result of the Spectre and Meltdown (or Smeltdown) updates, as we were one of the few media outlets to go back and perform retesting under the new standard.

Nonetheless, we decided to undertake an extensive internal audit of our testing to ensure that our results were accurate and completely reproducible or, failing that, to understand why our results differed. No stone was left unturned: hardware, software, firmware, tweaks, and code. As a result of that process we believe we have found the reason for our testing being so different from the results of others, and interestingly it opened a sizable can of worms we were not expecting.

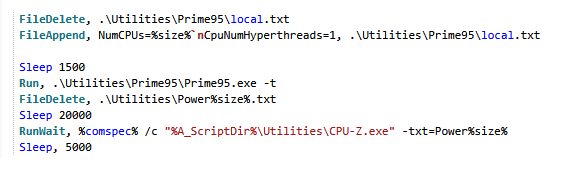

An extract from our Power testing script

What our testing identified is that the source of the issue is actually down to timers. Windows uses timers for many things, such as synchronization or ensuring linearity, and there are sets of software relating to monitoring and overclocking that require the timer with the most granularity; specifically, they often require the High Precision Event Timer (HPET). HPET is very important, especially when it comes to determining if 'one second' of PC time is equivalent to 'one second' of real-world time. The way that Windows 8 and Windows 10 implement their timing strategy, compared to Windows 7, means that in rare circumstances the system time can be liable to clock shift over time. This is often highly dependent on how the motherboard manufacturer implements certain settings. HPET is a motherboard-level timer that, as the name implies, offers a very high level of timer precision beyond what other PC timers can provide, and can mitigate this issue. This timer has been shipping in PCs for over a decade, and under normal circumstances it should not be anything but a boon to Windows.
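
To make "granularity" concrete, the sketch below estimates the smallest step a clock can actually report by reading it repeatedly and keeping the smallest nonzero difference. It is only an illustration and not part of our test scripts: the function name `observed_granularity_ns` and the sample count are our own assumptions. On Windows, Python's `time.perf_counter_ns` is backed by `QueryPerformanceCounter`, and which hardware timer sits behind that call is decided by the OS and platform.

```python
import time


def observed_granularity_ns(clock=time.perf_counter_ns, samples=10_000):
    """Return the smallest nonzero step seen between consecutive clock reads.

    Hypothetical helper for illustration. Returns None if the clock never
    advanced during the sampling window.
    """
    smallest = None
    previous = clock()
    for _ in range(samples):
        current = clock()
        delta = current - previous
        # Ignore repeated readings; only real forward steps count.
        if delta > 0 and (smallest is None or delta < smallest):
            smallest = delta
        previous = current
    return smallest


if __name__ == "__main__":
    info = time.get_clock_info("perf_counter")
    print(f"implementation: {info.implementation}")
    print(f"reported resolution: {info.resolution:.3e} s")
    print(f"smallest observed step: {observed_granularity_ns()} ns")
```

The observed step includes the cost of the Python call itself, so it is an upper bound on the timer's real granularity rather than a precise measurement.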

However, it sadly appears that reality diverges from theory – sometimes extensively so – and that our CPU benchmarks for the Ryzen 2000-series review were caught in the middle. Instead of being a benefit to testing, what our investigation found is that when HPET is forced as the sole system timer, it can sometimes be a hindrance to system performance, particularly gaming performance. Worse, because HPET is implemented differently on different platforms, the actual impact of enabling it isn't even consistent across vendors. This means the effects of using HPET can vary from system to system, depending on the implementation.
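
One rough way to check whether timer queries themselves are expensive on a given system is to time a tight loop of them. The sketch below is an assumption-laden illustration, not the method used in our review: `mean_query_cost_ns` is a hypothetical helper, and the figure it returns includes Python interpreter and loop overhead, so it is only meaningful when comparing two timer configurations on the same machine.

```python
import time


def mean_query_cost_ns(clock=time.perf_counter_ns, calls=200_000):
    """Average elapsed time per clock() call in nanoseconds.

    Hypothetical helper for illustration. The result includes loop and
    interpreter overhead, so treat it as a relative figure only.
    """
    if calls <= 0:
        raise ValueError("calls must be positive")
    start = time.perf_counter_ns()
    for _ in range(calls):
        clock()
    elapsed = time.perf_counter_ns() - start
    return elapsed / calls


if __name__ == "__main__":
    print(f"mean cost per timer query: {mean_query_cost_ns():.1f} ns")
```

Running this once with the platform's default timer setup and once with HPET forced would show whether the per-query cost changes on that particular system.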

And that brings us to the state of HPET, our Ryzen 2000-series review, and CPU benchmarking in general. As we'll cover in the next few pages, HPET plays a very necessary and often very beneficial role in system timer accuracy; a role important enough that it's not desirable to completely disable HPET – and indeed in many systems this isn't even possible – all the while certain classes of software such as overclocking & monitoring software may even require it. However, for a few different reasons it can also be a drain on system performance, and as a result HPET shouldn't always be used. So let's dive into the subject of hardware timers, precision, Smeltdown, and how it all came together to make a perfect storm of volatility for our Ryzen 2000-series review.

242 Comments

Cooe - Wednesday, April 25, 2018 - link

Chris Hook was a marketing guy through and through and was behind some of AMD's worst marketing campaigns in the history of the company. Him leaving is total non-issue in my eyes and potentially even a plus assuming they can replace him with someone that can actually run good marketing. That's always been one of AMD's most glaring weak spots.

HilbertSpace - Wednesday, April 25, 2018 - link

Thanks for the great follow up article. Very informative.

Aichon - Wednesday, April 25, 2018 - link

I laud your decision to reflect default settings going forward, since the purpose of these reviews is to give your reader a sense of how these chips compare to each other in various forms of real-world usage.

As to the closing question of how these settings should be reflected to readers, I think the ideal case (read: way more work than I'm actually expecting you to do) would be that you extend the Benchmarking Setup page in future reviews to include mention of any non-default settings you use, with details about which setting you chose, why you set it that way, and, optionally, why someone might want to set it differently, as well as how it might impact them. Of course, that's a LOAD of work, and, frankly, a lot of how it might impact other users in unknown workflows would be speculation, so what you end up doing should likely be less than that. But doing it that way would give us that information if we want it, would tell us how our usage might differ from yours, and, for any of us who don't want that information, would make it easy to skip past.

phoenix_rizzen - Wednesday, April 25, 2018 - link

Would be interesting to see a series of comparisons for the Intel CPU:

No Meltdown, No Spectre, HPET default

No Meltdown, No Spectre, HPET forced

Meltdown, No Spectre, HPET default

Meltdown, No Spectre, HPET forced

To compare to the existing Meltdown, Spectre, HPET default/forced results.

Will be interesting to see just what kind of performance impact Meltdown/Spectre fixes really have.

Obviously, going forward, all benchmarks should be done with full Meltdown/Spectre fixes in place. But it would still be interesting to see the full range of their effects on Intel CPUs.

lefty2 - Wednesday, April 25, 2018 - link

Yes, I'd like to second this suggestion ;) . No one has done any proper analysis of the Meltdown/Spectre performance on Windows since Intel and AMD released the final microcode mitigations (i.e. post April 1st).

FreckledTrout - Wednesday, April 25, 2018 - link

I agree as the timing makes this very curious. One would think this would have popped up before this review. I get this gut feeling the HPET being forced is causing a much greater penalty with the Meltdown and Spectre patches applied.

Psycho_McCrazy - Wednesday, April 25, 2018 - link

Thanks to Ryan and Ian for such a deep dive into the matter and for finding out what the issue was...

Even though this changes the gaming results a bit, it still does not change the fact that the 2700x is a very very competent 4k gamer cpu.

Zucker2k - Wednesday, April 25, 2018 - link

You mean gpu-bottle-necked gaming? Sure!

Cooe - Wednesday, April 25, 2018 - link

But to be honest, the 8700K's advantage when totally CPU limited isn't all that fantastic either. Sure, there are still a handful of titles that put up notable 10-15% advantages, but most are now well in the realm of 0-10%, with many titles now in a near dead heat, which compared to the Ryzen 7 vs Kaby Lake launch situation is absolutely nuts. Hell, even when comparing the 1st Gen chips today vs then, the gaps have all shrunk dramatically with no changes in hardware, and this slow & steady trend shows no signs of petering out (Zen in particular is an arch design extraordinarily ripe for software level optimizations). Whereas there were a good number of build/use scenarios where Intel was the obviously superior option vs 1st Gen Ryzen, with how much the gap has narrowed those have now shrunk into a tiny handful of rather bizarre niches.

These being those first & foremost gamers who use a 1080p 144/240Hz monitor with at least a GTX 1080/Vega 64. For most everyone with more realistic setups like 1080p 60/75Hz with a mid-range card or a high end card paired with 1440p 60/144Hz (or 4K 60Hz), the Intel chip is going to have all of no gaming performance advantage whatsoever, while being slower to a crap ton slower than Ryzen 2 in any sort of multi-tasking scenario or decently threaded workload(s). And unlike Ryzen's notable width advantage, Intel's general single-thread perf advantage is most often near impossible to notice without both systems side by side and a stopwatch in hand, while running a notoriously single-thread heavy load like some serious Photoshop (both are already so fast on a per-core basis that you pretty much deliberately have to seek out situations where there'll be a noticeable difference), whereas AMD's extra cores/threads & superior SMT become readily apparent as soon as you start opening & running more and more things concurrently. (All modern OS' are capable of scaling to as many cores/threads as you can find them).

Just my 2 cents at least. While the i7-8700K was quite compelling for a good number of use-cases vs Ryzen 1, it just.... well isn't vs Ryzen 2.

Tropicocity - Monday, April 30, 2018 - link

The thing is, any gamer (read: gamer!) looking to get a 2700x or an 8700k is very likely to be pairing it with at least a GTX 1070 and more than likely either a 1080/144, a 1440/60, or a 1440/144 monitor. You don't generally spend $330-$350/ £300+ on a CPU as a gamer unless you have sufficient pixel-pushing hardware to match with it.

Those who are still on 1080/60 would be much more inclined to get more 'budget' options, such as a Ryzen 1400-1600, or an 8350k-8400.

There is STILL an advantage at 1440p, which these results do not show. At 4k, yes, the bottleneck becomes almost entirely the GPU, as we're not currently at the stage where that resolution is realistically doable for the majority.

Also, as a gamer, you shouldn't neglect the single-threaded scenario. There are a few games that benefit from extra cores and threads, sure, but if you pick the most played games in the world, you'll come to see that the only thing they appreciate is clock speed and single (occasionally dual) threaded workloads. League of Legends, World of Warcraft, Fortnite, CS:GO etc etc.

The games that are played by more people globally than any other, will see a much better time being played on a Coffee Lake CPU compared to a Ryzen.

You do lose the extra productivity, you won't be able to stream at 10mbit (Twitch is capped to 6 so it's fine), but you will certainly have improvements when you're playing the game for yourself.

Don't get me wrong here; I agree that Ryzen 2 vs Coffee Lake is a lot more balanced and much closer in comparison than anything in the past decade in terms of Intel vs AMD, but to say that gamers will see "no performance advantage whatsoever" going with an Intel chip is a little too farfetched.