The NVIDIA Turing GPU Architecture Deep Dive: Prelude to GeForce RTX

by Nate Oh on September 14, 2018 12:30 PM EST

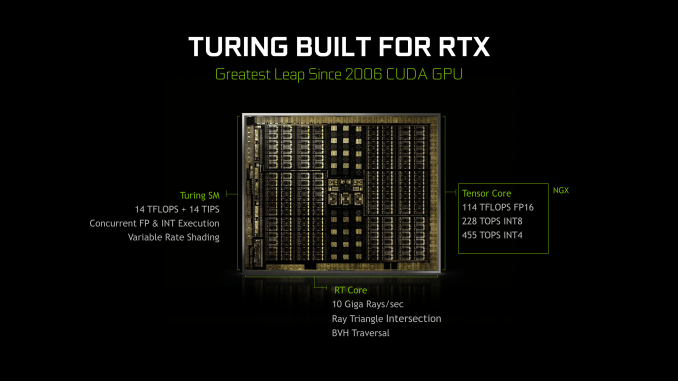

It’s been roughly a month since NVIDIA's Turing architecture was revealed, and if the GeForce RTX 20-series announcement a few weeks ago has clued us in on anything, it is that real time raytracing was important enough for NVIDIA to drop “GeForce GTX” for “GeForce RTX” and completely change the tenor of how they talk about gaming video cards. Since then, it’s become clear that Turing and the GeForce RTX 20-series have a lot of moving parts: RT Cores, real time raytracing, Tensor Cores, AI features (such as DLSS), and raytracing APIs. All of it is coming together to set a future direction for both game development and GeForce cards.

In a significant departure from past launches, NVIDIA has broken up the embargoes around the unveiling of their latest cards into two parts: architecture and performance. For the first part, today NVIDIA has finally lifted the veil on many of the Turing architecture details. There are so many that some interesting aspects have yet to be explained, and some we’ll need to dig into alongside objective data. But it also gives us an opportunity to pick apart the namesake of GeForce RTX: raytracing.

While we can't discuss real-world performance until next week, for real time ray tracing it is almost a moot point. In short, there's no software to use with it right now. Accessing Turing's ray tracing features requires using the DirectX Raytracing (DXR) API, NVIDIA's OptiX engine, or the unreleased Vulkan ray tracing extensions. For use in video games, it essentially narrows down to just DXR, which has yet to be released to end-users.

The timing, however, is better than it seems. Launching a year or so later could mean facing competing products that are competitive in traditional rasterization. And given NVIDIA's traditionally strong ecosystem with developers and middleware (e.g. GameWorks), they would want to leverage high-profile games for drumming up consumer support for hybrid rendering, in which both ray tracing and rasterization are used.

So as we've said before, with hybrid rendering, NVIDIA is gunning for nothing less than a complete paradigm shift in consumer graphics and gaming GPUs. And while real time ray tracing is the 'holy grail' of computer graphics, NVIDIA has plenty of other potential motivations beyond graphical purism. Like all high-performance silicon design firms, NVIDIA is feeling the pressure of the slow death of Moore's Law, for which fixed-function but versatile hardware provides a solution. And while NVIDIA compares the Turing 20-series to the Pascal 10-series, Turing has much more in common with Volta, as both sit in the same generational compute family (sm_75 and sm_70). That is an interesting development, as both NVIDIA and AMD have stated that GPU architecture will soon diverge into separate designs for gaming and compute. Not to mention that making a new standard out of hybrid rendering would hamper competitors from either catching up or joining the market.
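To make the "same generational compute family" point concrete, here is a minimal sketch in Python that parses compute-capability target strings such as sm_75 and compares their major versions. The sm_61 entry for consumer Pascal is added for contrast and does not come from the text above. The helper names (`parse_sm`, `same_family`) and the lookup table are hypothetical and purely illustrative.

```python
import re
from typing import Tuple

# Illustrative lookup only, not an exhaustive list of targets.
# sm_70 and sm_75 are the targets named in the article; sm_61 (consumer
# Pascal) is an added assumption for contrast.
ARCH_BY_TARGET = {
    "sm_61": "Pascal",
    "sm_70": "Volta",
    "sm_75": "Turing",
}

def parse_sm(target: str) -> Tuple[int, int]:
    """Split a two-digit target such as 'sm_75' into (major, minor)."""
    match = re.fullmatch(r"sm_(\d)(\d)", target)
    if match is None:
        raise ValueError(f"unrecognized target: {target!r}")
    return int(match.group(1)), int(match.group(2))

def same_family(a: str, b: str) -> bool:
    """Two targets share a generational family if their major versions match."""
    return parse_sm(a)[0] == parse_sm(b)[0]

if __name__ == "__main__":
    for other in ("sm_70", "sm_61"):
        print(
            ARCH_BY_TARGET["sm_75"], "vs", ARCH_BY_TARGET[other],
            "-> same family:", same_family("sm_75", other),
        )
```

By this measure Turing and Volta both fall under major version 7, while consumer Pascal falls under major version 6.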

But real time ray tracing being what it is, it was always a matter of time before it became feasible, either through NVIDIA or another company. DXR, for its part, doesn't specify the implementations for running its hardware accelerated layer. What adds to the complexity is the branding and marketing of the Turing-related GeForce RTX ecosystem, as well as the inclusion of Tensor Core accelerated features that are not inherently part of hybrid rendering, but are part of a GPU architecture that has now made its way to consumer GeForce.

For the time being though, the GeForce RTX cards are not released yet, and we can’t talk about any real-world data. Nevertheless, the context of hybrid rendering and real time ray tracing is central to Turing and to GeForce RTX, and it will remain so as DXR is eventually released and consumer-relevant testing methodology is established for it. In light of these factors, as well as Turing information we’ve yet to fully analyze, today we’ll focus on the Turing architecture and how it relates to real-time raytracing. And be sure to stay tuned for the performance review next week!

111 Comments


willis936 - Sunday, September 16, 2018 - link

Also in case there's anyone else in the signal integrity business reading this: does it bug anyone else that eye diagrams are always heatmaps without a colorbar legend? When I make eye diagrams I put a colorbar legend in to signify hits per mV*ps area. The only thing I see T&M companies do is specify how many samples are in the eye diagram but I don't think that's enough for easy apples to apples comparisons.

Manch - Sunday, September 16, 2018 - link

No, bc heatmaps are std.

willis936 - Monday, September 17, 2018 - link

A heatmap would still be used. The color alone has no meaning unless you know how many hits there are total. Even that is useless if you want to build a bathtub. The colorbar would actually tell you how many hits are in a region. This applies to all heatmaps.

casperes1996 - Sunday, September 16, 2018 - link

Wow... I just started a computer science education recently, and I was recently tasked with implementing an efficient search algorithm that works on infinitely long data streams. I made it so it first checks for an upper boundary in the array (and updates the lower boundary based on the upper one) and then does a binary search on that subarray. I feel like there's no better time to read this article since it talks about the BVH. I felt so clever when I read it and thought "That sounds a lot like a binary search" before the article then mentioned it itself!

ballsystemlord - Sunday, September 16, 2018 - link

You made only 1 typo! Great job!

"In any case, as most silicon design firms hvae leapfrogging design teams,"

Should be "have":

"In any case, as most silicon design firms have leapfrogging design teams,"

There is one more problem (a stray 2 letter word) in your article, but I forgot where it was. Sorry.

Sherlock - Monday, September 17, 2018 - link

The fact that Microsoft has released a Ray Tracing specific API seems to suggest that the next XBox will support it. And considering AMD is the CPU/GPU partner for the next gen XBox, it seems highly likely that the next gen AMD GPUs will have dedicated Ray Tracing hardware as well. I expect meaningful use of these hardware features only once the next gen console hardware is released, which is due in the next 2-3 years. RTX seems a wasteful expenditure for the end-consumer now. The only motivation for NVidia to release this now is so that consumers don't feel as if they are behind the curve against AMD. This lends some credence to the rumors that Nvidia will release a "GTX" line and expect it to be their volume selling product, with the RTX as proof-of-concept for early adopters.

bebby - Monday, September 17, 2018 - link

Very good point from Sherlock. I also believe that Sony and Microsoft will be the ones defining what kind of hardware features will be used and which will not.

In general, with Moore's Law slowing down, progress gets slower and the incremental improvements are minimal. As a result, there is less competition, prices go up, and there is no longer any "wow" effect coming with a new GPU. (The last time I had this was with the GTX 470.)

My disappointment lies with the power consumption. Nvidia should focus more on power consumption rather than performance if they ever want to have a decent market share in tablets/phablets.

levizx - Monday, September 17, 2018 - link

Actually the efficiency increased only 18%, not 23%. 150% / 127% - 1 = 18.11%. You can't just take 50% - 27% = 23%; the efficiency increase is measured against "without optimization", i.e. 127%.

rrinker - Monday, September 17, 2018 - link

91 comments (as I type this) and most of them are arguing over "boo hoo, it's too expensive". Well, if it's too expensive, don't buy it. Why complain? Oh yeah, because Internet. This is NOT just the same old GPU, just a little faster. This is something completely different, or at least, something with significant differences to the current crop of traditional GPUs. There's no surprise that it's going to be more expensive. If you are shocked at the price then you really MUST be living under a rock. The first new ANYTHING is always premium priced: there is no competition, it's a unique product, and there are a lot of development costs involved. CAN they sell it for less? Most likely, but their job is not to sell it for the lowest possible profit, it's to sell it for what the market will bear. Simple as that. Don't like it, don't buy it. Absolutely NO ONE needs the latest and greatest on launch day. I won't be buying one of these, I do nothing that would benefit from the new features. Maybe in a few years, when everyone has raytracing, and the games I want to play require it, then I'll buy a card like this. Griping about pricing on something you don't need: priceless.

eddman - Tuesday, September 18, 2018 - link

... except this is the first time we've had such a massive price jump in the past 18 years. Even the 8800 series, which according to Jensen was the biggest technology jump before the 20 series, launched at about the same MSRP as the last gen.

It does HYBRID, partial, limited ray-tracing, and? How does that justify such a massive price jump? If these cards are supposed to be the replacements for Pascal cards, then they are damn overpriced COMPARED to them. This is not how generational pricing is supposed to be.

If these are supposed to be a new category, then why name them like that? Why not go with 2090 Ti, 2090, or something along those lines?

Since they haven't done that, and considering they compare these cards left and right to Pascal cards even in regular rasterized games, I have to conclude they consider them generational replacements.