The NVIDIA Turing GPU Architecture Deep Dive: Prelude to GeForce RTX

by Nate Oh on September 14, 2018 12:30 PM EST

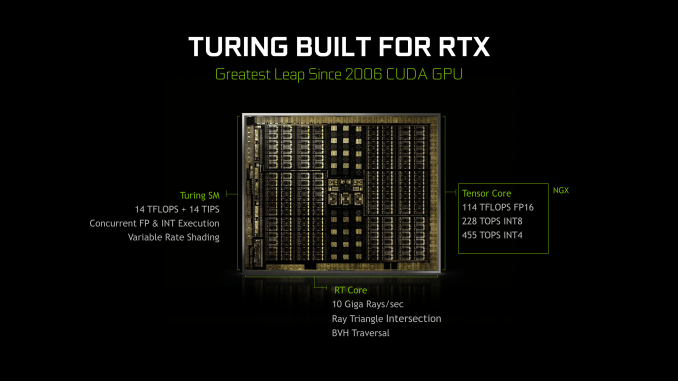

It’s been roughly a month since NVIDIA's Turing architecture was revealed, and if the GeForce RTX 20-series announcement a few weeks ago has clued us in on anything, it is that real time raytracing was important enough for NVIDIA to drop “GeForce GTX” for “GeForce RTX” and completely change the tenor of how they talk about gaming video cards. Since then, it’s become clear that Turing and the GeForce RTX 20-series have a lot of moving parts: RT Cores, real time raytracing, Tensor Cores, AI features (e.g. DLSS), and raytracing APIs. All of it is coming together to set a future direction for both game development and GeForce cards.

In a significant departure from past launches, NVIDIA has broken up the embargoes around the unveiling of their latest cards into two parts: architecture and performance. For the first part, today NVIDIA has finally lifted the veil on many of the Turing architecture details. There are so many, in fact, that some interesting aspects have yet to be explained, and some we’ll need to dig into alongside objective data. But it also gives us an opportunity to pick apart the namesake of GeForce RTX: raytracing.

While we can't discuss real-world performance until next week, for real time ray tracing it is almost a moot point. In short, there's no software to use with it right now. Accessing Turing's ray tracing features requires using the DirectX Raytracing (DXR) API, NVIDIA's OptiX engine, or the unreleased Vulkan ray tracing extensions. For use in video games, it essentially narrows down to just DXR, which has yet to be released to end-users.

The timing, however, is better than it seems. Launching a year or so later could mean facing competing products that are competitive in traditional rasterization. And given NVIDIA's traditionally strong ecosystem with developers and middleware (e.g. GameWorks), they would want to leverage high-profile games to drum up consumer support for hybrid rendering, in which both ray tracing and rasterization are used.

So as we've said before, with hybrid rendering, NVIDIA is gunning for nothing less than a complete paradigm shift in consumer graphics and gaming GPUs. And while real time ray tracing is the 'holy grail' of computer graphics, NVIDIA has plenty of other potential motivations beyond graphical purism. Like all high-performance silicon design firms, NVIDIA is feeling the pressure of the slow death of Moore's Law, to which fixed function but versatile hardware offers one answer. And although NVIDIA compares the Turing 20-series to the Pascal 10-series, Turing has much more in common with Volta, as the two sit in the same generational compute family (sm_75 and sm_70, respectively). That is an interesting development, as both NVIDIA and AMD have stated that GPU architecture will soon diverge into separate designs for gaming and compute. Not to mention that making a new standard out of hybrid rendering would make it harder for competitors to either catch up or join the market.

But real time ray tracing being what it is, it was always a matter of time before it became feasible, either through NVIDIA or another company. DXR, for its part, doesn't specify the implementations for running its hardware accelerated layer. What adds to the complexity is the branding and marketing of the Turing-related GeForce RTX ecosystem, as well as the inclusion of Tensor Core accelerated features that are not inherently part of hybrid rendering, but are part of a GPU architecture that has now made its way to consumer GeForce.

For the time being though, the GeForce RTX cards are not released yet, and we can’t talk about any real-world data. Nevertheless, the context of hybrid rendering and real time ray tracing is central to Turing and to GeForce RTX, and it will remain so as DXR is eventually released and consumer-relevant testing methodology is established for it. In light of these factors, as well as Turing information we’ve yet to fully analyze, today we’ll focus on the Turing architecture and how it relates to real-time raytracing. And be sure to stay tuned for the performance review next week!

111 Comments

Yojimbo - Saturday, September 15, 2018 - link

Of course he meant giga as in billion. Of course it's simple. But where did you see "gigarays/sec" before? You didn't. So as far as you know, it is an NVIDIA-made term. He said it that way because it's easier to talk about it that way, and because NVIDIA is making a marketing term out of it. And he introduced it with a joke.

edzieba - Saturday, September 15, 2018 - link

'Giga' is an SI prefix. You put it in front of any unit to indicate 10^9 of that unit.

eddman - Saturday, September 15, 2018 - link

Why does it even matter?! As I pointed out, I meant to write "rays/sec". I forgot to take out the giga part after copy/paste. I'm pretty sure a lot of ray tracing developers have uttered the words "giga rays" before Jensen.

markiz - Monday, September 17, 2018 - link

Well, car makers are also using kilowatts for engines. Bastards.

Yojimbo - Saturday, September 15, 2018 - link

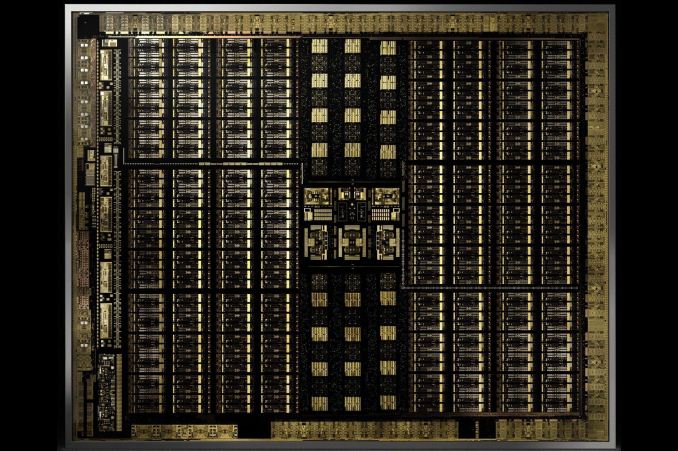

Where do you get these die area estimates from?

edzieba - Saturday, September 15, 2018 - link

If you compare scaled die shots of Turing to Pascal (GP102), isolate an SM, and assume each CUDA core occupies the same die area, then the combination of Tensor and RT cores takes around 24% of the die area. This is more of an upper bound, as the CUDA cores are likely to be larger due to the concurrent execution capability, there is more space in the SM taken by memory, and more uncore taken by the NVLink interfaces.

Yojimbo - Saturday, September 15, 2018 - link

Where are these die shots? Paste them? I think that people are looking at schematics, not die shots.

edzieba - Saturday, September 15, 2018 - link

Nvidia posted them (surprisingly correctly scaled) as part of the Turing unveil presentation.

Yojimbo - Saturday, September 15, 2018 - link

Can you link me to them and to an analysis of them?

808Hilo - Sunday, September 16, 2018 - link

So far 10% better than 1080ti and 15% over 1080. Really not worth it. Better spend money on MB, new chip, ram and fast SSD if coming from an older PC.