The NVIDIA GeForce RTX 2080 Ti & RTX 2080 Founders Edition Review: Foundations For A Ray Traced Future

by Nate Oh on September 19, 2018 5:15 PM EST

Posted in: GPUs, Raytrace, GeForce, NVIDIA, DirectX Raytracing, Turing, GeForce RTX

Meet The New Future of Gaming: Different Than The Old One

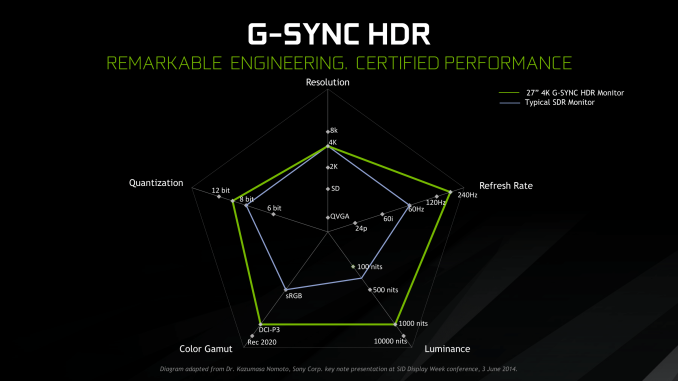

Up until last month, NVIDIA had been pushing a different, more conventional future for gaming and video cards, perhaps best exemplified by the recent launch of 27-inch 4K G-Sync HDR monitors, courtesy of Asus and Acer. The specifications of those displays represented, and still represent, the aspirational capabilities of PC gaming graphics: 4K resolution, a 144 Hz refresh rate with G-Sync variable refresh, and high-quality HDR. The future was maxing out graphics settings on a game with high visual fidelity, enabling HDR, and rendering at 4K with triple-digit average framerates on a large screen. That target was not achievable with current performance, and certainly not with single-GPU cards. In the past, multi-GPU configurations were a stronger option provided that stuttering was not an issue, but recent years have seen both AMD and NVIDIA take a step back from CrossFireX and SLI, respectively.

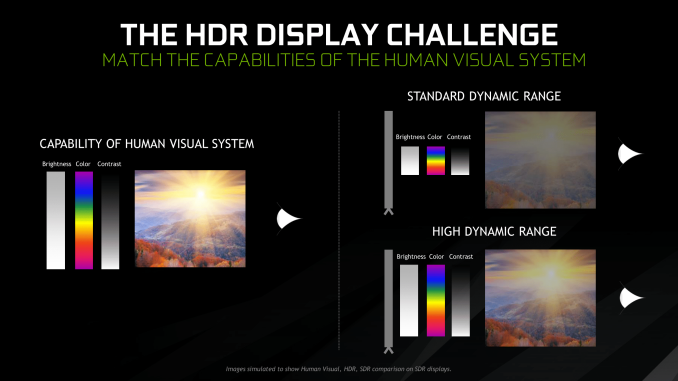

Particularly with HDR, NVIDIA expressed a qualitative rather than quantitative enhancement in the gaming experience. Faster framerates and higher resolutions were better-known quantities, easily demoed and with more intuitive benefits: higher resolution means more room for detail, and higher, more even framerates mean smoother gameplay and video. That said, in the past there was a perception of 30fps as cinematic, and 1080p remains stubbornly popular today. Variable refresh rate technology soon followed, resolving the screen-tearing/V-Sync input lag dilemma, though again it took time to catch on to where it is now: nigh mandatory for a higher-end gaming monitor.

For gaming displays, HDR was substantively different from adding graphical details or allowing smoother gameplay and playback, because it brought a new dimension of ‘more possible colors’ and ‘brighter whites and darker blacks’ to gaming. Because HDR capability required support from the entire graphical chain, as well as a high-quality HDR monitor and HDR content to take full advantage, it was harder to showcase. Added to the other aspects of high-end gaming graphics and pending the further development of VR, this was the future on the horizon for GPUs.

But today NVIDIA is switching gears, going to the fundamental way computer graphics are modelled in games. In the more realistic rendering processes, light is emulated as rays emitted from their respective sources, but computing even a subset of those rays and their interactions (reflection, refraction, etc.) in a bounded space is so intensive that real time rendering was impossible. To get the performance needed to render in real time, rasterization instead boils 3D objects down to 2D representations to simplify the computations, significantly faking the behavior of light.
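As a rough illustration of the difference between the two approaches, here is a minimal Python sketch. It is not how any GPU or game engine implements either technique; the function names `ray_sphere_hit` and `project_point` are made up for this example. The first function does the per-ray geometric work that ray tracing repeats for every ray and every bounce, while the second shows the far cheaper step rasterization relies on, flattening a 3D point onto a 2D image plane.

```python
import math

def ray_sphere_hit(origin, direction, center, radius):
    """Distance along a unit-length ray to the nearest sphere hit, or None.

    Solves |origin + t*direction - center|^2 = radius^2 for t.
    Assumes `direction` is already normalized.
    """
    oc = tuple(o - c for o, c in zip(origin, center))
    b = 2.0 * sum(d * x for d, x in zip(direction, oc))
    c = sum(x * x for x in oc) - radius * radius
    disc = b * b - 4.0 * c
    if disc < 0.0:
        return None  # the ray misses the sphere entirely
    t = (-b - math.sqrt(disc)) / 2.0
    return t if t > 0.0 else None  # ignore hits behind the ray origin

def project_point(point, focal_length=1.0):
    """Perspective-project a camera-space 3D point onto a 2D image plane."""
    x, y, z = point
    return (focal_length * x / z, focal_length * y / z)

# One ray fired straight down the z-axis at a unit sphere 5 units away.
hit = ray_sphere_hit((0.0, 0.0, 0.0), (0.0, 0.0, 1.0), (0.0, 0.0, 5.0), 1.0)

# The rasterization-style shortcut: a 3D point becomes a 2D coordinate.
screen_xy = project_point((2.0, 4.0, 2.0))
```

A ray tracer has to run an intersection test like the first function against scene geometry for each ray it follows, which is where the cost described above comes from.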

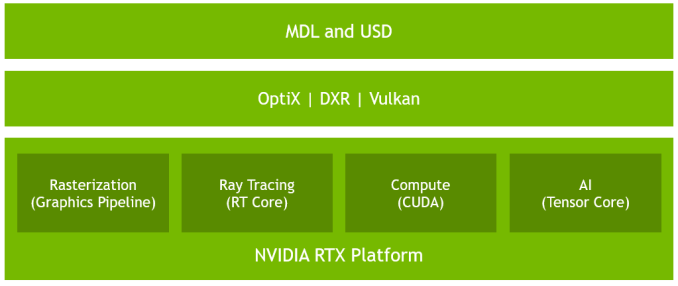

It’s on real time ray tracing that NVIDIA is staking its claim with GeForce RTX and Turing’s RT Cores. Covered in more depth in our architecture article, NVIDIA’s real time ray tracing implementation takes all the shortcuts it can get, incorporating select real time ray tracing effects with significant denoising but keeping rasterization for everything else. Unfortunately, this hybrid rendering isn’t orthogonal to the previous concepts. Now, the ultimate experience would be hybrid rendered 4K with HDR support at high, steady, and variable framerates, even though GPUs didn’t have enough performance to reach that point under traditional rasterization alone.

There’s still a performance cost incurred with real time ray tracing effects, except right now only NVIDIA and developers have a clear idea of what it is. What we can say is that utilizing real time ray tracing effects in games may require sacrificing some or all of high resolution, ultra high framerates, and HDR. HDR is limited by game support more than anything else. But the first two arguably have minimum performance standards when it comes to modern high-end gaming on PC: anything under 1080p is completely unpalatable, and anything under 30fps, or more realistically 45 to 60fps, hurts playability. Variable refresh rate can mitigate the latter and framedrops are temporary, but low resolution is forever.
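To put the gap between the aspirational target and the practical floor in perspective, the arithmetic can be sketched in a few lines of Python. This assumes raw pixels drawn per second as a crude proxy for rendering load, which ignores shading complexity, HDR, and any ray tracing cost; the helper name `pixels_per_second` is made up for this example.

```python
def pixels_per_second(width, height, fps):
    """Raw pixels drawn per second at a given resolution and framerate."""
    return width * height * fps

# Aspirational target from the G-Sync HDR monitors: 4K at 144 Hz.
target = pixels_per_second(3840, 2160, 144)

# Practical floor discussed above: 1080p at 60fps.
floor = pixels_per_second(1920, 1080, 60)

ratio = target / floor
print(f"4K @ 144 Hz pushes about {ratio:.1f}x the pixels of 1080p @ 60fps")
```

By this rough measure the 4K/144 Hz target is about 9.6 times the pixel throughput of 1080p at 60fps, which gives a sense of how much headroom might be traded away when ray tracing effects are enabled.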

Ultimately, the real time ray tracing support needs to be implemented by developers via a supporting API like DXR – and many have been working hard on doing so – but currently there is no public timeline of application support for real time ray tracing, Tensor Core accelerated AI features, and Turing advanced shading. The list of games with support for Turing features - collectively called the RTX platform - will be available and updated on NVIDIA's site.

337 Comments


imaheadcase - Wednesday, September 19, 2018

Because bluray players played movies from the start, delivered what they promised from the start even if cost a lot? Duh.

PopinFRESH007 - Thursday, September 20, 2018

They played DVDs from the start. Your statement is false

imaheadcase - Thursday, September 20, 2018

Umm nope its true.

Spunjji - Friday, September 21, 2018

Yeah, there was media available at launch. Also Blu-Ray provided a noticeable jump in both quality AND resolution over DVD. RTX provides maybe the first and definitely not the second.

V900 - Wednesday, September 19, 2018

And it’s clear that you didn’t read the article, or skimmed it at best, if you’re claiming that “the two technologies have not even seen the real light of day”.

The tools are out there, developers are working with them, and not only are there many games on the way that support them, there are games out now that use RTX.

Let me quote from the review:

“not only was the feat achieved but implemented, and not with proofs-of-concept but with full-fledged AA and AAA games. Today is a milestone from a purely academic view of computer graphics.”

tamalero - Wednesday, September 19, 2018

Development means nothing unless they are released. As plans get cancelled, budgets gets cut and technology is replaced or converted/merged into a different standard.

imaheadcase - Wednesday, September 19, 2018

You just proved yourself wrong with own quote. lol

Guess what? Python language is out there, lets all develop games from it! All the tools are available! Its so easy! /sarcasm

Ranger1065 - Thursday, September 20, 2018

V900 shillage stench.

PopinFRESH007 - Wednesday, September 19, 2018

Just like those HD-DVD adopters, Laser Disc adopters, BetaMax adopters. V900 is pointing out that early adopters accept a level of risk in adopting new technology to enjoy cutting-edge stuff. This is no different that Bluray or DVDs when they came out. People who buy RTX cards have "WORKING TECH" and will have few options to use it just like the 2nd wave of Bluray players. The first Bluray player actually never had a movie released for it and it cost $3800.

"The first consumer device arrived in stores on April 10, 2003: the Sony BDZ-S77, a $3,800 (US) BD-RE recorder that was made available only in Japan.[20] But there was no standard for prerecorded video, and no movies were released for this player."

Even 3 years after that when they actually had a standard studios would produce movies for the players that were out cost over $1000 and there was a whopping 7 titles that were available. Similar to RTX being the fastest cards available for current technology, those Bluray players also played DVDs (gasp).

imaheadcase - Wednesday, September 19, 2018

Again, the point is bluray WORKED out of the box even if expensive. This doesn't even have any way to even test the other stuff.. You are literally buying something for a FPS boost over previous gens that is not really a big one at that. It be a different tune if lots of games already had the tech in hand by nvidia, had it in games just not enabled...but its not even available to test is silly.