The Intel 9th Gen Review: Core i9-9900K, Core i7-9700K and Core i5-9600K Tested

by Ian Cutress on October 19, 2018 9:00 AM EST - Posted in

- CPUs

- Intel

- Coffee Lake

- 14++

- Core 9th Gen

- Core-S

- i9-9900K

- i7-9700K

- i5-9600K

CPU Performance: Rendering Tests

Rendering is often a key target for processor workloads, lending itself to a professional environment. It comes in different formats as well, from 3D rendering through rasterization (as in games) to ray tracing, and it tests the ability of the software to manage meshes, textures, collisions, aliasing, and physics (in animations), and to discard unnecessary work. Most renderers offer CPU code paths, while a few use GPUs and select environments use FPGAs or dedicated ASICs. For big studios, however, CPUs are still the hardware of choice.

All of our benchmark results can also be found in our benchmark engine, Bench.

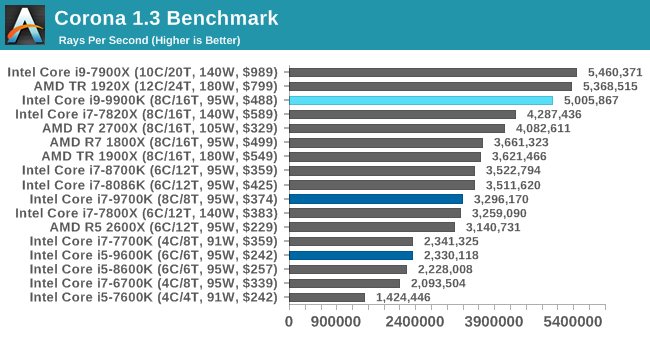

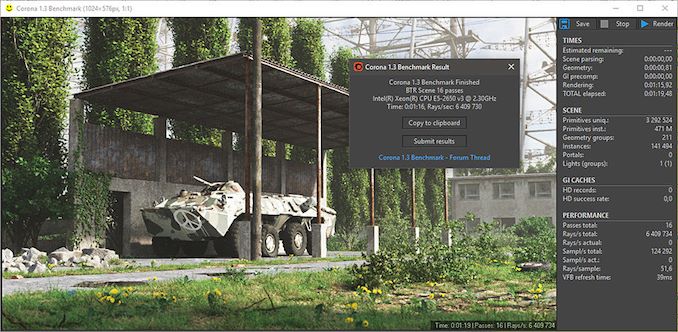

Corona 1.3: Performance Render

An advanced performance-based renderer for software such as 3ds Max and Cinema 4D, Corona offers a benchmark that renders a generated scene as a standard under its 1.3 software version. Normally the GUI implementation of the benchmark shows the scene being built and allows the user to upload the result as a ‘time to complete’.

We got in contact with the developer, who gave us a command line version of the benchmark that outputs results directly. Rather than reporting time, we report the average number of rays per second across six runs, as a rate (work per unit time) scales with performance in a way that is typically easier to read on a graph.
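As a rough illustration of the averaging described above, the sketch below computes a mean rays-per-second figure from several runs. It assumes each run can be reduced to a (rays traced, elapsed seconds) pair; the function name and the input format are hypothetical and are not taken from the Corona command line tool.

```python
from statistics import mean


def average_rays_per_second(runs):
    """Return the mean rays/second over (rays_traced, elapsed_seconds) pairs.

    The (rays, seconds) input format is an assumption made for this sketch;
    a real benchmark tool may report its results differently.
    """
    rates = []
    for rays_traced, elapsed_seconds in runs:
        if elapsed_seconds <= 0:
            raise ValueError("elapsed time must be positive")
        # Convert each run into a rate so that higher always means faster.
        rates.append(rays_traced / elapsed_seconds)
    return mean(rates)
```

Reporting a rate rather than a time means a chip that finishes in half the time shows a bar twice as long, which is the scaling the graph is meant to convey.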

The Corona benchmark website can be found at https://corona-renderer.com/benchmark

Corona is a fully multithreaded test, so the non-HT parts fall a little behind here. The Core i9-9900K blasts past the AMD 8-core parts with a 25% margin, and knocks on the door of the 12-core Threadripper.

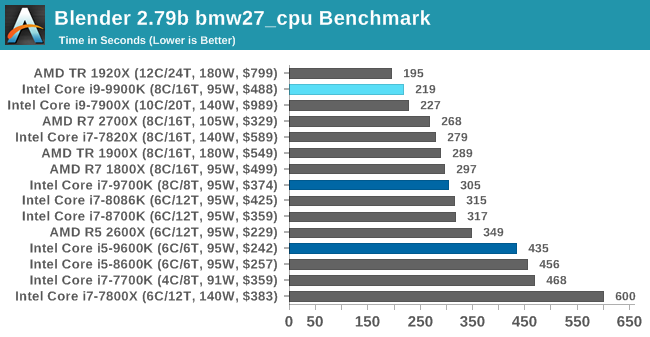

Blender 2.79b: 3D Creation Suite

A high-profile rendering tool, Blender is open-source, allowing for massive amounts of configurability, and is used by a number of high-profile animation studios worldwide. The organization recently released a Blender benchmark package, a couple of weeks after we had narrowed down our Blender test for our new suite; however, their test can take over an hour. For our results, we run one of the sub-tests in that suite through the command line, a standard ‘bmw27’ scene in CPU-only mode, and measure the time to complete the render.
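A command line render of this kind can be timed with a small wrapper. The sketch below is only an illustration, not the script used for this review: it relies on Blender's `-b` (background) and `-f` (render frame) options, assumes the scene file is already configured for CPU rendering, and uses hypothetical helper names and file paths.

```python
import subprocess
import time


def build_blender_command(blender_path, scene_path, frame=1):
    """Build a headless Blender render command for a single frame."""
    # -b runs Blender in the background (no GUI); -f renders the given frame.
    return [blender_path, "-b", scene_path, "-f", str(frame)]


def time_command(command):
    """Run a command to completion and return the wall-clock time in seconds."""
    start = time.perf_counter()
    # check=True raises CalledProcessError if the command exits with an error.
    subprocess.run(command, check=True, stdout=subprocess.DEVNULL)
    return time.perf_counter() - start


# Example usage (hypothetical paths; requires Blender and a scene file):
# elapsed = time_command(build_blender_command("blender", "bmw27_cpu.blend"))
# print(f"Render time: {elapsed:.1f} s")
```

Measuring wall-clock time around the whole process includes scene loading as well as the render itself, so numbers taken this way are only comparable with others taken the same way.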

Blender can be downloaded at https://www.blender.org/download/

Blender has an eclectic mix of requirements, from memory bandwidth to raw performance, but as with Corona, the processors without HT fall a bit behind here. The high frequency of the 9900K pushes it above the 10C Skylake-X part and AMD's 2700X, but leaves it behind the 1920X.

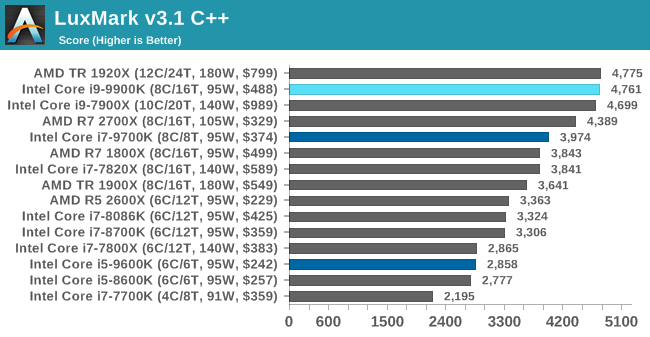

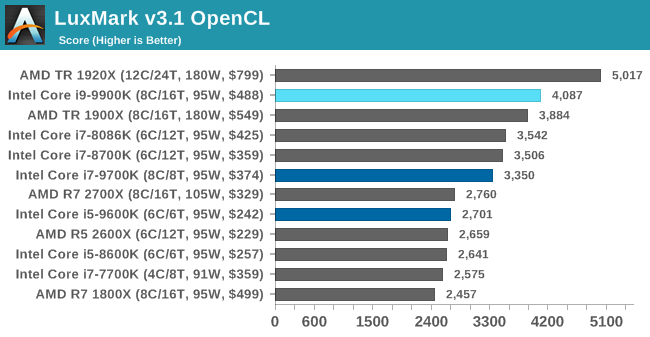

LuxMark v3.1: LuxRender via Different Code Paths

As stated at the top, there are many different ways to process rendering data: CPU, GPU, Accelerator, and others. On top of that, there are many frameworks and APIs in which to program, depending on how the software will be used. LuxMark, a benchmark developed using the LuxRender engine, offers several different scenes and APIs.

Taken from the Linux Version of LuxMark

In our test, we run the simple ‘Ball’ scene on both the C++ and OpenCL code paths, but in CPU mode. This scene starts with a rough render and slowly improves the quality over two minutes, giving a final result in what is essentially an average ‘kilorays per second’.
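The ‘kilorays per second’ figure is simply a throughput averaged over the run. A minimal sketch of that arithmetic follows, assuming the total ray count and the run length are both known; the function name is hypothetical and this is not LuxMark's own scoring code.

```python
def average_kilorays_per_second(total_rays, elapsed_seconds):
    """Return the average throughput in thousands of rays per second."""
    if elapsed_seconds <= 0:
        raise ValueError("elapsed time must be positive")
    # Rays per second first, then scale down to kilorays.
    return total_rays / elapsed_seconds / 1000.0
```

For example, a hypothetical run that traces 240 million rays in the two-minute window would average 2000 kilorays per second.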

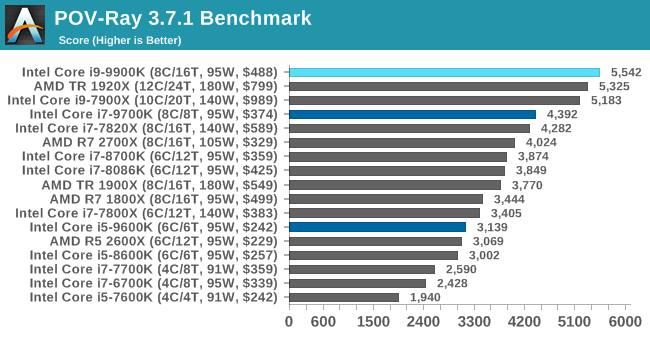

POV-Ray 3.7.1: Ray Tracing

The Persistence of Vision ray tracing engine is another well-known benchmarking tool, which was in a state of relative hibernation until AMD released its Zen processors, at which point both Intel and AMD suddenly began submitting code to the main branch of the open source project. For our test, we use the built-in all-cores benchmark, called from the command line.

POV-Ray can be downloaded from http://www.povray.org/

274 Comments


3dGfx - Friday, October 19, 2018

game developers like to build and test on the same machine

mr_tawan - Saturday, October 20, 2018

> game developers like to build and test on the same machine

Oh I thought they use remote debugging.

12345 - Wednesday, March 27, 2019

Only thing I can think of as a gaming use for those would be to pass through a gpu each to several VMs.

close - Saturday, October 20, 2018

@Ryan, "There’s no way around it, in almost every scenario it was either top or within variance of being the best processor in every test (except Ashes at 4K). Intel has built the world’s best gaming processor (again)."

Am I reading the iGPU page wrong? The occasional 100+% handicap does not seem to be "within variance".

daxpax - Saturday, October 20, 2018

if you noticed 2700x is faster in half benchmarks for games but they didn't include it

nathanddrews - Friday, October 19, 2018

That wasn't a negative critique of the review, just the opposite in fact: from the selection of benchmarks you provided, it is EASY to see that given more GPU power, the new Intel chips will clearly outperform AMD most of the time - generally with average, but specifically minimum frames. From where I'm sitting - 3570K+1080Ti - I think I could save a lot of money by getting a 2600X/2700X OC setup and not miss out on too many fpses.

philehidiot - Friday, October 19, 2018

I think anyone with any sense (and the constraints of a budget / missus) will be stupid to buy this CPU for gaming. The sensible thing to do is to buy the AMD chip that provides 99% of the gaming performance for half the price (even better value when you factor in the mobo) and then to plough that money into a better GPU, more RAM and / or a better SSD. The savings from the CPU alone will allow you to invest a useful amount more into ALL of those areas. There are people who do need a chip like this but they are not gamers. Intel are pushing hard with both the limitations of their tech (see: stupid temperatures) and their marketing BS (see: outright lies) because they know they're currently being held by the short and curlies. My 4 year old i5 may well score within 90% of these gaming benchmarks because the limitation in gaming these days is the GPU. Sorry, Intel, wrong market to aim at.

imaheadcase - Saturday, October 20, 2018

I like how you said limitations in tech and point to temps, like any gamer cares about that. Every game wants raw performance, and the fact remains intel systems are still easier to go about it. The reason is simple, most gamers will upgrade from another intel system and use lots of parts from it that work with current generation stuff.

It's like the whole Gsync vs non gsync. It's a stupid argument, it's not a tax on gsync when you are buying the best monitor anyways.

philehidiot - Saturday, October 20, 2018

Those limitations affect overclocking and therefore available performance. Which is hardly different to much cheaper chips. You're right about upgrading though.

emn13 - Saturday, October 20, 2018

The AVX 512 numbers look suspicious. Both common sense and other examples online suggest that AVX512 should improve performance by much less than a factor 2. Additionally, AVX-512 causes varying amounts of frequency throttling; so you're not going to get the full factor 2.

This suggests to me that your baseline is somehow misleading. Are you comparing AVX512 to ancient SSE? To no vectorization at all? Something's not right there.