AMD Rome Second Generation EPYC Review: 2x 64-core Benchmarked

by Johan De Gelas on August 7, 2019 7:00 PM EST

Better Core in Zen 2

In case you missed it, Ian's microarchitecture analysis article explains in great detail why AMD claims that its new Zen 2 is a significantly better architecture than Zen 1:

- a different second-stage branch predictor, known as a TAGE predictor

- doubling of the micro-op cache

- doubling of the L3 cache

- increase in integer resources

- increase in load/store resources

- support for two AVX-256 instructions per cycle (instead of having to combine two 128-bit units).

All of these on-paper improvements show that AMD is attacking its key markets in both consumer and enterprise performance. With the extra compute and promised efficiency, we can surmise that AMD has the ambition to take the high-performance market back too. Unlike the Xeon, the 2nd Gen EPYC does not specify lower clocks when running AVX2. Instead it runs on a power-aware scheduler that supplies as much frequency as possible within the power constraints of the platform.

Users might question, especially with Intel so embedded in high performance and machine learning, why AMD hasn't gone with an AVX-512 design. As a snap back to the incumbent market leader, AMD has stated that not all 'routines can be parallelized to that degree', and has sent a very clear signal that 'it is not a good use of our silicon budget'. I do believe that we may require pistols at dawn. Nonetheless, it will be interesting to see how each company approaches vector parallelisation as new generations of hardware come out. But as it stands, AMD is pumping up its FP performance without going full-on AVX-512.

AMD claims an overall 15% IPC increase for Zen 2, and we saw this borne out in our analysis of Zen 2 in the consumer processor line, which was released last month. In that analysis, Andrei checked and found that it is indeed 15-17% faster. Along with the performance improvements, there have also been security hardening updates, improved virtualization support, and new but proprietary instructions for cache and memory bandwidth Quality of Service (QoS). (The QoS features seem very similar to what Intel introduced in Broadwell/Xeon E5 version 4 and Skylake; AMD is catching up in that area.)

Rome Layout: Simple Makes It a Lot Easier

When we analyzed AMD's first generation of EPYC, one of the big disadvantages was the complexity. AMD had built its 32-core Naples processors by combining four 8-core silicon dies and attaching each one to two memory channels, resulting in a non-uniform memory architecture (NUMA). Due to this 'quad NUMA' layout, a number of applications saw quite a few NUMA balancing issues. This happened in almost every OS, and in some cases we saw reports that system administrators and others had to do quite a bit of optimization work to get the best performance out of the EPYC 7001 series.

The new 2nd Gen EPYC, Rome, has solved this. The CPU design implements a central I/O hub through which all off-chip communication occurs. The full design uses eight core chiplets, called Core Complex Dies (CCDs), with one central die for I/O, called the I/O Die (IOD). All of the CCDs communicate with this central I/O hub through dedicated high-speed Infinity Fabric (IF) links, and through it the cores can communicate with the DRAM and PCIe lanes contained within, or with other cores.

The CCDs consist of two four-core Core CompleXes (1 CCD = 2 CCX). Each CCX consists of four cores and 16 MB of L3 cache, and these are at the heart of Rome. The top 64-core Rome processors have 16 CCX overall, and those CCX can only communicate with each other over the central I/O die. There is no direct CCD-to-CCD communication.
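The chiplet arithmetic above can be checked with a short sketch. The counts (8 CCDs, 2 CCX per CCD, 4 cores and 16 MB of L3 per CCX) come from the text; the flat core numbering used by `core_location` is a hypothetical scheme for illustration only, and a real OS may enumerate cores differently.

```python
# Topology constants taken from the article text.
CCDS_PER_SOCKET = 8   # core chiplets on a full 64-core Rome part
CCX_PER_CCD = 2       # 1 CCD = 2 CCX
CORES_PER_CCX = 4
L3_MB_PER_CCX = 16


def rome_totals(ccds=CCDS_PER_SOCKET):
    """Return CCX count, core count and total L3 (MB) for one socket."""
    ccx = ccds * CCX_PER_CCD
    return {
        "ccx": ccx,
        "cores": ccx * CORES_PER_CCX,
        "l3_mb": ccx * L3_MB_PER_CCX,
    }


def core_location(core_id):
    """Map a flat core index to (ccd, ccx_in_ccd, core_in_ccx).

    Assumption: cores are numbered linearly, CCX by CCX. This is a
    hypothetical numbering, not how any particular OS enumerates them.
    """
    ccx_index, core_in_ccx = divmod(core_id, CORES_PER_CCX)
    ccd, ccx_in_ccd = divmod(ccx_index, CCX_PER_CCD)
    return ccd, ccx_in_ccd, core_in_ccx


def share_l3(core_a, core_b):
    """Two cores share an L3 slice only if they sit in the same CCX."""
    return core_location(core_a)[:2] == core_location(core_b)[:2]


if __name__ == "__main__":
    print(rome_totals())
    print(core_location(63))
    print(share_l3(0, 3), share_l3(3, 4))
```

Under this numbering, cores 0 to 3 share one 16 MB L3 slice, while cores 3 and 4 sit in different CCX and would have to go through the I/O die to reach each other.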

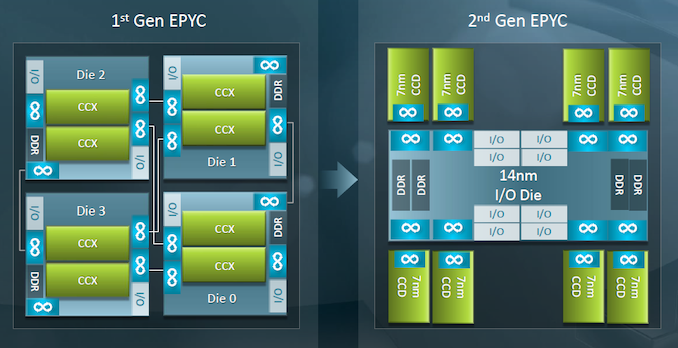

This is what the diagram above shows. On the left we have Naples, first Gen EPYC, which uses four Zeppelin dies, each connected to the others with an IF link. On the right is Rome, with eight CCDs in green around the outside, and a centralized IO die in the middle with the DDR and PCIe interfaces.

As Ian reported, the CCDs are made at TSMC using its latest 7 nm process technology, while the IO die is built on GlobalFoundries' 14nm process. Since I/O circuitry, especially when compared to caching/processing and logic circuitry, is notoriously hard to scale down to smaller process nodes, AMD is being clever here: using a very mature process technology for the IO die helps improve time to market, and the approach definitely has advantages.

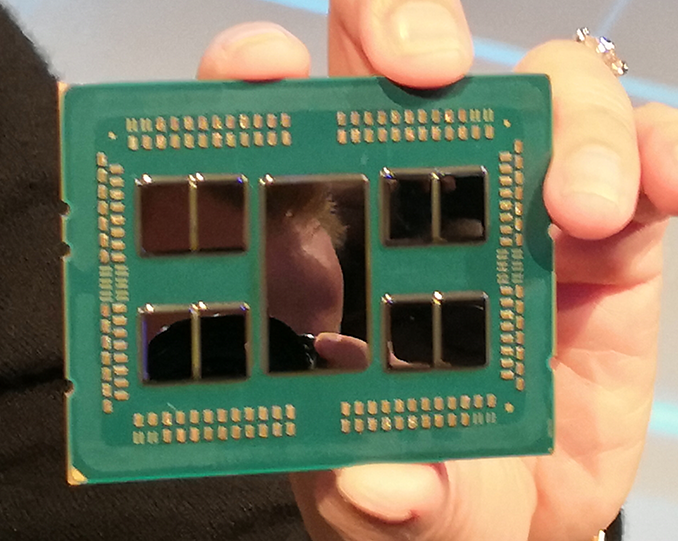

This topology is clearly visible when you take off the hood.

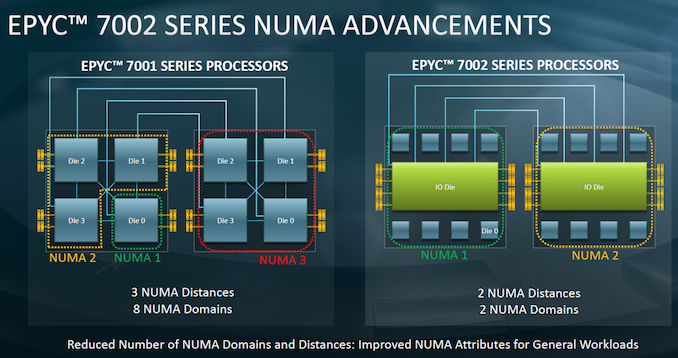

The main advantage is that the 2nd Gen 'EPYC 7002' family is much easier to understand and optimize for, especially from a software point of view, compared to Naples. Ultimately each processor only has one memory latency environment, as each core has the same latency to all eight memory channels simultaneously. This compares to the first generation Naples, which had four NUMA regions per CPU due to direct attached memory.

As seen in the image below, this means that in a dual socket setup, a Rome processor will act like a traditional NUMA environment that most software engineers are familiar with.

Ultimately the only other way to do this is with a large monolithic die, which for smaller process nodes is becoming less palatable when it comes to yields and pricing. In that respect, AMD has a significant advantage in being able to develop small 7nm silicon with high yields and also provide a substantial advantage when it comes to binning for frequency.

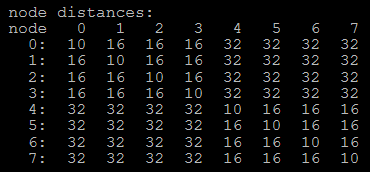

How a system sees the new NUMA environment is quite interesting. For the Naples EPYC 7001 CPUs, this was rather complicated in a dual socket setup:

Here each number shows the 'weighting' given to the delay to access each of the other NUMA domains. Within the same domain, the weighting is light at only 10, but then a NUMA domain on the same chip was given a 16. Jumping off the chip bumped this up to 32.
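As a minimal sketch, the weights described above can be laid out as a full distance matrix for a dual-socket Naples system. The values 10, 16 and 32 and the four NUMA domains per socket come from the text; the node numbering (nodes 0-3 on the first socket, 4-7 on the second) is an assumption made for illustration.

```python
# Weights quoted in the article for dual-socket Naples (EPYC 7001).
LOCAL = 10          # same NUMA domain
SAME_SOCKET = 16    # another domain on the same chip
REMOTE_SOCKET = 32  # a domain on the other socket

NODES_PER_SOCKET = 4  # four dies per Naples package ('quad NUMA')
SOCKETS = 2


def numa_distance(node_a, node_b):
    """Return the weighting between two NUMA nodes.

    Assumption: nodes are numbered socket by socket (0-3, then 4-7).
    """
    if node_a == node_b:
        return LOCAL
    if node_a // NODES_PER_SOCKET == node_b // NODES_PER_SOCKET:
        return SAME_SOCKET
    return REMOTE_SOCKET


def distance_matrix():
    """Build the full node-to-node weighting table as a list of rows."""
    n = NODES_PER_SOCKET * SOCKETS
    return [[numa_distance(a, b) for b in range(n)] for a in range(n)]


if __name__ == "__main__":
    for row in distance_matrix():
        print(" ".join(f"{d:2d}" for d in row))
```

Three distinct weights across eight nodes is what made placement on Naples awkward: a scheduler or administrator had to care about which of the eight domains a thread and its memory landed in.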

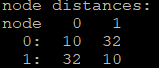

This changed significantly on Rome EPYC 7002:

Although there are situations where the EPYC 7001 CPUs communicated faster, the fact that the topology is much simpler from the software point of view is worth a lot. It makes getting good performance out of the chip much easier for everyone who has to use it, which will save a lot of money in the enterprise and also help accelerate adoption.

180 Comments


close - Thursday, August 8, 2019 - link

VMware licenses per socket. I'm not sure what kind of niche market one would have to be in (maybe HPC on Windows with the HPC Pack?) to run Win server bare metal on this thing. So I'm pretty sure the average cores/VM for Windows servers is relatively low and no reason for concern.

schujj07 - Thursday, August 8, 2019 - link

@deltaFx2 Most people purchase more cores than they currently need so that they can grow. In the long run it is cheaper to purchase a higher SKU right now than purchase a second host a year down the road.

@close There are companies that are Windows only so they would install Hyper-V onto this host to use as their hypervisor. However, even under VMware if you want to license Windows as a VM you have to pay the per-core licensing for every CPU core on each VM. I looked into getting volume licensing for Server 2016 for the company I work for. We have 2 hosts with dual 24 core Epyc 7401's and we would need to get 16 dual core license packs for each instance of Server 2016. It ended up that we couldn't afford to get Server 2016 because it would have cost us $5k per instance of Server 2016.

DigitalFreak - Thursday, August 8, 2019 - link

@schujj07 Just buy a Windows Server Datacenter license for each host and you don't have to worry about licensing each VM.

schujj07 - Thursday, August 8, 2019 - link

AFAIK it doesn't work that way when you are running VMware. With VMware you will still have to license each one.

wolrah - Thursday, August 8, 2019 - link

@schujj07 nope. Windows Server licensing is the same no matter which hypervisor you're using. Datacenter licenses allow unlimited VMs on any licensed host.

diehardmacfan - Thursday, August 8, 2019 - link

This is correct. You do need to buy the licenses to match the core count of the hypervisor, however.

Dug - Friday, August 9, 2019 - link

You still have to pay for cores on datacenter. Each datacenter license covers 2 cores with a minimum purchase of 8. So over 8 cores and you are buying more licenses. 64 cores is about $25k

MDD1963 - Friday, August 9, 2019 - link

Windows license (Standard or Datacenter) covers 2 *sockets* for, a total of 16 cores....; if you have more than 2 sockets, you need more licenses...; if you have 2 sockets, filled with 8 core CPUs, you are good with one standard license... If you have 20 total cores, you need a standard license, and a pair of '2 core' add ons... If you have 32 cores, you need 2 full standard licenses....

MDD1963 - Friday, August 9, 2019 - link

Datacenter is still licensed for 16 cores, with little 2 pack increments available, or, in the case of a 64 core CPU, effectively 4 Datacenter licenses would be required...($6k per 16 cores, or, roughly $24k)

deltaFx2 - Friday, August 9, 2019 - link

@schujj07: Of course I get that. The OP @Pancakes implied that Rome was going to hurt the wallets of buyers using windows server. The implication being this would not happen if they bought Intel. I was questioning those assumptions. How can Rome cost more money for windows licenses unless rome needs more cores to get the same job done or enterprises overprovision Rome (in terms of total cores) vs. Intel. That would make sense if the per-thread performance is worse but it's not.