The Intel Lakefield Deep Dive: Everything To Know About the First x86 Hybrid CPU

by Dr. Ian Cutress on July 2, 2020 9:00 AM EST

Lakefield: Top Die to Bottom Die

At the top is the compute die, featuring the compute cores, the graphics, and the display engines for the monitors.

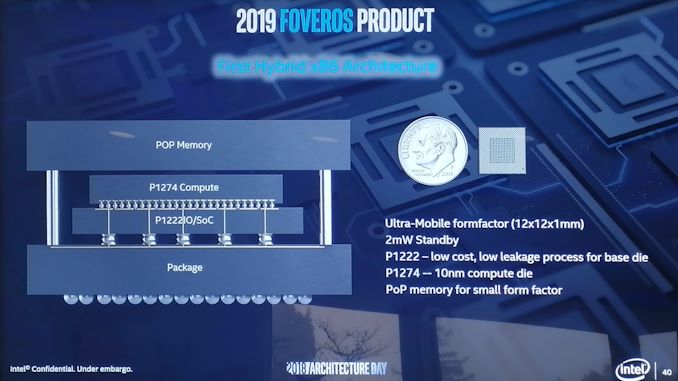

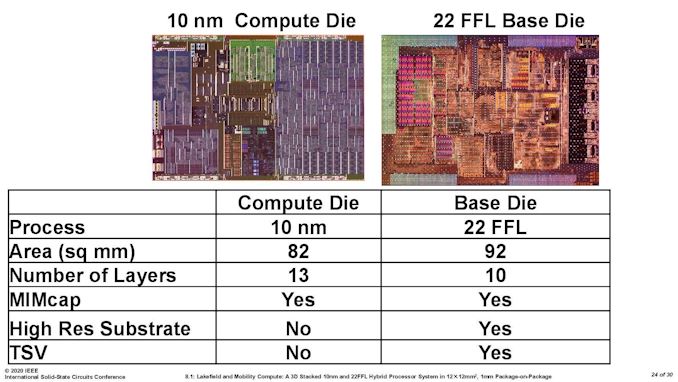

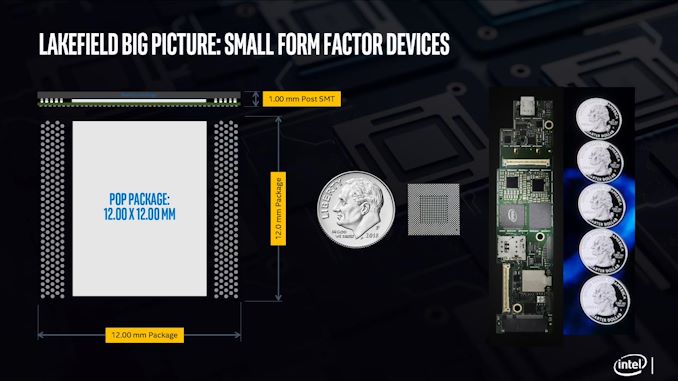

It might be easier to imagine it as the image above. The whole design fits into physical dimensions of 12 mm by 12 mm, or 0.47 inch by 0.47 inch, which means the internal silicon dies are actually smaller than this. Intel has previously published that the base peripheral interposer silicon is 92 mm², and the top compute die is 82 mm².
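As a rough check on those figures, the short sketch below converts the package edge to inches and compares the two quoted die areas against the 12 mm by 12 mm footprint. The variable names are our own, and the die areas are the rounded values quoted above, so treat the percentages as approximate.

```python
MM_PER_INCH = 25.4

package_edge_mm = 12.0
package_area_mm2 = package_edge_mm ** 2  # 144 mm^2 package footprint

# Rounded die areas as quoted by Intel
die_areas_mm2 = {"base": 92.0, "compute": 82.0}

edge_in = package_edge_mm / MM_PER_INCH
print(f"package edge: {edge_in:.2f} in")

# Share of the package footprint covered by each die
footprint_share = {
    name: area / package_area_mm2 for name, area in die_areas_mm2.items()
}
for name, share in footprint_share.items():
    print(f"{name} die: {share:.0%} of the package footprint")
```

Both dies sit comfortably inside the package outline, which is consistent with the dies being stacked rather than placed side by side.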

Compute Die

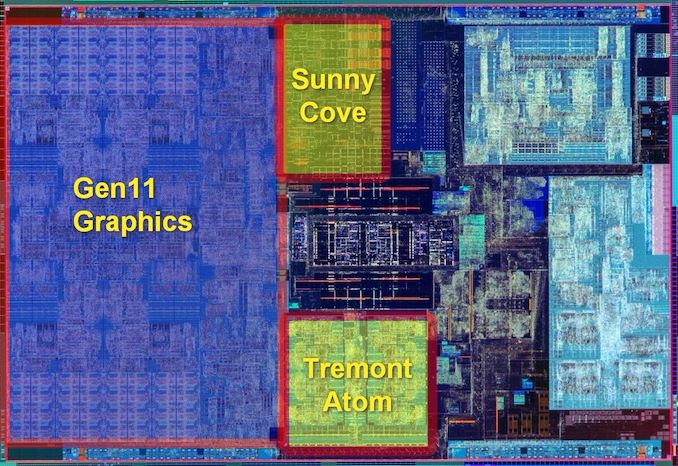

Where most of the magic happens is on the top compute die. This is the piece of silicon built on Intel’s most advanced 10+ nm process node and contains the big core, the small cores, the graphics, the display engines, the image processing unit, and all the point-to-point connectivity. The best image of this die looks something like this:

The big block on the left is the Gen 11 graphics, and is about 37% of the top compute die. This is the same graphics core configuration as what we’ve seen on Intel’s Ice Lake mobile CPUs, which are also built on the same 10+ process.

At the top is the single Sunny Cove core, also present in Ice Lake. Intel has stated that it has physically removed the AVX-512 part of the silicon; however, we can still see it in the die shot. This is despite the fact that it can’t be used in this design due to one of the main limitations of a hybrid CPU. We’ll cover that more in a later topic.

At the bottom in the middle are the four Tremont Atom cores, which are set to do most of the heavy lifting (that isn’t latency sensitive) in this processor. It is worth noting the relative sizes of the single Sunny Cove core compared to the four Tremont Atom cores, whereby it seems we could fit around three Tremont cores in the same size as a Sunny Cove.

On this top compute die, the full contents are as follows:

- 1 x Sunny Cove core, with 512 KiB L2 cache

- 4 x Tremont Atom cores, with a combined 1536 KiB of L2 cache between them

- 4 MB of last level cache

- The uncore and ring interconnect

- 64 EUs of Gen11 Graphics

- Gen11 Display engines, 2 x DP 1.4, 2 x DPHY 1.2

- Gen11 Media Core, supporting 4K60 / 8K30

- Intel’s Image Processing Unit (IPU) v5.5, up to 6x 16MP cameras

- JTAG, Debug, SVID, P-Unit etc

- LPDDR4X-4267 Memory Controller
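Adding up the cache entries in the list above gives a quick sense of the total on-die cache beyond L1. This is a minimal sketch: the names are ours, and treating the "4 MB" last level cache as 4 MiB is an assumption made only to keep the units consistent.

```python
KIB_PER_MIB = 1024

sunny_cove_l2_kib = 512
tremont_l2_kib = 1536       # combined across the four Tremont cores
last_level_cache_mib = 4    # listed as "4 MB"; treated as MiB here (assumption)

total_l2_mib = (sunny_cove_l2_kib + tremont_l2_kib) / KIB_PER_MIB
total_cache_mib = total_l2_mib + last_level_cache_mib

print(f"L2 total: {total_l2_mib:.0f} MiB")
print(f"L2 + last level cache: {total_cache_mib:.0f} MiB (L1 caches excluded)")
```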

Compared to Ice Lake mobile silicon, which measures in at 122.52 mm², this top compute die is officially given as 82.x mm². It’s worth noting that the Ice Lake die also contains what Lakefield places on the base die. This top die has been quoted as having 4.05 billion transistors and 13 metal layers. For those playing a transistor density game at home, this top die averages 49.4 million transistors per square millimeter.
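To put the area figures side by side, the sketch below compares the compute die with the Ice Lake die and estimates the graphics area from the roughly 37% share mentioned earlier. Because the compute die is only quoted as "82.x", we assume 82.0 mm² here, and the 37% figure is itself an eyeball estimate from the die shot, so the results are approximate.

```python
ice_lake_die_mm2 = 122.52
compute_die_mm2 = 82.0   # quoted as "82.x"; fractional part unknown (assumption)
gpu_share = 0.37         # approximate share of the compute die used by Gen11 graphics

relative_size = compute_die_mm2 / ice_lake_die_mm2
gpu_area_mm2 = gpu_share * compute_die_mm2

print(f"compute die is {relative_size:.0%} the size of the Ice Lake die")
print(f"estimated Gen11 graphics area: {gpu_area_mm2:.1f} mm^2")
```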

Base Die / Interposer Die

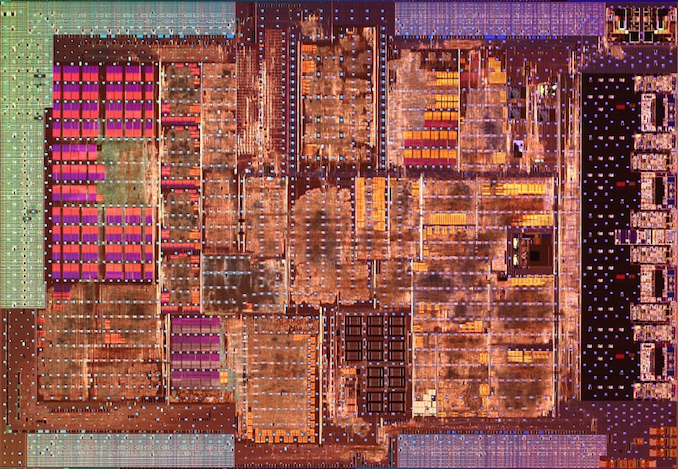

The base interposer die is, by contrast, a lot simpler. It is built on Intel’s 22FFL process, which despite the name is actually an optimized power version of Intel’s 14nm process with some relaxed rules to allow for ultra-efficient IO development. The benefit of 22FFL being a ‘relaxed’ variant of Intel’s own 14nm process also means it is simpler to make, and really cheap by comparison to the 10+ design of the compute die. Intel could make these 22FFL silicon parts all year and not break a sweat. The only complex bit comes in the die-to-die connectivity.

The small white dots on the diagram are meant to be the positions of the die-to-die bonding patches. Intel has quoted this base silicon die as having 10 metal layers, and measuring 92.x mm² for only 0.65 billion transistors. Again, for those playing at home, this equates to an average density of 7.07 million transistors per square millimeter.
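The density figures for both dies can be reproduced from the quoted transistor counts and areas. The sketch below assumes the areas are exactly 82.0 mm² and 92.0 mm² (Intel only gives "82.x" and "92.x"), and the dictionary keys are our own labels.

```python
# Quoted transistor counts and die areas; fractional areas are unknown (assumption)
dies = {
    "compute": {"transistors": 4.05e9, "area_mm2": 82.0},
    "base": {"transistors": 0.65e9, "area_mm2": 92.0},
}

# Average density in millions of transistors per square millimeter
density_mtr_per_mm2 = {
    name: d["transistors"] / d["area_mm2"] / 1e6 for name, d in dies.items()
}
density_ratio = density_mtr_per_mm2["compute"] / density_mtr_per_mm2["base"]

for name, value in density_mtr_per_mm2.items():
    print(f"{name} die: {value:.2f} MTr/mm^2")
print(f"compute die is about {density_ratio:.1f}x denser than the base die")
```

These are whole-die averages, so they blend logic, cache, analog, and IO regions and say little about the peak density either process can reach.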

On this bottom die, along with all the management for the die-to-die interconnects, we get the following connectivity which is all standards based:

- Audio Codec

- USB 2.0, USB 3.2 Gen x

- UFS 3.x

- PCIe Gen 3.0

- Sensor Hub for always-on support

- I3C, SDIO, CSE, SPI/I2C

One element key to the base interposer and IO silicon is that it also has to carry power up to the compute die. With the compute die being on top to aid in the cooling configuration, it still has to get power from somewhere. Because the compute die is the more power hungry part of the design, it needs dedicated power connectivity through the package. Whereas all the data signals can move around from the compute die to the peripheral die, the power needs to go straight through. As a result, there are a number of power oriented ‘through silicon vias’ (TSVs) that have to be built into the design of the peripheral part of the processor.

Power and High Speed IO

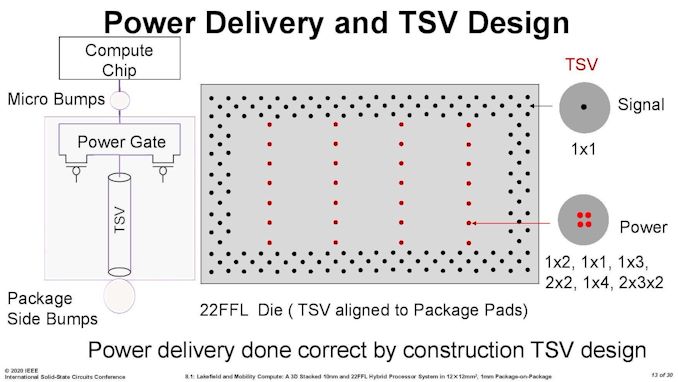

Here’s a more complex image from a presentation earlier this year. It shows that Intel is using two types of connection from the bottom die to the top die: signal (data) connections and power connections. Intel didn’t tell us exactly how many connections are made between the two die, stating it was proprietary information, but I’m sure we will find out in due course when someone decides to put the chip in some acid and find out properly.

However, some napkin math shows 28 power TSV points, which could be in any of the configurations to the right – those combinations have a geometric mean of 3.24 pads per point listed, so with 28 points on the diagram, we’re looking at ~90 power TSVs to carry the power through the package.
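The napkin math above amounts to a single multiplication, sketched below. The second half only illustrates how a geometric mean is formed; the pad counts in `example_pad_counts` are hypothetical placeholders, not the configurations from Intel's slide, and they are not expected to reproduce the 3.24 figure.

```python
from statistics import geometric_mean

power_tsv_points = 28
mean_pads_per_point = 3.24  # geometric mean quoted in the text

estimated_power_tsvs = power_tsv_points * mean_pads_per_point
print(f"estimated power TSVs: {estimated_power_tsvs:.1f}")

# HYPOTHETICAL pad counts, used only to show how a geometric mean is computed.
example_pad_counts = [2, 3, 4, 6]
example_mean = geometric_mean(example_pad_counts)
print(f"geometric mean of the example counts: {example_mean:.2f}")
```

A geometric mean weights each configuration multiplicatively, so it is less skewed by one unusually large pad arrangement than an arithmetic mean would be.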

Normally passing power through a horizontal or vertical plane has the potential to cause disturbance to any signalling nearby – Intel did mention that their TSV power implementations are actually very forgiving in this instance, and the engineers ‘easily’ built sufficient space for each TSV used. The 22FFL process helped with this, as did the very low transistor density required, which left plenty of room.

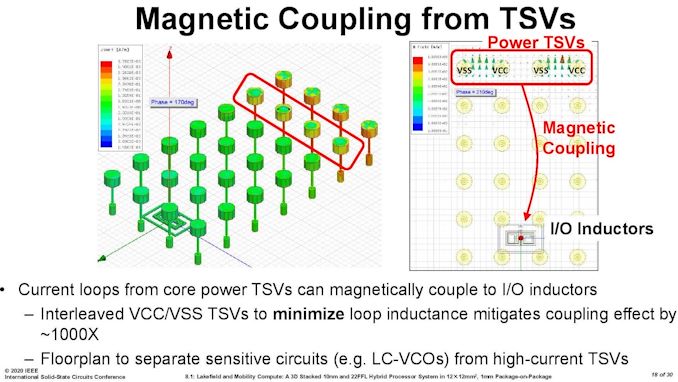

From this slide we can see that the simulations on TSVs in the base die required different types of TSV to be interleaved in order to minimize different electrical effects. High current TSVs are very clearly given the widest berth in the design.

When it comes to the IO of the bottom die, users might see that PCIe 3.0 designation and baulk – here would be a prime opportunity for Intel to announce a PCIe 4.0 product, especially with a separate focused IO silicon chiplet design. However, Lakefield isn’t a processor that is going to be paired with a discrete GPU, and these PCIe lanes are meant for additional peripherals, such as a smartphone modem.

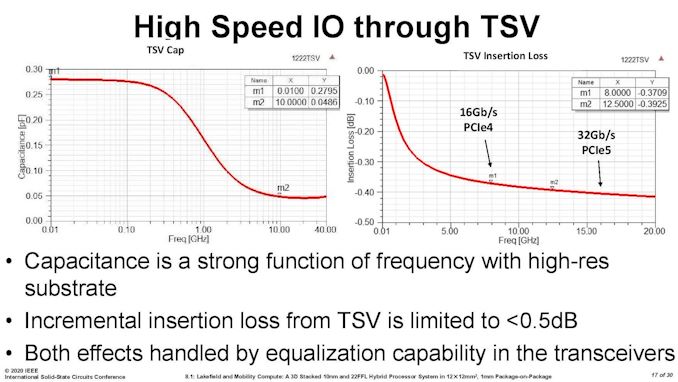

Not to be discouraged, Intel has presented that it has looked into high-speed IO through its die-to-die interconnect.

In this case, Intel is battling capacitance as newer PCIe specifications raise the frequency requirements. In this instance the signal insertion loss difference between PCIe 4.0 and PCIe 5.0 is fairly low, and well within a 0.5 dB variance. This means that this sort of connectivity might see its way into future products.

Memory

Also built into the package is the onboard memory – in this case it is DRAM, not any form of additional cache. The PoP memory on top (PoP stands for Package on Package) comes from a third party, and Intel assembles this at manufacturing before the product is sold to its partners. Intel will offer Lakefield with 8 GB and 4 GB variants, both built on some fast LPDDR4X-4266 memory.

In our conversations with Intel, the company steadfastly refuses to disclose who is producing the memory, and will only confirm it is not Intel. It would appear that the memory for Lakefield is likely a custom part specifically for Intel. We will have to wait until some of our peers take the strong acids to a Lakefield CPU in order to find out exactly who is working with Intel (or Intel could just tell us).

The total height, including DRAM, should be 1 mm.

As mentioned earlier in the article, by stacking chiplets one on top of the other Intel trades a package size problem for a cooling one, especially when putting two computationally active parts of silicon together and then a big hunk of DRAM on top. Next we’ll consider some of the thermal aspects to Lakefield.

221 Comments


ichaya - Sunday, July 5, 2020 - link

The chart shows <10% power for <30% perf, and <20% power for <50% perf. That seems like 2-3x perf/watt difference as well. The A13 has a total of 28MB of cache shared between the CPU+GPU, whereas this seems to have 6MB for the 4+1 CPU cores sans L1 caches.

I'd love to see an Anandtech article on how Apple's large caches help with the code density differences between x86-64/ARM and with lower clock speeds, power consumption.

Wilco1 - Sunday, July 5, 2020 - link

The code density of AArch64 is significantly better than x86_64, so even at same cache sizes Arm has an advantage.

ichaya - Wednesday, July 8, 2020 - link

Source? Everything I've read says x86-64 still has a diminishing but slight advantage in code density. If anything, lower clock speeds are helping Apple by avoiding memory pressure issues at higher clock speeds. I highly doubt AArch64 could perform the same as x86-64 with equal caches at any clock speed. uArch differences could outweigh these differences, but I've seen evidence of this given how large Apple's caches have been.

ichaya - Wednesday, July 8, 2020 - link

* I've seen no evidence of this given how large Apple's caches have been.

Correcting the last sentence in post above.

Wilco1 - Wednesday, July 8, 2020 - link

No, x86 has never had good code density, 32-bit x86 is terrible compared to Thumb-2. x86_64 has worse code density than 32-bit x86, and it gets really bad if you use SIMD instructions.

Try building a large binary on both systems using the same compiler and compare the .text sizes. For example I use all of SPEC2017 built with identical GCC version and options. AArch64 code is generally 10-15% smaller.

Many AArch64 cores already have higher IPC - yes that absolutely means they are faster than x86 cores at the same clock frequency using similar sized caches.

This https://images.anandtech.com/graphs/graph15578/115... shows Neoverse N1 has ~28% higher IPC than EPYC 7571 and ~21% higher IPC than Xeon Platinum 8259 on SPECINT2017. While Naples has 2x8MB LLC on each chiplet, the Xeon has 36MBytes, more than the 32MB in Graviton 2 (both also have 1MB L2 per core).

Recent cores like Cortex-A78 and Cortex-X1 are 30-50% faster than Neoverse N1. Do the math and see where this is going. 2020 is the year when AArch64 servers outperform the fastest x86 servers, 2021 may be the year when AArch64 CPUs outperform the fastest x86 desktops.

ichaya - Saturday, July 11, 2020 - link

If you compare with -march=x86-64 or with a specific uArch like -march=haswell you'll get comparable code sizes to -march=armv8.4-a. But from the runtime code density differences I've seen, x86-64 still seems to have a slight advantage.

From the article you linked the image from (https://www.anandtech.com/show/15578/cloud-clash-a...): "If we were to divide the available cache on a per-thread basis, the Graviton2 leads the set at 1.5MB, ahead of the EPYC’s 1.25MB and the Xeon’s 1.05MB." ARM's system-level cache is a good idea, as is shared L2 in Apple's A* chips. But cache advantages per thread in Graviton and A* seem to signal it's not the uArch making the difference. Similar cores to Graviton's cores with less cache, do a lot worse. Not being able to clock higher than 2.5Ghz also seems to signal that the uArch/interconnects cannot keep up with memory pressure.

To the extent that die sizes of these chips (Graviton 2 is 7nm, Epyc 7571 and Intel Xeon 8259CL are 14nm) are comparable, it's features like AVX2/SMT that seem to have been replaced with cache in the benchmarks in the article. I'll be looking forward to A* chips to see how they might stack up in Laptops and Desktops, but these are the doubts I still have.

ichaya - Saturday, July 11, 2020 - link

Correct link in post above: https://www.anandtech.com/show/15578/cloud-clash-a...

Wilco1 - Saturday, July 11, 2020 - link

Runtime code density? Do you mean accurately counting total bytes fetched from L1I and MOP cache? x86 won't look good because of the inefficiency of byte-aligned instructions, needing 2 extra predecode bits per byte and MOPs being very wide on x86 (64 bits in SandyBridge)... It clearly shows why byte-sized instructions are a bad idea.

The graph I posted is for single-threaded performance, so the amount of cache per-thread is not relevant at all. Arm's IPC is higher and thus it is a better micro architecture than Skylake and EPYC 1. IPC is also ~12% better than EPYC 7742 based on https://www.anandtech.com/show/14694/amd-rome-epyc...

In terms of all-core throughput the fastest EPYC 7742 does only ~30% better than Graviton 2 on INTrate2006. That's pretty awful considering it has 8 times the L3 cache (yes eight times!!!), twice the threads, runs at up to 3.4GHz and uses twice the power...

In terms of die size, EPYC 7742 is ~3 times larger in 7nm, so it's extremely area inefficient compared to Graviton 2. So any suggestion that cache is used to make a weak core look better should surely be directed at EPYC?

Graviton 2 is a very conservative design to save cost, hence the low 2.5GHz frequency. Ampere Altra pushes the limits with 80 Neoverse N1 cores at 3.3GHz base (yes that's base, not turbo!). Next year it will have 128 cores, competing with 128 threads in EPYC 3. Guess how that will turn out?

ichaya - Sunday, July 12, 2020 - link

Code density and decoding instructions are separate things. Here's an older paper on code density of a particular program: http://web.eece.maine.edu/~vweaver/papers/iccd09/l...

Single threaded workloads are obviously going to do better with a shared system-level and in Apple's case, shared L2 caches. Sharing caches is something that Intel is closer to than AMD. You cannot compare INTrate2006 or any single threaded benchmark running on an ARM where all system-level caches are available for one thread with an Epyc 7742 where only 1 CCX's L3 caches are available to one thread. That would be 32MB on Graviton 2 vs 16MB on an AMD EPYC 2 CCX. So, AMD is being 30% faster with 1/2 the cache and clocked 30% higher than Graviton 2.

I will definitely give credit to efficient shared system/L2 cache usage to Graviton 2, A*, and other ARM chips, but comparing power usage when there are 64 cores of AVX2 on chip when there's nothing comparable on another is an irrelevant comparison if there ever was one.

Wilco1 - Sunday, July 12, 2020 - link

The complexity and overhead of instruction decoding is closely related with the ISA. Byte-aligned instructions have a large cost, and since they don't give a code density advantage, it's an even larger cost! Again if you want to study code density, compare all of SPEC or a whole Linux distro. Code density of huge amounts of compiled code is what matters in the real world, not tiny examples that are a few hundred bytes!

Well EPYC 7742 is only 21% faster single threaded while being clocked 36% faster. Sure Graviton 2 has twice the L3 available, but the difference between 16 and 32MBytes is hardly going to be 12%. If every doubling gave 10% then the easiest way to improve performance was to keep doubling caches!

AVX isn't used much, surely not in SPEC, so it contributes little to total power consumption (unless you're trying to say that x86 designers are totally incompetent?). At the end of the day getting good perf/W matters to data centers, not whether a core has AVX or not.