The Samsung 870 QVO (1TB & 4TB) SSD Review: QLC Refreshed

by Billy Tallis on June 30, 2020 11:40 AM EST

Whole-Drive Fill

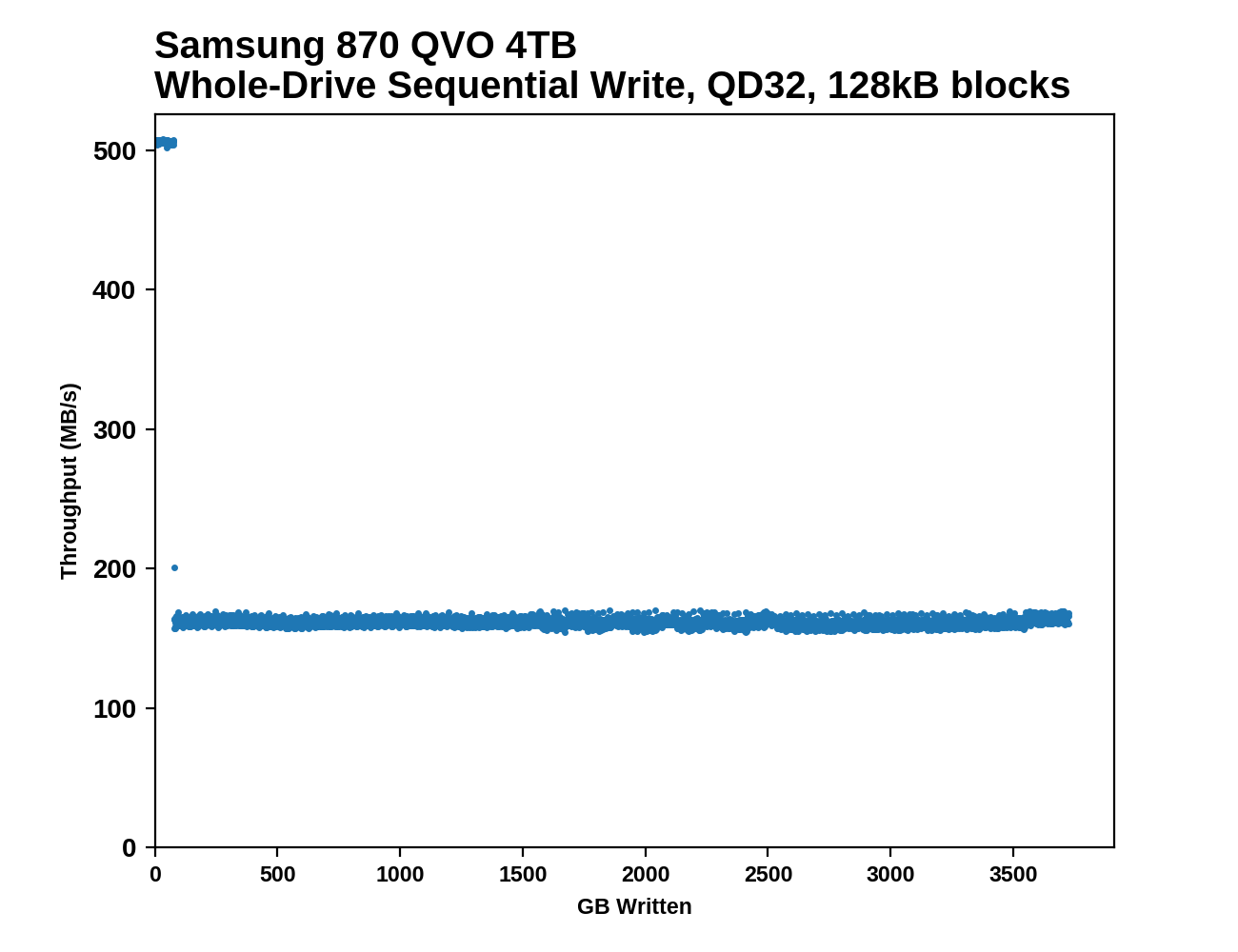

This test starts with a freshly-erased drive and fills it with 128kB sequential writes at queue depth 32, recording the write speed for each 1GB segment. This test is not representative of any ordinary client/consumer usage pattern, but it does allow us to observe transitions in the drive's behavior as it fills up. This can allow us to estimate the size of any SLC write cache, and get a sense for how much performance remains on the rare occasions where real-world usage keeps writing data after filling the cache.
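The cache-size estimate described above can be read off a per-GB throughput log by finding where speed first falls well below its starting, in-cache level. The following is a minimal sketch, not the review's actual tooling. The 50% drop threshold, the five-segment baseline, and the function name `estimate_slc_cache_gb` are all hypothetical choices, and the sample log is synthetic rather than measured.

```python
from typing import List, Optional


def estimate_slc_cache_gb(speeds_mbps: List[float], drop_ratio: float = 0.5,
                          baseline_segments: int = 5) -> Optional[int]:
    """Return how many 1 GB segments were written before throughput first
    fell below drop_ratio times the initial (in-cache) speed, or None if
    throughput never drops that far."""
    if len(speeds_mbps) < baseline_segments:
        raise ValueError("need at least baseline_segments samples")
    # Median of the first few segments serves as the in-cache baseline
    head = sorted(speeds_mbps[:baseline_segments])
    baseline = head[len(head) // 2]
    threshold = drop_ratio * baseline
    for i, speed in enumerate(speeds_mbps):
        if speed < threshold:
            return i  # segments 0..i-1, i.e. i GB, were written at cache speed
    return None


# Synthetic log: 42 GB at cache speed, then a much slower post-cache plateau
synthetic = [520.0] * 42 + [80.0] * 900
print(estimate_slc_cache_gb(synthetic))  # 42
```

A single-sample threshold like this can be fooled by a momentary dip. A more robust variant would require several consecutive slow segments before declaring the cache exhausted.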

The SLC caches on the 870 QVOs run out right on schedule, at 42 GB for the 1TB model and 78 GB for the 4TB model. Write performance drops precipitously but remains stable for the rest of the drive fill process. This behavior hasn't changed meaningfully from the 860 QVO.

Charts: Average Throughput for Last 16 GB; Overall Average Throughput

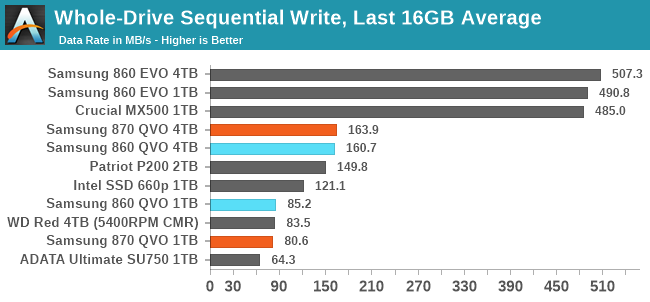

The 870 QVO turns in scores that are very similar to its predecessor's. The 1TB model averages write performance similar to that of a hard drive, albeit with very different performance characteristics along the way. The 4TB model stays ahead of the hard drive's write performance for almost the entire run. Both capacities of QLC drives offer a mere fraction of the post-cache write speed of mainstream TLC drives, and even the 2TB DRAMless TLC drive offers much better sequential write performance for almost all of the test duration.
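The two summary numbers (average throughput for the last 16 GB and overall average throughput) can be derived from the same per-GB log. This sketch assumes the averages are time-weighted, meaning total data divided by total time. The review does not spell out its formula, so treat that assumption, and the helper names, as hypothetical. For equal-size segments, a time-weighted average is the harmonic mean of the per-segment speeds. Long slow stretches therefore pull it down much harder than an arithmetic mean would.

```python
from typing import Dict, List


def time_weighted_average(speeds_mbps: List[float]) -> float:
    """Average throughput over equal-size segments, computed as total data
    divided by total time (the harmonic mean of the segment speeds)."""
    if not speeds_mbps:
        raise ValueError("need at least one segment")
    return len(speeds_mbps) / sum(1.0 / s for s in speeds_mbps)


def fill_summary(speeds_mbps: List[float], tail_gb: int = 16) -> Dict[str, float]:
    """Tail and overall averages from a log of per-1 GB write speeds."""
    return {
        f"last_{tail_gb}gb": time_weighted_average(speeds_mbps[-tail_gb:]),
        "overall": time_weighted_average(speeds_mbps),
    }


# One GB at 500 MB/s plus one GB at 100 MB/s: 2000 MB in 12 s, not 300 MB/s
print(round(time_weighted_average([500.0, 100.0]), 1))  # 166.7
```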

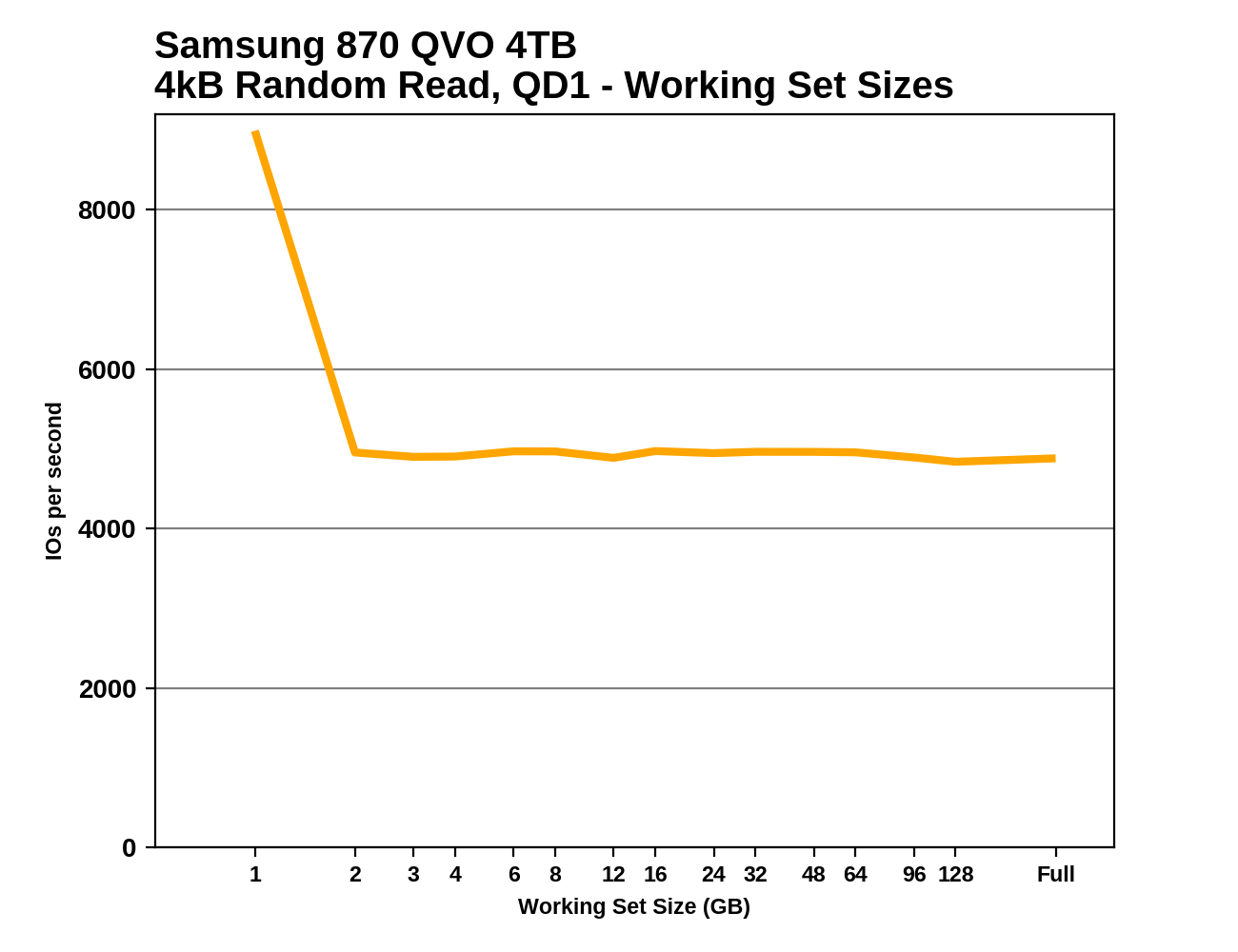

Working Set Size

Most mainstream SSDs have enough DRAM to store the entire mapping table that translates logical block addresses into physical flash memory addresses. DRAMless drives only have small buffers to cache a portion of this mapping information. Some NVMe SSDs support the Host Memory Buffer feature and can borrow a piece of the host system's DRAM for this cache rather than needing lots of on-controller memory.
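A quick back-of-the-envelope calculation shows how large that mapping table gets. It assumes a flat table with one 4-byte entry per 4 KiB logical page. That layout is common, but this review does not document it for the 870 QVO, so the page size and entry size are assumptions. Under them, the table comes out close to the familiar rule of thumb of roughly 1 GB of DRAM per TB of flash.

```python
def mapping_table_bytes(capacity_bytes: int, page_bytes: int = 4096,
                        entry_bytes: int = 4) -> int:
    """Size of a flat logical-to-physical table with one entry per page.
    page_bytes and entry_bytes are assumed values, not vendor specs."""
    entries = -(-capacity_bytes // page_bytes)  # ceiling division
    return entries * entry_bytes


for label, capacity in [("1TB", 10**12), ("4TB", 4 * 10**12)]:
    mib = mapping_table_bytes(capacity) / 2**20
    print(f"{label}: ~{mib:.0f} MiB of mapping table")  # ~931 and ~3725 MiB
```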

When accessing a logical block whose mapping is not cached, the drive needs to read the mapping from the full table stored on the flash memory before it can read the user data stored at that logical block. This adds extra latency to read operations and in the worst case may double random read latency.
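The "may double random read latency" worst case follows from simple arithmetic. A cache hit costs one flash read for the user data, while a miss adds a second flash read for the mapping. This sketch assumes both reads take the same time and are fully serialized. The 80-microsecond flash read time is a hypothetical figure, not a measurement of any drive in this review.

```python
def expected_read_latency_us(flash_read_us: float, miss_rate: float) -> float:
    """Mean random-read latency when the mapping cache misses with
    probability miss_rate. A hit costs one flash read (the user data);
    a miss costs two (the mapping first, then the data)."""
    if not 0.0 <= miss_rate <= 1.0:
        raise ValueError("miss_rate must be between 0 and 1")
    return flash_read_us * (1.0 + miss_rate)


# Hypothetical 80 us flash read: every lookup hits vs. every lookup misses
print(expected_read_latency_us(80.0, 0.0), expected_read_latency_us(80.0, 1.0))  # 80.0 160.0
```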

We can observe the effect of a drive's mapping buffer size by performing random reads from portions of the drive of different sizes. When performing random reads from a small slice of the drive, we expect all of the relevant mappings to fit in the cache. When performing random reads from the entire drive, we expect mostly cache misses.
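This behavior can be reproduced with a toy simulation of a DRAMless-style mapping cache. The sketch assumes an LRU replacement policy, uniformly random 4 KiB reads, and mapping data cached in chunks of 1024 entries (one 4 KiB table page of 4-byte entries). None of these details come from the review, and real firmware policies are not public. The function name and the 64-chunk cache size are hypothetical.

```python
import random
from collections import OrderedDict


def mapping_hit_rate(working_set_lbas: int, cache_segments: int,
                     lbas_per_segment: int = 1024, reads: int = 20_000,
                     seed: int = 0) -> float:
    """Simulate uniform random 4 KiB reads over the first working_set_lbas
    logical blocks, with an LRU cache holding cache_segments chunks of the
    mapping table. Returns the fraction of reads whose mapping was cached."""
    rng = random.Random(seed)
    cache = OrderedDict()
    hits = 0
    for _ in range(reads):
        lba = rng.randrange(working_set_lbas)
        segment = lba // lbas_per_segment  # mapping chunk covering this LBA
        if segment in cache:
            hits += 1
            cache.move_to_end(segment)  # mark as most recently used
        else:
            # Miss: the drive would first have to read this chunk from flash
            cache[segment] = None
            if len(cache) > cache_segments:
                cache.popitem(last=False)  # evict the least recently used chunk
    return hits / reads


# 64 chunks x 1024 LBAs x 4 KiB = mappings for 256 MiB of user data
for gib in (0.25, 1, 16, 256):
    lbas = int(gib * 2**30 // 4096)
    print(f"{gib:>6} GiB working set: hit rate {mapping_hit_rate(lbas, 64):.3f}")
```

Once the working set outgrows what the cached chunks cover, the hit rate falls roughly in proportion to the cached fraction. Combined with the latency model above, that produces the rising latency that DRAMless drives show as the working set grows.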

When performing this test on mainstream drives with a full-sized DRAM cache, we expect performance to be generally constant regardless of the working set size, or for performance to drop only slightly as the working set size increases.

The 870 QVO clearly has improved read latency over its predecessors, and that's enough for the 1TB 870 to slightly outperform the ADATA SU750, a DRAMless TLC drive. The 4TB 870 QVO also shows a new behavior, with excellent random read performance at the very beginning of the test, better even than the TLC-based 860 EVOs. It looks like this test may have caught some data that was still being served from the SLC cache. Otherwise, the 870 QVOs don't care much about data locality for random reads, unlike many drives with limited or no DRAM cache.

64 Comments

ksec - Tuesday, June 30, 2020

You get an additional 5% off on Newegg as well. Soon I will have this in my NAS.

Slash3 - Wednesday, July 1, 2020

I have two of the 2TB MX500 SSDs. They're fantastic drives, and I paid ~$220 each for them... eighteen months ago. The market hasn't stagnated, it's ceased movement entirely. :(

eek2121 - Wednesday, July 1, 2020

This was due to a conscious movement of NAND manufacturers to “preserve profitability”. We need some fresh blood in the industry.

scineram - Friday, July 3, 2020

That makes sense. We wouldn't want any of them to go bankrupt.

damianrobertjones - Tuesday, June 30, 2020

"That's a shrinking market segment, but high-capacity drives are probably going to be one of the last areas where SATA still makes sense"

I put together an mATX system today and it only had 1x M.2 slot. SATA SSD for storage (games) it is, then.

Great_Scott - Thursday, July 2, 2020

I see this "SATA is dead!?!?!12" on a lot of sites, and it makes no sense at all.

Most motherboards, even full-size ones, as of 2020, have an average of 2 M.2 slots. And on a lot of boards the second slot isn't PCIe(!)

The plain fact of life is that if you intend to have multiple game drives (and a lot of people do) or a smaller SSD for storage, you're going to HAVE TO use SATA. No other choice.

I'm sorry, but in this particular case, Anandtech Editors, SATA is not and can not "go away". Not for at least another 2 PC replacement cycles at that.

Lolimaster - Monday, July 6, 2020

Fact is, there's almost zero difference between SATA and the fastest NVMe SSDs. Even on the biggest open-world/huge-map loads, the difference is between zero and 1 second on a 10-15 second loading screen.

Lucky Stripes 99 - Saturday, July 4, 2020

If you don't mind eating up a PCIe slot, you can get PCIe M.2 adapter cards for fairly cheap from Chinese resellers. Single-slot cards are around $10, while dual-slot cards are around $13. I picked one up because the M.2 slot on my H97 board was restricted to x2 and my 970 EVO was saturating the link.

Sivar - Tuesday, June 30, 2020

"The 1TB QVOs (both old and new) are prone to write latencies that are worse than the 5400RPM hard drive."

This means the following sentence is a valid argument, in reality, in 2020: "I replaced my 1TB SSD with a 7200RPM hard drive to reduce write latencies, improve durability, and save more than 50% in costs."

Billy Tallis - Tuesday, June 30, 2020

Just make sure to keep in mind that write latency matters a lot less than read latency for general consumer usage, because your OS is happy to do a lot of write buffering in RAM unless the software specifically requests otherwise (e.g., databases).