The AMD Ryzen 7 5700G, Ryzen 5 5600G, and Ryzen 3 5300G Review

by Dr. Ian Cutress on August 4, 2021 1:45 PM EST

CPU Tests: Legacy and Web

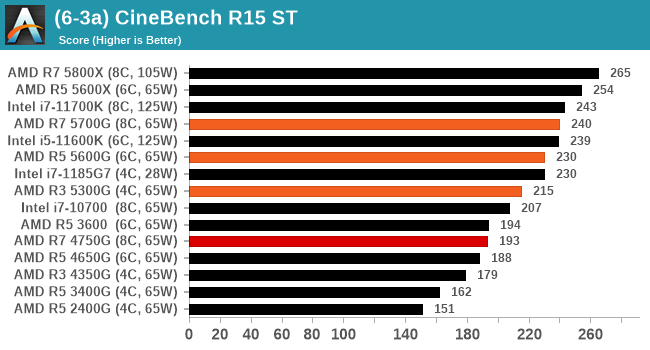

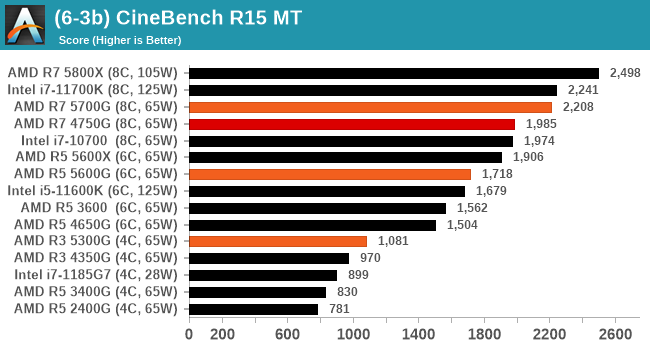

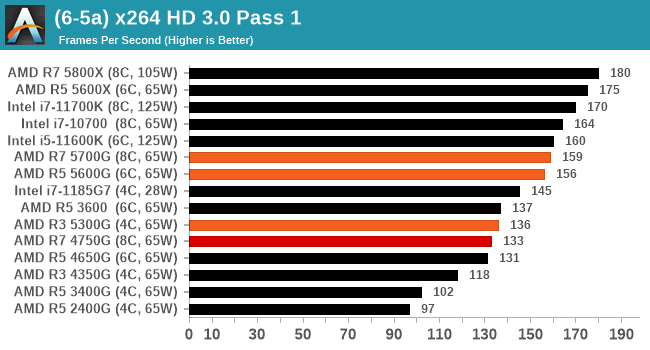

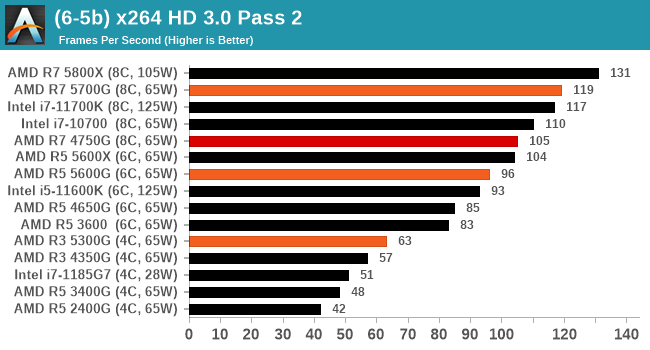

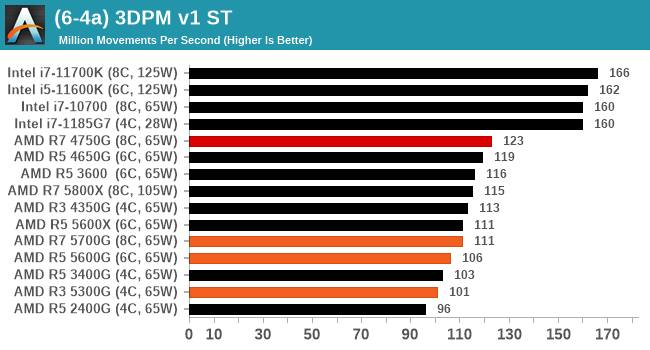

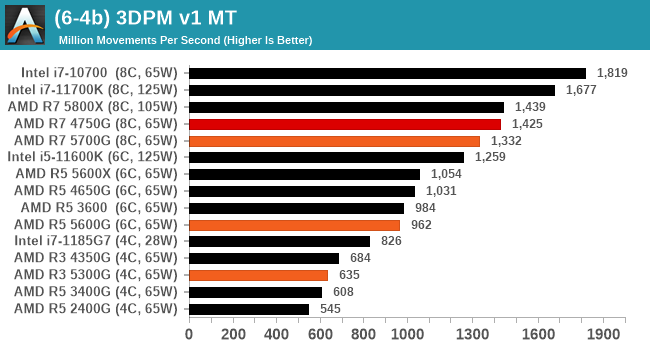

To gather data for comparison with older benchmarks, we are still keeping a number of tests in our ‘legacy’ section. This includes all the former major versions of CineBench (R15, R11.5, R10), as well as x264 HD 3.0 and the first, very naïve version of 3DPM v2.1. We won’t be transferring the data from the old testing into Bench, otherwise it would be populated with 200 CPUs that each have only one data point. Instead, it will fill up as we test more CPUs, like the other tests.

The other section here is our web tests.

Web Tests: Kraken, Octane, and Speedometer

Benchmarking with web tools is always a bit difficult. Browsers change almost daily, and the way the web is used changes even quicker. While there is some scope for computationally heavy benchmarks, most users care about responsiveness, which requires a strong back-end that works quickly enough to keep the front-end responsive. The benchmarks we chose for our web tests are essentially industry standards, or at least they were once upon a time.

It should be noted that for each test, the browser is closed and re-opened anew with a fresh cache. We use a fixed Chromium version for our tests, with the update capabilities removed, to ensure consistency.

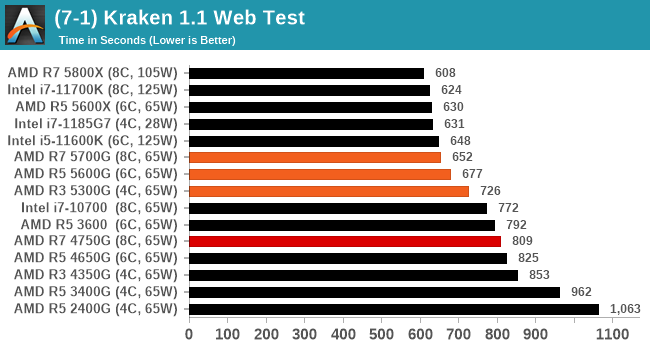

Mozilla Kraken 1.1

Kraken is a 2010 benchmark from Mozilla that runs a series of JavaScript tests. These tests are a little more involved than previous tests, looking at artificial intelligence, audio manipulation, image manipulation, JSON parsing, and cryptographic functions. The benchmark starts with an initial download of data for the audio and imaging tests, and then runs through the suite 10 times, giving a timed result.

We loop through the 10-run test four times (a total of 40 runs) and average the four end-results. The result is given as the time to complete the test, and we’re reaching a slow asymptotic limit with regard to the highest-IPC processors.

Sizeable single-thread improvements.
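The four-pass averaging used for Kraken can be sketched as below. This is a minimal illustration, not our actual test harness: the function names are hypothetical, and it assumes that each pass’s end-result is the mean of its 10 timed runs.

```python
from statistics import mean

RUNS_PER_PASS = 10  # Kraken times its suite 10 times in one pass
PASSES = 4          # the 10-run test is looped four times (40 runs total)


def pass_result(run_times_ms: list[float]) -> float:
    """End-result of one pass; ASSUMED here to be the mean of its 10 run times."""
    if len(run_times_ms) != RUNS_PER_PASS:
        raise ValueError(f"expected {RUNS_PER_PASS} runs, got {len(run_times_ms)}")
    return mean(run_times_ms)


def kraken_final(passes: list[list[float]]) -> float:
    """Average the four pass end-results; a lower time is better."""
    if len(passes) != PASSES:
        raise ValueError(f"expected {PASSES} passes, got {len(passes)}")
    return mean(pass_result(p) for p in passes)


if __name__ == "__main__":
    # Hypothetical timings in milliseconds, not measured data.
    example = [[500.0] * 10, [510.0] * 10, [490.0] * 10, [500.0] * 10]
    print(kraken_final(example))  # 500.0
```

Averaging whole passes, rather than picking the best run, smooths out run-to-run noise from the browser and the OS.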

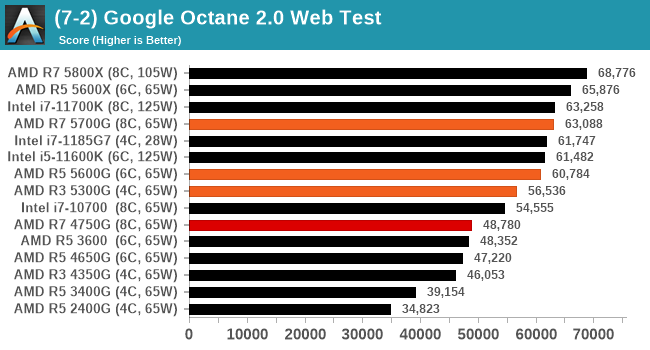

Google Octane 2.0

Our second test is also JavaScript-based, but uses a wider variety of newer JS techniques, such as object-oriented programming, kernel simulation, object creation/destruction, garbage collection, array manipulation, compiler latency, and code execution.

Octane was developed after the discontinuation of other tests, with the goal of being more web-like than previous tests. It has been a popular benchmark, making it an obvious target for optimizations in the JavaScript engines. Ultimately it was retired in early 2017 due to this, although it is still widely used as a tool to determine general CPU performance in a number of web tasks.

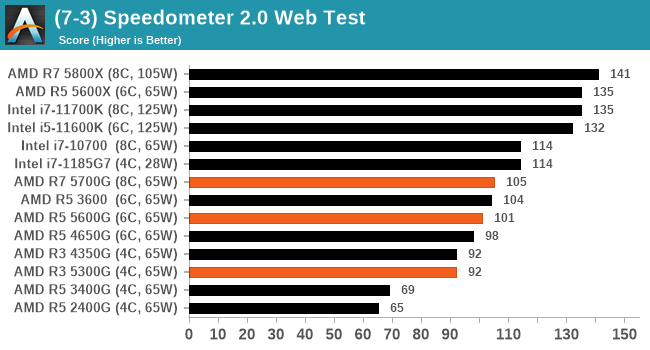

Speedometer 2: JavaScript Frameworks

Our newest web test is Speedometer 2, which runs a series of JavaScript frameworks through three simple tasks: build a list, enable each item in the list, and remove the list. All the frameworks implement the same visual cues, but obviously apply them from different coding angles.

Our test goes through the list of frameworks and produces a final score in ‘rpm’ (runs per minute), one of the benchmark’s internal metrics.

We repeat the benchmark for a dozen loops, taking the average of the last five.
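The Speedometer scoring step, keeping only the last five of twelve loops, can be sketched as follows. The function name is hypothetical and this is not our actual harness. Treating the discarded early loops as warm-up is our own assumption about the rationale, not something the benchmark itself specifies.

```python
from statistics import mean

TOTAL_LOOPS = 12  # a dozen loops of the benchmark
KEEP_LAST = 5     # only the last five loops count toward the result


def speedometer_final(loop_scores_rpm: list[float]) -> float:
    """Mean of the last five loop scores in runs per minute; higher is better.

    The first seven loops are discarded (ASSUMED here to act as warm-up).
    """
    if len(loop_scores_rpm) != TOTAL_LOOPS:
        raise ValueError(f"expected {TOTAL_LOOPS} loops, got {len(loop_scores_rpm)}")
    return mean(loop_scores_rpm[-KEEP_LAST:])


if __name__ == "__main__":
    # Hypothetical per-loop scores 100.0 .. 111.0, not measured data.
    scores = [float(s) for s in range(100, 112)]
    print(speedometer_final(scores))  # mean of 107..111 -> 109.0
```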


135 Comments


abufrejoval - Thursday, August 5, 2021

There are indeed so many variables, and at least as many shortages these days. And it's becoming a playground for speculators, who are just looking for such fragilities in the supply chain to extort money.

I remember some Kaveri-type chips being sold by AMD that had the GPU parts chopped off by virtue of being "borderline dies" on a round 300mm wafer. Eventually they also had enough of these chips with the CPU (and SoC) portion intact to sell them as a "GPU-less APU".

Don't know if the general layout of the dies allows for such "halflings" on the left or right of a wafer...

mode_13h - Wednesday, August 4, 2021

Ian, please publish the source of 3DPM, preferably to GitHub, GitLab, etc.

mode_13h - Wednesday, August 4, 2021

For me, the fact that the 5600X always beats the 5600G is proof that the non-APUs' lack of an on-die memory controller is no real deficiency (nor is the fact that the I/O die is fabbed on an older process node).

GeoffreyA - Thursday, August 5, 2021

The 5600X's bigger cache and boost could be helping it in that regard. But, yes, I don't think the on-die memory controller makes that much of a difference compared to the on-package one.

mode_13h - Friday, August 6, 2021

I wrote that knowing about the cache difference, but it's not going to help in all cases. If the on-die memory controller were a real benefit over having it on the I/O die, I'd expect to see at least a couple of benchmarks where the 5600G outperformed the 5600X. However, they didn't switch places, even once!

I know the 5600X has a higher boost clock, but they're both 65W and the G has a higher base frequency. So, even on well-threaded, non-graphical benchmarks, it's quite telling that the G can never pass the X.

GeoffreyA - Friday, August 6, 2021

Remember how the Core 2 Duo left the Athlon 64 dead on the floor? And that was without an on-die MC.

mode_13h - Saturday, August 7, 2021

That's not relevant, since there were incredible differences in their uArch and fab nodes.

In this case, we get to see Zen 3 cores on the same manufacturing process. So, it should be a very well-controlled comparison. Still not perfect, but about as close as we're going to get.

Also, the memory controller is in-package, in both cases. The main difference of concern is whether or not it's integrated into the 7 nm compute die.

GeoffreyA - Saturday, August 7, 2021

In agreement with what you are saying, even in my first comment. I think Cezanne shows that having the memory controller on the package gets the critical gains (vs. the old northbridge), and going onto the main die doesn't add much more.

As for K8 and Conroe, I always felt it was notable in that C2D was able to do such damage, even without an IMC. Back when K8 was the top dog, the tech press used to make a big deal about its IMC, as if there were no other improvements besides that.

mode_13h - Sunday, August 8, 2021

One bad thing about moving it on-die is that this gave Intel an excuse to tie ECC memory support to the CPU, rather than just the motherboard. I had a regular Pentium 4 with ECC memory, and all it required was getting a motherboard that supported it.

As I recall, the main reason Intel lagged in moving it on-die is that they were still flirting with RAMBUS, which eventually went pretty much nowhere. At work, we built one dual-CPU machine that required RAMBUS memory, but that was about the only time I touched the stuff.

As for the benefits of moving it on-die, it was seen as one of the reasons Opteron was able to pull ahead of Pentium 4. Then, when Nehalem eventually did it, it was seen as one of the reasons for its dominance over Core 2.

GeoffreyA - Sunday, August 8, 2021

Intel has a fondness for technologies that go nowhere. RAMBUS was supposed to unlock the true power of the Pentium 4, whatever that meant. Well, the Willamette I used for a decade had plain SDRAM, not even DDR. But that was a downgrade, after my Athlon 64 3000+ gave up the ghost (cheapline PSU). That was DDR400. Incidentally, when the problems began, they were RAM related. Oh, those beeps!