The Intel 12th Gen Core i9-12900K Review: Hybrid Performance Brings Hybrid Complexity

by Dr. Ian Cutress & Andrei Frumusanu on November 4, 2021 9:00 AM EST

Intel Disabled AVX-512, but Not Really

One of the more interesting disclosures about Alder Lake earlier this year was that the processor would not have Intel’s latest 512-bit vector extensions, AVX-512, despite the company making a big song and dance about how it was working with software developers to optimize for it, why it was in their laptop chips, and how no transistor should be left behind. The catch was that the silicon actually did contain the AVX-512 unit. We were told as part of the extra Architecture Day Q&A that it would be fused off on all Alder Lake CPUs.

Part of the problem with AVX-512 support on Alder Lake is that only the P-cores have the feature in the design; the E-cores do not. A downside of most operating system designs is that when a new program starts, there’s no way to accurately determine which core it will be placed on, or whether the code will take a path that includes AVX-512. So if AVX-512 code were naively run on a core that did not understand it, such as an E-core, it would cause a critical error (an invalid-instruction fault), which could crash the system. Experts in the area have pointed out that technically the chip could be designed to catch the error and hand the thread off to the right core, but Intel hasn’t done this here as it adds complexity. Disabling AVX-512 on Alder Lake means that the P-cores and the E-cores share a unified common instruction set, so both can run all software supported on either.
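The scheduling problem can be made concrete with a short sketch. Software often checks the CPU feature flags once and picks a code path, but on a hybrid chip the thread may later be migrated to a core with a different feature set, so the only safe choice is the set of features that every core reports. This sketch assumes Linux-style `/proc/cpuinfo` text (a `processor` line followed by a `flags` line for each logical CPU); the function names and the two-core flag sets in the example are hypothetical, for illustration only.

```python
from pathlib import Path


def per_cpu_flags(cpuinfo_text: str) -> dict[int, set[str]]:
    """Map each logical CPU number to the feature flags listed for it."""
    flags_by_cpu: dict[int, set[str]] = {}
    current = None
    for line in cpuinfo_text.splitlines():
        key, sep, value = line.partition(":")
        if not sep:
            continue  # skip blank separator lines between CPUs
        key = key.strip()
        if key == "processor":
            current = int(value)
            flags_by_cpu[current] = set()
        elif key == "flags" and current is not None:
            flags_by_cpu[current] = set(value.split())
    return flags_by_cpu


def common_flags(flags_by_cpu: dict[int, set[str]]) -> set[str]:
    """Features present on every core: all that a migrating thread can rely on."""
    if not flags_by_cpu:
        return set()
    return set.intersection(*flags_by_cpu.values())


def choose_path(flags: set[str]) -> str:
    """Pick the widest vector code path the given feature set allows."""
    if "avx512f" in flags:
        return "avx512"
    if "avx2" in flags:
        return "avx2"
    return "scalar"


if __name__ == "__main__":
    # Hypothetical two-core hybrid system: core 0 has AVX-512, core 1 does not.
    sample = (
        "processor\t: 0\nflags\t\t: sse2 avx avx2 avx512f avx512bw\n\n"
        "processor\t: 1\nflags\t\t: sse2 avx avx2\n"
    )
    cores = per_cpu_flags(sample)
    print(choose_path(cores[0]))             # avx512: safe only if pinned to core 0
    print(choose_path(common_flags(cores)))  # avx2: safe on any core
    cpuinfo = Path("/proc/cpuinfo")
    if cpuinfo.exists():
        print(choose_path(common_flags(per_cpu_flags(cpuinfo.read_text()))))
```

Taking the intersection is the software-side equivalent of a unified instruction set: a path chosen from it stays valid no matter which core the scheduler picks.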

There was a thought that if Intel were to release a version of Alder Lake with P-cores only, or if a system had all the E-cores disabled, there might be an option to have AVX-512. Intel shot down that concept almost immediately, saying very succinctly that no Alder Lake CPU would support AVX-512.

Nonetheless, we tested to see whether it actually was fused off.

On my first system, with an MSI motherboard, disabling the E-cores was easy: just set the number of E-cores to zero in the BIOS. However, this wasn’t sufficient, as AVX-512 was still not detected.

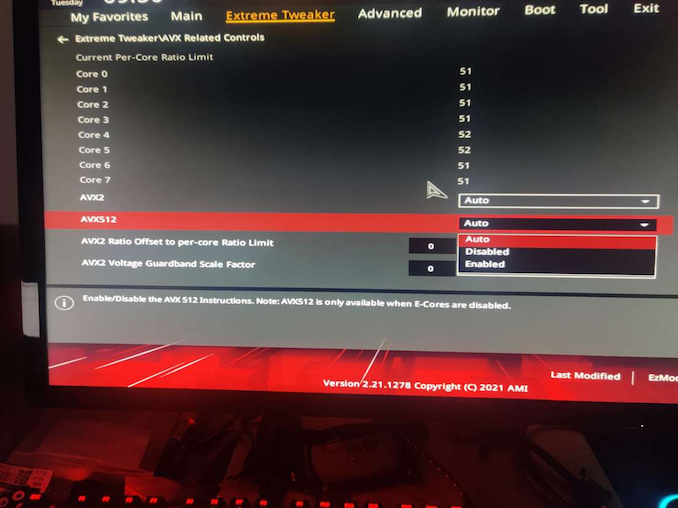

On a second system, an ASUS motherboard, there was some funny option in the BIOS.

Well I’ll be a monkey’s uncle. There’s an option, right there, front and centre for AVX-512. So we disable the E-cores and enable this option. We have AVX-512 support.

Those with some insight into AVX-512 might be aware that there are a couple of dozen different versions/add-ons of AVX-512. We confirmed that the P-cores in Alder Lake have:

- AVX512-F / F_X64

- AVX512-DQ / DQ_X64

- AVX512-CD

- AVX512-BW / BW_X64

- AVX512-VL / VLBW / VLDQ / VL_IFMA / VL_VBMI / VL_VNNI

- AVX512_VNNI

- AVX512_VBMI / VBMI2

- AVX512_IFMA

- AVX512_BITALG

- AVX512_VAES

- AVX512_VPCLMULQDQ

- AVX512_GFNI

- AVX512_BF16

- AVX512_VP2INTERSECT

- AVX512_FP16
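For readers who want to check their own system, a minimal sketch follows. It assumes a Linux machine, where the kernel exposes CPU features as lowercase flags in `/proc/cpuinfo`; the mapping from the names in the list above to kernel flag names is our own, and only the base name of each entry is checked (for example `AVX512-VL`, not its `VLBW`/`VLDQ` combinations). The kernel's `vaes`, `vpclmulqdq` and `gfni` flags cover those instructions at every vector width, so the sketch also requires `avx512f` before counting their 512-bit forms. The function names are hypothetical.

```python
from pathlib import Path

# Linux kernel flag names for the base subsets in the list above.
AVX512_FLAGS = {
    "AVX512-F": "avx512f",
    "AVX512-DQ": "avx512dq",
    "AVX512-CD": "avx512cd",
    "AVX512-BW": "avx512bw",
    "AVX512-VL": "avx512vl",
    "AVX512_VNNI": "avx512_vnni",
    "AVX512_VBMI": "avx512vbmi",
    "AVX512_VBMI2": "avx512_vbmi2",
    "AVX512_IFMA": "avx512ifma",
    "AVX512_BITALG": "avx512_bitalg",
    "AVX512_VAES": "vaes",
    "AVX512_VPCLMULQDQ": "vpclmulqdq",
    "AVX512_GFNI": "gfni",
    "AVX512_BF16": "avx512_bf16",
    "AVX512_VP2INTERSECT": "avx512_vp2intersect",
    "AVX512_FP16": "avx512_fp16",
}

# Not AVX-512-specific flags: their 512-bit forms also need AVX512-F.
NEEDS_AVX512F = {"vaes", "vpclmulqdq", "gfni"}


def missing_avx512_subsets(flags: set[str]) -> list[str]:
    """Return the subsets from the list that the given flag set does not provide."""
    missing = []
    for name, flag in AVX512_FLAGS.items():
        present = flag in flags
        if flag in NEEDS_AVX512F:
            present = present and "avx512f" in flags
        if not present:
            missing.append(name)
    return missing


def read_flags(path: str = "/proc/cpuinfo") -> set[str]:
    """Flags of the first CPU listed in cpuinfo (empty set if unavailable)."""
    p = Path(path)
    if not p.exists():
        return set()
    for line in p.read_text().splitlines():
        key, _, value = line.partition(":")
        if key.strip() == "flags":
            return set(value.split())
    return set()


if __name__ == "__main__":
    missing = missing_avx512_subsets(read_flags())
    print("all listed subsets present" if not missing else f"missing: {missing}")
```

Because it only reads the first CPU's flags, this is meant for a P-core-only configuration like the one tested here.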

Taken together, this is essentially the full Sapphire Rapids AVX-512 support. That makes sense, given that this is the same core that’s meant to be in Sapphire Rapids (albeit with cache changes). The core also appears to have dual AVX-512 ports, as we measured a throughput of two 512-bit add/subtract instructions per cycle.
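To put the two-per-cycle figure in context, a little arithmetic helps. A 512-bit register holds 16 single-precision (32-bit) or 8 double-precision (64-bit) values, so two 512-bit adds per cycle means 32 FP32 or 16 FP64 element additions per cycle per core. The sketch below only does that multiplication; scaling by a clock speed (here the 4.9 GHz all-core frequency we observed under AVX-512 load) gives a theoretical per-core ceiling for adds alone, not a measured result, and it ignores memory bandwidth and every other bottleneck. The function names are hypothetical.

```python
def lane_ops_per_cycle(vector_bits: int, element_bits: int, instr_per_cycle: int) -> int:
    """Element-wise operations per cycle for a given vector width and issue rate."""
    lanes = vector_bits // element_bits
    return lanes * instr_per_cycle


def peak_ops_per_second(ops_per_cycle: int, clock_mhz: int) -> int:
    """Theoretical ceiling: ops per cycle times cycles per second (integer math)."""
    return ops_per_cycle * clock_mhz * 1_000_000


fp32 = lane_ops_per_cycle(512, 32, 2)  # 16 lanes x 2 instructions = 32
fp64 = lane_ops_per_cycle(512, 64, 2)  # 8 lanes x 2 instructions = 16
print(fp32, fp64)                       # 32 16
print(peak_ops_per_second(fp32, 4900) / 1e9, "billion FP32 adds/s per core (theoretical)")
```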

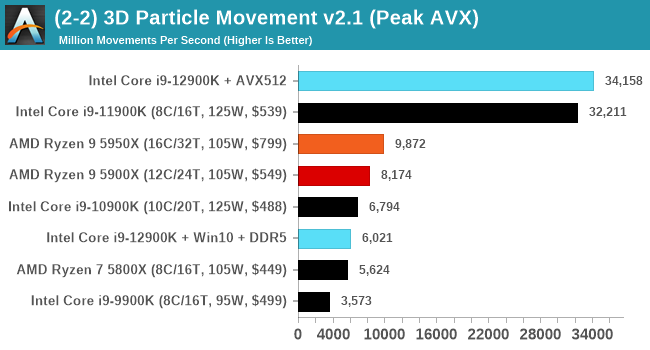

For performance, I’m using our trusty 3DPMAVX benchmark here, and compared to the previous generation Rocket Lake (which did have AVX-512), the score increases by a few percent in a scenario which isn’t DRAM limited.

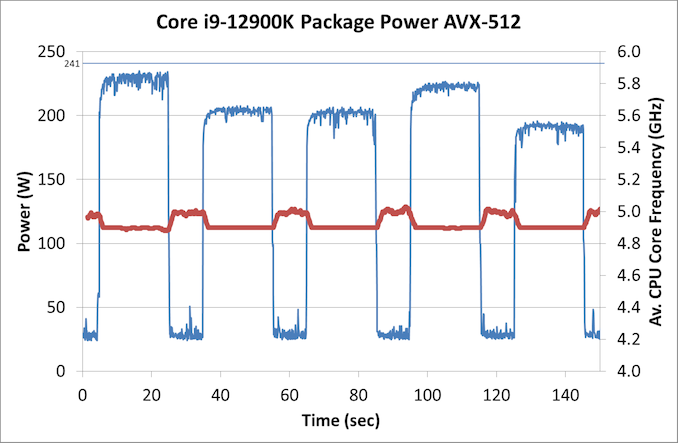

Now, back in that Rocket Lake review, we noted that the highest power consumption observed for the chip was during AVX-512 operation. At that time, our testing showed a big +50 W jump between AVX2 and AVX-512 workloads. This time around, however, Intel has managed to adjust the power requirements for AVX-512, and in our testing they were very reasonable:

In this graph, we’re showing each of the 3DPM algorithms running for 20 seconds, then idling for 10 seconds. Each one has a different intensity of AVX-512, hence why the power goes up and down. In each instance, the CPU used an all-core turbo frequency of 4.9 GHz, in line with non-AVX code, and the peak power we observed was 233 W, 8 W below the processor’s 241 W rated turbo power.
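The measurement pattern described here (20 seconds of load, then 10 seconds of idle, repeated per algorithm) is straightforward to post-process. The sketch below is an assumption about how one might do it, not our actual tooling: it takes a list of package-power samples at a fixed rate, splits it into 30-second load/idle cycles, reports the peak of each load window, and compares the overall peak with the 241 W turbo rating. The function names and the sample layout are hypothetical, and the example trace is synthetic, not measured data.

```python
def load_window_peaks(samples_w, load_s=20, idle_s=10, sample_hz=1):
    """Peak power in each load window of a repeating load/idle trace.

    Assumes the trace starts at the beginning of a load window and that
    samples are taken at a constant rate of `sample_hz` per second.
    """
    load_len = load_s * sample_hz
    period = (load_s + idle_s) * sample_hz
    peaks = []
    for start in range(0, len(samples_w), period):
        window = samples_w[start:start + load_len]
        if window:
            peaks.append(max(window))
    return peaks


def headroom_w(peaks, limit_w=241.0):
    """How far the highest observed peak sits below the rated turbo power."""
    return limit_w - max(peaks)


# Synthetic trace (not measured data): two load/idle cycles at 1 sample/s.
trace = [180.0] * 20 + [25.0] * 10 + [233.0] * 20 + [25.0] * 10
peaks = load_window_peaks(trace)
print(peaks)              # [180.0, 233.0]
print(headroom_w(peaks))  # 8.0
```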

Why?

So the question then refocuses back on Intel. Why was AVX-512 support for Alder Lake dropped, and why were we told that it is fused off, when clearly it isn’t?

Based on a variety of conversations with individuals I won’t name, it appears that the plan to have AVX-512 in Alder Lake was there from the beginning. It was working on early silicon, even as far as ES1/ES2 silicon, and was enabled in the firmware. Then for whatever reason, someone decided to remove that support from Intel’s Plan of Record (POR, the features list of the product).

By removing it from the POR, the feature did not have to be validated for retail, which partly speeds up the binning and testing/validation process. As far as I understand it, the engineers working on the feature were livid. While all their hard work would be put to use on Sapphire Rapids, it still meant that Alder Lake would drop the feature, and those who wanted to prepare for Alder Lake would have to remain on simulated support. Not only that, as we’ve seen since Architecture Day, it’s been a bit of a marketing headache. Whoever initiated the decision to drop support clearly didn’t think about how that messaging was going to go down, or how they were going to spin it into a positive. For the record, removing support isn’t a positive, especially given how much hullaballoo it seems to have caused.

We’ve done some extensive research into what Intel has done in order to ‘disable’ AVX-512. It looks like the base firmware that Intel creates has an option to enable/disable the unit, as it probably does for a lot of other features. Intel then hands this base firmware to the vendors, who adjust it how they wish. As far as we understand, when the decision to drop AVX-512 from the POR was made, the option to enable/disable AVX-512 was obfuscated in the base firmware. The idea is that the motherboard vendors wouldn’t be able to change the option unless they specifically knew how to: the standard hook to change that option was gone.

However, some motherboard vendors have figured it out. From our investigation, we have learned that this works on ASUS, GIGABYTE, and ASRock motherboards; MSI motherboards, however, do not have this option. It’s worth noting that all the motherboard vendors likely designed their boards on the premise that AVX-512, with its high current draw, would be there, so when Intel cut it, some boards may have ended up over-engineered at a higher cost than needed. I bet a few vendors weren’t happy.

Update: MSI reached out to me and has said it will have this feature in BIOS versions 1.11 and above. Some boards already have this BIOS available; the rest will follow shortly.

But AVX-512 can be enabled, and we are now in a state of limbo. Clearly the unit isn’t fused off; it’s just been hidden. Some engineers are annoyed, but other smart engineers at the motherboard vendors figured it out. So what does Intel do from here?

First, Intel could put the hammer down and execute a scorched earth policy. Completely strip out the firmware for AVX-512, and dictate that future BIOS/UEFI releases on all motherboards going forward cannot have this option, lest the motherboard manufacturer face some sort of wrath / decrease in marketing discretionary funds / support. Any future CPUs coming out of the factory would actually have the unit fused out, rather than simply turned off.

Second, Intel could lift the lid, acknowledge that someone made an error, and state that they’re prepared to properly support it in future consumer chips with proper validation when in a P-core only mode. This includes the upcoming P-core only chips next year.

Third, Intel could treat it like overclocking: it is what it is, your mileage may vary, there is no guarantee of performance consistency, and any errata generated will not be fixed in future revisions.

As I’ve mentioned, this decision apparently didn’t go down too well. I’m still trying to find the name of the person or people who made this decision, and to get their side of the story as to the technical reasons behind it. We were told ‘No Transistor Left Behind’, except, clearly, these ones in that person’s mind.

474 Comments


Maxiking - Thursday, November 4, 2021

Let's be real, no one expected anything else, the first time Intel can use a different node that still has its problems and AMD is embarrassed and slower again.

Lol, once Intel starts making their GPUs using TSMC, AMD back to being slowest there too.

LOL what a pathetic company

factual - Thursday, November 4, 2021

Why disparage AMD !? the harder these companies compete, the better for consumers! Stop being a mindless fanboi of any company and start thinking rationally and become a fan of your own pocketbook !

Fulljack - Thursday, November 4, 2021

so, get this, you're fine with Intel stuck giving you max 4c/8t for nearly 6 years? really?

Maxiking - Thursday, November 4, 2021

You must be from the certain sub called AMD.

I bought a 6 six for 390 USD in 2014, a 5820k.

Cry more. LOL

Maxiking - Thursday, November 4, 2021

*a 6 core

TheinsanegamerN - Thursday, November 4, 2021

Don't forget their 6 core 980x in 2009.

"But but but it wasn't a CONSUMER (read: cheap enough) CPU!!!!!!"

- THE AMDrones who forget that AMD sold a 6 core phenom that lost game benchmarks to a dual core i3 and then spent the next 5 years selling fake octo core chips.

Spunjji - Friday, November 5, 2021

"But but but it wasn't a CONSUMER (read: cheap enough) CPU!!!!!!"

Correct, there's a difference between a CPU that needs a consumer-level dual-channel memory platform and a workstation-grade triple-channel (or greater) platform. It doesn't sound so absurd if you don't strawman it with emotive nonsense and/or pretending that Used prices are the same as New.

I don't think anybody's forgotten how much better Intel's CPUs were from Core 2 all the way through to Skylake. The fact remains that when AMD returned to competition, they did so by opening up entirely new performance categories in desktop and notebook chips that Intel had previously restricted to HEDT hardware. I don't really understand why Intel fanbots like Maxiking feel so pressed about it, but they do, and it's kind of amusing to see them externalise it.

Qasar - Friday, November 5, 2021

too bad that was hedt, not mainstream, thats where your beloved intel stagnated the cpu market, maxipadking, maybe you should cry more. intels non hedt lineup was so confusing i gave up trying to decide which to get, so i just grabbed a 5830k and an asus x99 deluxe, and was done with it.

lmcd - Friday, November 5, 2021

The current street prices of $600+ for high-end desktop CPUs are comparable to HEDT prices. Let's face it, the HEDT market is underserved right now as a cost-saving measure (make them spend more for bandwidth) and not because the segmentation was bad.

In sum, my opinion is that ignoring the HEDT line doesn't make much sense because segmenting the market was a good thing for consumers. Most people didn't end up needing the advantages that the platform provided before that era got excised with Windows 11 (unrelated rant not included). That provided a cost savings.

Qasar - Friday, November 5, 2021

" The current street prices of $600+ for high-end desktop CPUs are comparable to HEDT prices " i didnt say current, as you saw, i mentioned X99, which was what 2014, that came out ?

" Let's face it, the HEDT market is underserved right now as a cost-saving measure " more like intel cant compete in that space due to ( possibly ) not being able to make a chip that big with acceptable yields so they just havent bothered. maybe that is also why their desktop cpus maxed out at 8 cores before gen 12, as they couldn't make anything bigger.

" doesn't make much sense because segmenting the market was a good thing for consumers " oh but it is bad, intel has pretty much always had way too many cpus for sale, i spent i think 2 days trying to decide which cpu to get back then, and it gave me a headache. i would have been fine with z97 i think it was with the I/O and PCIe lanes, but trying to figure out which cpu, is where the headache came from, the prices were so close between each that i gave up, spent a little more, and picked up x99 and a 5930k( i said 5830k above, but meant 5930k) and that system lasted me until early last year.