The Snapdragon 8 Gen 1 Performance Preview: Sizing Up Cortex-X2

by Dr. Ian Cutress on December 14, 2021 8:00 AM EST

At the recent Qualcomm Snapdragon Tech Summit, the company announced its new flagship smartphone processor, the Snapdragon 8 Gen 1. Replacing the Snapdragon 888, this new chip is set to be in a number of high performance flagship smartphones in 2022. The new chip is Qualcomm’s first to use Arm v9 CPU cores as well as Samsung’s 4nm process node technology. In advance of devices coming in Q1, we attended a benchmarking session using Qualcomm’s reference design, and had a couple of hours to run tests focused on the new performance core, based on Arm’s Cortex-X2 core IP.

The Snapdragon 8 Gen 1

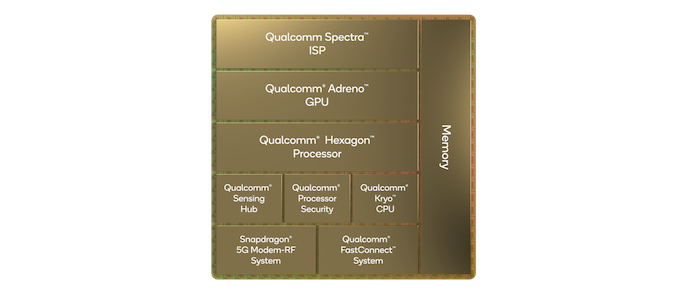

Rather than continue with the 800 naming scheme, Qualcomm is renaming its smartphone processor portfolio to make it easier to understand / market to consumers. The Snapdragon 8 Gen 1 (hereafter referred to as S8g1 or 8g1) will be the headliner for the portfolio, and we expect Qualcomm to announce other processors in the family as we move into 2022. The S8g1 uses the latest range of Arm core IP, along with updated Adreno, Hexagon, and connectivity IP including an integrated X65 modem capable of both mmWave and Sub 6 GHz for a worldwide solution in a single chip.

While Qualcomm hasn’t given any additional insight into the Adreno / graphics part of the hardware, not even giving us a 3-digit identifier, we have been told that it is a new ground-up design. Qualcomm has also told us that the new GPU family is designed to look very similar to previous Adreno GPUs from a feature/API standpoint, which means that for existing games and other apps, it should allow a smooth transition with better performance. We had time to run a few traditional gaming tests in this piece.

On the ISP side, Qualcomm’s headlines are that the chip can process 3.2 Gigapixels/sec for the cameras with an 18-bit pipeline, suitable for a single 200MP camera, 64MP burst capture, or 8K HDR video. The encode/decode engines allow for 8K30 or 4K120 10-bit H.265 encode, as well as 720p960 infinite recording. There is no AV1 decode engine in this chip, with Qualcomm’s VPs stating that the timing for that IP block did not line up with this chip.
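As a rough sanity check on the 3.2 Gigapixels/sec headline, the sketch below divides that throughput by the pixel count of each quoted camera workload to get an upper bound on frames per second. The 7680x4320 frame size for 8K is our assumption (Qualcomm did not specify it here), and real pipelines will land below these ceilings once overheads are included.

```python
# Upper-bound frame rates implied by the quoted camera pipeline throughput.
CAMERA_PIXELS_PER_SEC = 3.2e9  # 3.2 Gigapixels/sec, as quoted by Qualcomm


def max_frames_per_sec(pixels_per_frame: float) -> float:
    """Ceiling on frames/s if every pixel passes through the pipeline once."""
    return CAMERA_PIXELS_PER_SEC / pixels_per_frame


workloads = {
    "200MP single camera": 200e6,
    "64MP burst capture": 64e6,
    # Assumption: an 8K frame is 7680x4320 pixels.
    "8K video frame": 7680 * 4320,
}

for name, pixels in workloads.items():
    print(f"{name}: up to {max_frames_per_sec(pixels):.1f} frames/s")
```

Under these assumptions the pipeline has headroom for roughly 16 full-resolution 200MP frames or 50 64MP frames per second.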

AI inference performance has also quadrupled - 2x from architecture updates and 2x from software. We have a couple of AI tests in this piece.

As usual with these benchmarking sessions, we’re very interested in what the CPU part of the chip can do. The new S8g1 from Qualcomm features a 1+3+4 configuration, similar to the Snapdragon 888, but using Arm’s newest v9 architecture cores.

- The single big core is a Cortex-X2, running at 3.0 GHz with 1 MiB of private L2 cache.

- The three middle cores are Cortex-A710, running at 2.5 GHz with 512 KiB of private L2 cache.

- The four efficiency cores are Cortex-A510, running at 1.8 GHz and an unknown amount of L2 cache. These four cores are arranged in pairs, with L2 cache being private to a pair.

- On top of these cores is an additional 6 MiB of shared L3 cache and 4 MiB of system level cache at the memory controller, which is a 64-bit LPDDR5 interface running at 3200 MHz (6400 MT/s) for 51.2 GB/s theoretical peak bandwidth.
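The 51.2 GB/s figure follows directly from the bus width and transfer rate. A minimal sketch of that arithmetic is below, assuming the "3200" refers to a 3200 MHz clock with data moved on both edges (so 6400 MT/s); the function and constant names are ours, for illustration only.

```python
def peak_bandwidth_gb_s(bus_width_bits: int, transfers_per_sec: float) -> float:
    """Theoretical peak bandwidth in decimal GB/s for a given bus."""
    bytes_per_transfer = bus_width_bits / 8
    return bytes_per_transfer * transfers_per_sec / 1e9


# Assumption: 3200 MHz clock, double data rate -> 6400 million transfers/s.
MEMORY_CLOCK_HZ = 3200e6
transfers_per_sec = MEMORY_CLOCK_HZ * 2

bandwidth = peak_bandwidth_gb_s(64, transfers_per_sec)
print(f"{bandwidth:.1f} GB/s")  # prints "51.2 GB/s"
```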

Compared to the Snapdragon 888, the X2 is clocked around 5% higher than the X1 and has additional architectural improvements on top of that. Qualcomm is claiming +20% performance or +30% power efficiency for the new X2 core over the X1. That last figure goes beyond the +16% power efficiency quoted by Samsung for the move from 5nm to 4nm, so Qualcomm must be implementing additional efficiencies in silicon to get that number. Unfortunately, Qualcomm would not go into detail about what those are, nor would it say how the voltage rails are separated or whether the arrangement is the same as on the Snapdragon 888. Arm has stated that the X2 core could draw less power than the X1, and if the X2 is on its own voltage rail, that could support Qualcomm’s claims.
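To put those claims in context, the back-of-the-envelope sketch below splits each headline number into its quoted component and a residual. It assumes the gains combine multiplicatively, which is a simplification on our part rather than anything Qualcomm or Samsung has stated, so treat the residuals as rough indications only.

```python
# Figures as quoted in the text above.
clock_gain = 1.05        # X2 vs X1 frequency, "around 5%"
perf_gain = 1.20         # Qualcomm's claimed performance uplift
efficiency_gain = 1.30   # Qualcomm's claimed power-efficiency uplift
process_gain = 1.16      # Samsung's quoted 5nm -> 4nm efficiency gain

# Assumption: gains multiply, so dividing out one factor leaves the rest.
iso_clock_gain = perf_gain / clock_gain          # uplift not explained by clocks
non_process_gain = efficiency_gain / process_gain  # uplift not explained by node

print(f"Implied per-clock gain: {(iso_clock_gain - 1) * 100:.1f}%")
print(f"Efficiency beyond process: {(non_process_gain - 1) * 100:.1f}%")
```

On these assumptions, roughly 14% of the performance claim and 12% of the efficiency claim would have to come from somewhere other than the clock bump and the process node respectively.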

The middle A710 cores are also Arm v9, with an 80 MHz bump over the previous generation likely provided by process node improvements. The smaller A510 efficiency cores are built as two complexes each of two cores, with a shared L2 cache in each complex. This layout is meant to provide better area efficiency, although Qualcomm did not explain how much L2 cache is in each complex – normally they do, but for whatever reason in this generation it wasn’t detailed. We didn’t probe the number in our testing here due to limited time, but no doubt when devices come to market we’ll find out.

On top of the cores is a 6 MiB L3 cache as part of the DSU, and a 4 MiB system cache with the memory controllers. Like last year, the cores do not have direct access to this 4 MiB cache. We’ve seen Qualcomm’s main high-end competitor for next year, MediaTek, showcase that L3+system cache will be 14 MiB, with cores having access to all, so it will be interesting to see how the two compare when we have the MTK chip to test.

Benchmarking Session: How It Works

For our benchmarking session, we were given a ‘Qualcomm Reference Device’ (QRD) – this is what Qualcomm builds to show a representation of how a flagship featuring the processor might look. It looks very similar to modern smartphones, with the goal to mirror something that might come to market in both software and hardware. The software part is important, as the partner devices are likely a couple of months from launch, and so we recognize that not everything is final here. These devices also tend to be thermally similar to a future retail example, and it would be pretty obvious during testing if something were odd in the thermals.

These benchmark sessions usually involve 20-40 press, each with a device, for 2-4 hours as needed. Qualcomm preloads the device with a number of common benchmarking applications, as well as a data sheet of the results they should expect. Any member of the press that wants to sideload any new applications has to at least ask one of the reps or engineers in the room. In our traditional workflow, we sideload power monitoring tools and SPEC2017, along with our other microarchitecture tests. Qualcomm never has any issue with us using these.

As with previous QRD testing, there are two performance presets on the device – a baseline preset expected to showcase normal operation, and a high performance preset that opportunistically puts threads onto the X2 core even when power and thermals are quite high, giving the best score regardless. The debate in smartphone benchmarking over initial runs vs. sustained performance is a long one that we won’t go into here (most notably because 4 hours is too short for any extensive sustained testing). However, the performance mode is meant to enable a ‘first run’ score every time.

169 Comments

eastcoast_pete - Wednesday, December 15, 2021 - link

As of now, and assuming Mediatek doesn't screw up the firmware, the Dimensity 9000 would best QC's "flagship" mobile SoC for 2022. Now, I remember reading that no major smartphone maker plans to sell phones with the Dimensity 9000 in the US, at least not officially. Is that really so, and if yes, does anyone here know why? I gladly do without the mm wave 5G channels (the 9000 doesn't cover those), which are really only available on Verizon, and even then only in some places.

TheinsanegamerN - Friday, December 17, 2021 - link

Dimensity has no support for Verizon, which means you lose about 40% of the US market from the get go.

Wardrive86 - Wednesday, December 15, 2021 - link

Excellent article Ian! Those geekbench scores are extremely close to my tigerlake i5-1135g7 in fullsize notebook. Extremely impressive, look forward to sustained performance testing and comparisons to mediatek

TheinsanegamerN - Friday, December 17, 2021 - link

How many times does it need to be pointed out that geekbench is worthless for comparisons across systems? Unless you are doing ARM android VS ARM android or the like, the scores are not representative of real world performance.

Comparing ARM android to x86 windows is like comparing an apple to a medieval sword. Totally different use cases.

Wilco1 - Saturday, December 18, 2021 - link

How many times does it need to be pointed out the scores are directly comparable? It compiles the same benchmark source code using LLVM whenever possible and runs the same datasets. There are differences between OSes of course, but that only affects scores in a minor way.

And it's not like Geekbench scores show a completely different picture from SPEC results - modern phones are really as fast as laptops.

Wardrive86 - Sunday, December 19, 2021 - link

Agreed Wilco, it may not stress the memory subsystems to the extent that SPEC does, but it seems to paint roughly the same picture.

IUU - Monday, February 7, 2022 - link

"modern phones are really as fast as laptops."

No they are not. You will never beat the physical law. The only reason some Android or iOS devices have reached the point of being comparable with laptops or desktops is because they have managed for the first time in history to grab some superior lithographies first.

If that was not the case, laptops and desktops would be x3 to x15 faster than today's mobile devices. But if laptops and desktops sold at 700 to 2000 dollars then how would smartphones be sold at today's prices? The comparison would be abysmal.

So the industry found a solution. Keep desktops and laptops at ancient lithographies while grab for android and iOS the best of the best.

Then con

IUU - Monday, February 7, 2022 - link

then convince the sheeple that they somehow live in a revolution of better and most economical devices while keeping them at the same level of computing capacity for the best part of the decade. Not only that, but also get tons of dollars for selling a dream....

IUU - Monday, February 7, 2022 - link

I accept both geekbench AND antutu for making a quick comparison between mobile devices (and yes I know the qualms about Antutu but I think they are hysterical), and geekbench for making quick comparisons between platforms, having in the back of my mind their limitations, always.

Before the mobile devolution came we had a very clear picture of the computational capacity of our chips. Then the radicals came being secretive about their chips. So, though we still have a gross impression of the theoretical performance of desktop and laptop chips we are in the dark concerning the "mobile". As far as I am concerned I take their claims with a huge grain of salt.

Good guys don't hide....

ChrisGX - Wednesday, December 15, 2021 - link

On these numbers the Snapdragon 8 Gen 1 doesn't appear to be living up to the 4x AI perf claim. Is there more performance to come? We will know soon enough but even without any further improvement in the AI scores the SD8 Gen 1 still looks pretty good.

With the SD8 Gen 1 and the MediaTek Dimensity 9000 posting good AI scores (unconfirmed in the case of the D9000) we can expect Google and even Apple to start raising the stakes on the AI performance of future silicon. Qualcomm, too, is going to have to lift its game with MediaTek looking very competitive in so many areas.