ATI Radeon HD 2900 XT: Calling a Spade a Spade

by Derek Wilson on May 14, 2007 12:04 PM EST - Posted in GPUs

Power Supply Requirements

With new product launches, we expect to see increased power requirements for increased performance. With the 8800 series, we saw hardware that offered excellent performance without breaking the bank on power, while the highest end part available required two PCIe power connectors. We can forgive the power gluttony of the 8800 GTX as the 8800 GTS offers terrific performance with a more efficient use of power.

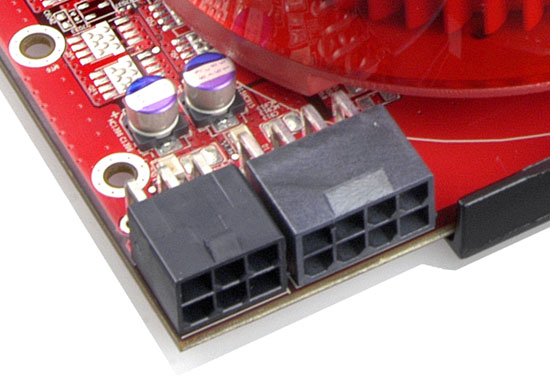

R600 goes in another direction. We have a new part that doesn't compete with the high end hardware but has even more stringent power requirements. While NVIDIA's $400 hardware offered good power efficiency, AMD's Radeon HD 2900 XT eats power for breakfast. In fact, with the R600, we see the first use of PCIe 2.0 power connectors. These expand on the current 6-pin power connector to offer up to 150W over an 8-pin configuration.

The 8-pin PCIe 2.0 power connector enables graphics cards to pull up to 300W of power just for themselves. With 75W delivered through the slot, 75W through a 6-pin PCIe power cable, and 150W through the 8-pin PCIe 2.0 cable, the R600 has plenty of juice on tap. While it doesn't pull a full 300W in any test we ran, Overdrive won't be able to function without the combination of a 6-pin and an 8-pin connector.

All is not lost, however, as two 6-pin connectors will still be able to power the R600 for normal operation. The 8-pin receptacle will accept a 6-pin cable leaving two holes empty. This doesn't degrade performance when running R600 at normal clock speeds, but overclocking will be affected without the added power.
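The two connector configurations described above can be tallied with a little arithmetic. The sketch below uses only the per-source limits quoted in the text (75W from the slot, 75W per 6-pin cable, 150W per 8-pin cable). The function and constant names are hypothetical, chosen for illustration, and the numbers are rated ceilings rather than measured draw.

```python
# Rated delivery limits in watts, as quoted in the article text.
SLOT_W = 75        # PCIe slot
SIX_PIN_W = 75     # 6-pin PCIe power cable
EIGHT_PIN_W = 150  # 8-pin PCIe 2.0 power cable

# Hypothetical lookup table mapping cable type to its rated limit.
CABLE_LIMITS_W = {"6-pin": SIX_PIN_W, "8-pin": EIGHT_PIN_W}


def board_power_budget(cables):
    """Return the maximum rated power (W) for the slot plus the given cables."""
    return SLOT_W + sum(CABLE_LIMITS_W[c] for c in cables)


# Full configuration: one 6-pin plus one 8-pin cable.
full_budget = board_power_budget(["6-pin", "8-pin"])

# Fallback: a 6-pin cable plugged into each receptacle, including the 8-pin one.
fallback_budget = board_power_budget(["6-pin", "6-pin"])

print(f"6-pin + 8-pin: {full_budget}W")
print(f"6-pin + 6-pin: {fallback_budget}W")
print(f"Headroom lost in fallback: {full_budget - fallback_budget}W")
```

Under these figures, the fallback configuration gives up 75W of rated headroom, which is consistent with normal clocks being unaffected while overclocking is restricted.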

The bottom line, as we'll shortly show, is that AMD has built hardware with the performance of an 8800 GTS in a power envelope beyond the 8800 Ultra. We will take a closer look in our performance benchmarks, where we test power draw at idle and under load using 3DMark06.

The Test

| CPU: | Intel Core 2 Extreme X6800 (2.93GHz/4MB) |
| Motherboard: | EVGA nForce 680i SLI; ASUS P5W-DH |
| Chipset: | NVIDIA nForce 680i SLI; Intel 975X |
| Chipset Drivers: | Intel 8.2.0.1014; NVIDIA nForce 9.53 |
| Hard Disk: | Seagate 7200.7 160GB SATA |
| Memory: | Corsair XMS2 DDR2-800 4-4-4-12 (1GB x 2) |
| Video Card: | Various |
| Video Drivers: | ATI Catalyst 8.37; NVIDIA ForceWare 158.22 |
| Desktop Resolution: | 2560 x 1600 - 32-bit @ 60Hz |
| OS: | Windows XP Professional SP2 |

86 Comments

dragonsqrrl - Thursday, August 25, 2011 - link

You forgot c).-if you're an ATI fanboy

vijay333 - Monday, May 14, 2007 - link

http://www.randomhouse.com/wotd/index.pperl?date=1...

"the expression to call a spade a spade is thousands of years old and etymologically has nothing whatsoever to do with any racial sentiment."

strikeback03 - Wednesday, May 16, 2007 - link

What about in Euchre, where a spade can be a club (and vice versa)?

johnsonx - Monday, May 14, 2007 - link

Just wait until AT refers to AMD's marketing budget as 'niggardly'...

bldckstark - Monday, May 14, 2007 - link

What do shovels have to do with race?

Stan11003 - Monday, May 14, 2007 - link

My big hope out of all of this is that the ATI part forces the Nvidia parts lower in price so I can use my upgrade option from EVGA to get a nice 8800 GTX instead of my 8800 GTS ACS3 320. However, with a quad core and a decent 2GB I have no gaming issues at all. I play at 1600x1200 (when did that become low res?) and everything is butter smooth. Without newer titles all this hardware is a waste anyways.

Gul Westfale - Monday, May 14, 2007 - link

the article says that the part is not a failure, but i disagree. i switched from a radeon 1950pro to an nvidia geforce 8800GTS 320MB about a month ago, and i paid only $350US for it. now i see that it still outperforms the new 2900... one of my friends wanted to wait to buy a new card, he said he hoped that the ATI part was going to be faster. now he says he will just buy the 8800GTS 320, since ATI have failed.

if they can bring out a part that competes well with the 8800GTS and price it similarly or lower then it would be worth buying, but until then i will stick with nvidia. better performance, better price, and better drivers... why would anyone buy the ATI card now?

ncage - Monday, May 14, 2007 - link

My conclusion is to wait. All of the recent GPUs do great with dx9... the question is how will they do with dx10? I think it's best to wait for dx10 titles to come out. I think crysis would be a PERFECT test.

wingless - Monday, May 14, 2007 - link

I agree with you. Crysis is going to be the benchmark for these DX10 cards. It's hard to judge both Nvidia's and AMD's DX10 performance with these current, first generation DX10 titles (most of which have a DX9 version) because they don't fully take advantage of all the power of either the G80 or R600 yet. It's true that Crysis will have a DX9 version as well but the developer stated there are some big differences in code. I'm an Nvidia fanboy but I'm disappointed with the Pure Video and HDMI support on the 8800 series cards. ATI got this worked out with their great AVIVO and their nice HDMI implementation but for now Nvidia is still the performance champ with "simpler" hardware. The G80 and R600 continue the traditions of their manufacturers. Nvidia has always been about raw power and all out speed with few bells and whistles. ATI is all about refinement, bells and whistles, innovations, and unproven new methods which may make or break them.

All I really want to wait for is to see how developers embrace CUDA or ATI's setup for PHYSICS PROCESSING! Both companies seem to have well thought out methods to do physics and I can't wait to see that showdown. AGEIA and HAVOK need to hop on-board and get some software support for all this good hardware potential they have to play with. Physics is the next big gimmick and you know how much we all love gimmicks (just like good ol' 3D acceleration 10 years ago).

poohbear - Monday, May 14, 2007 - link

they dont make a profit from high end parts that's why they're not bothering w/ it? that's AMD's story? so why bother having an FX line w/ their cpus?