NVIDIA Optimus - Truly Seamless Switchable Graphics and ASUS UL50Vf

by Jarred Walton on February 9, 2010 9:00 AM EST

NVIDIA Optimus Unveiled

Optimus is switchable graphics on steroids, but how does it all work and what makes it so much better than gen2? If you refer back to the last page where we discussed the problems with generation two switchable graphics, Optimus solves virtually every one of the complaints. Manual switching? It's no longer required. Blocking applications? That doesn't happen anymore. The 5 to 10 second delay is gone, with the actual switch taking around 200 ms—and that time is hidden in the application launch process, so you won't notice it. Finally, there's no flicker or screen blanking when you switch between IGP and dGPU. The only remaining concern is the frequency of driver updates. NVIDIA has committed to rolling Optimus into their Verde driver program, which means you should get at least quarterly driver updates, but we're still looking forward to the day when notebook and desktop drivers all come out at the same time.

As we mentioned, most of the work that went into Optimus is on the software side of things. Where the previous switchable graphics implementations used hardware muxes, all of that is now done in software. The trick is that NVIDIA's Optimus driver is able to look at each running application and decide whether it should use discrete graphics or the IGP. If an application can benefit from discrete graphics, the GPU is "instantly" powered up (most of the 200 ms delay is spent waiting for voltages to stabilize), the GPU does the necessary work, and the final result is then copied from the GPU frame buffer into the IGP frame buffer over the PCI Express bus. This is how NVIDIA is able to avoid screen flicker, and they have apparently done all of the background work using standard API calls so that there's no need to worry about updating drivers for both graphics chips simultaneously. They're a bit tight-lipped about the precise details of the software implementation (with a patent pending for what they've done), but we can at least go over the high-level view and block diagram as we discuss how things work.

NVIDIA states that their goal was to create a solution that was similar to hybrid cars. In a hybrid car, the driver doesn't worry about whether they're currently using the battery or if they're running off the regular engine. The car knows what's best and it dynamically switches between the two as necessary. It's seamless, it's easy, and it just works. You can get great performance, great battery life, and you don't need to worry about the small details. (Well, almost, but we'll discuss that in a bit.) The demo laptop for Optimus is the ASUS UL50Vf, which looks identical to the UL50Vt from the outside, but there are some internal changes.

Previously, switchable graphics required several hardware multiplexers and a hardware or software switch. With Optimus, all of the video connections come through the IGP, so there's no extra hardware on the motherboard. Let me repeat that, because this is important: Optimus requires no extra motherboard hardware (beyond the GPU, naturally). It is now possible for a laptop manufacturer to have a single motherboard design with an optional GPU. They don't need to have extra layers for the additional video traces and multiplexers, R&D times are cut down, you don't need to worry about signal integrity issues or other quality concerns, and there's no extra board real estate required for multiplexers. In short, if a laptop has an NVIDIA GPU and a CPU/chipset with an IGP, going forward there is no reason it shouldn't have Optimus. That takes care of one of the biggest barriers to adoption, and NVIDIA says we should see more than 50 Optimus enabled notebooks and laptops this summer. These will be in everything from next-generation ION netbooks to CULV designs, multimedia laptops, and high-performance gaming monsters.

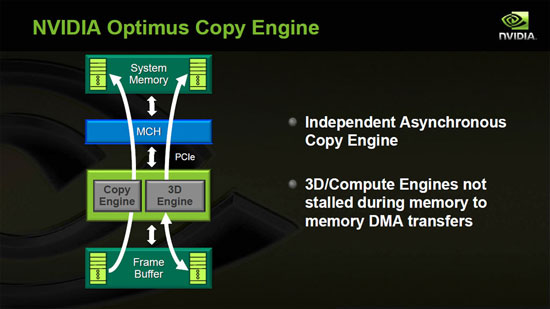

We stated that most of the work was on the software side, but there is one new hardware feature required for Optimus, which NVIDIA calls the Optimus Copy Engine. In theory you could do everything Optimus does without the copy engine, but in that case the 3D Engine would be responsible for getting the data over the PCI-E bus and into the IGP frame buffer. The problem with that approach is that the 3D engine would have to delay work on graphics rendering while it copied the frame buffer, resulting in reduced performance (it would take hundreds of GPU cycles to copy a frame). To eliminate this problem, NVIDIA added a copy engine that works asynchronously from frame rendering, and to do that they had to separate the rendering effort from the rest of the graphics engine. With those hardware changes complete, the rest is relatively straightforward. Graphics rendering is already buffered, so the Copy Engine simply transfers a finished frame over the PCI-E bus while the 3D Engine continues working on the next frame.
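To make the benefit of an asynchronous copy concrete, here is a rough timing model in Python. It is only a sketch under simplifying assumptions: the render and copy times are hypothetical numbers chosen for illustration, the function name is ours, and real hardware scheduling is more involved than this. It compares a design where the 3D engine stalls for each copy against one where a separate copy engine overlaps the copy with rendering of the next frame.

```python
def frames_per_second(render_ms, copy_ms, async_copy):
    """Estimate steady-state frame rate for a simple two-stage model.

    render_ms and copy_ms are hypothetical per-frame costs, not measured values.
    """
    if async_copy:
        # Copy of frame N overlaps with rendering of frame N+1,
        # so throughput is limited by the slower of the two stages.
        frame_ms = max(render_ms, copy_ms)
    else:
        # The 3D engine does the copy itself and cannot render meanwhile.
        frame_ms = render_ms + copy_ms
    return 1000.0 / frame_ms


# Illustrative values only: 16 ms to render a frame, 3 ms to copy it.
serialized = frames_per_second(16.0, 3.0, async_copy=False)
overlapped = frames_per_second(16.0, 3.0, async_copy=True)
print(f"3D engine copies:   {serialized:.1f} FPS")
print(f"Copy engine copies: {overlapped:.1f} FPS")
```

In this model the copy still adds latency to each individual frame, but it no longer reduces throughput as long as the copy takes less time than rendering.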

If you're worried about bandwidth, consider this: In a worst-case situation where 2560x1600 32-bit frames are sent at 60FPS (the typical LCD refresh rate), the copying only requires 983MB/s. An x16 PCI-E 2.0 link is capable of transferring 8GB/s, so there's still plenty of bandwidth left. A more realistic resolution of 1920x1080 (1080p) reduces the bandwidth requirement to 498MB/s. Remember that PCI-E is bidirectional as well, so there's still 8GB/s of bandwidth from the system to the GPU; the bandwidth from GPU to system isn't used nearly as much. There may be a slight performance hit relative to native rendering, but it should be less than 5% and the cost and routing benefits far outweigh such concerns. NVIDIA states that the copying of a frame takes roughly 20% of the frame display time, adding around 3ms of latency.
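The bandwidth figures above are easy to check. The short Python sketch below reproduces them, assuming 4 bytes per pixel for a 32-bit frame and decimal units (1MB = 1,000,000 bytes), which is what the quoted numbers imply. The function and variable names are ours, for illustration only.

```python
def frame_copy_bandwidth_mb_s(width, height, bytes_per_pixel=4, fps=60):
    """Bandwidth needed to copy every rendered frame, in decimal MB/s."""
    return width * height * bytes_per_pixel * fps / 1_000_000


worst_case = frame_copy_bandwidth_mb_s(2560, 1600)  # 2560x1600 at 60FPS
full_hd = frame_copy_bandwidth_mb_s(1920, 1080)     # 1080p at 60FPS

# 8GB/s in one direction for an x16 PCI-E 2.0 link, as quoted in the article.
pcie2_x16_mb_s = 8_000
link_share = worst_case / pcie2_x16_mb_s

# A 60Hz display shows each frame for 1000/60 ms; 20% of that is the copy time.
frame_time_ms = 1000 / 60
copy_latency_ms = 0.20 * frame_time_ms

print(f"2560x1600: {worst_case:.0f} MB/s ({link_share:.1%} of the link)")
print(f"1920x1080: {full_hd:.0f} MB/s")
print(f"Copy latency at 20% of frame time: {copy_latency_ms:.1f} ms")
```

Even the worst case uses only about an eighth of the link in the GPU-to-system direction, and 20% of a 16.7ms frame works out to a little over 3ms, in line with NVIDIA's figure.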

That covers the basics of the hardware and software, but Optimus does work beyond simply rendering an image and transferring it to the IGP buffer. It needs to know which applications require the GPU, and that brings us to a discussion of the next major software enhancement NVIDIA delivers with Optimus.

49 Comments

JarredWalton - Tuesday, February 9, 2010 - link

You can manually set applications to only use the IGP instead of turning on the dGPU, but to my knowledge there's no way to completely turn off the dGPU and keep it off. Of course, when the GPU isn't needed it is totally powered off so you don't lose battery life unless you start running apps that want to run on the GPU.

macroecon - Tuesday, February 9, 2010 - link

Well, I was getting ready to pull the trigger over the weekend to buy a UL30Vt, but I'm glad that I waited. While this is not a revolutionary feature, it does make laptops that lack it less valuable in my opinion. The video that Jarred posted toward the end of the article really demonstrates the value of on-the-fly GPU switching. I think that I'll wait a bit longer for Optimus, and also a DirectX11 nVidia GPU, to hit the market. Thanks for the coverage Jarred!

lopri - Tuesday, February 9, 2010 - link

Not to rain on NV's parade, but I'd much prefer if Optimus did its thing 100% in hardware. In an ideal world, a software solution can do the same job as a hardware solution, but I've seen some caveats with software solutions - on desktops, admittedly. Instead of trying to 'detect' the apps, detect 'loads' and take care of it in hardware.

Some might know what I'm talking about.

JarredWalton - Tuesday, February 9, 2010 - link

The only problem with this is that the software is needed to work between Intel and NVIDIA hardware. There's also a concern about if you want something to NOT run on the dGPU (for testing purposes or to save battery life). With IGP reaching the point where it can handle most video tasks, you wouldn't want to power up the dGPU to do H.264 decoding as power requirements would jump several watts.

Of course, if you could have NVIDIA IGP and dGPU it might be possible to do more on the hardware side, but Arrandale, Pineview, Sandy Bridge, etc. make it very unlikely that we would see another NVIDIA IGP any time soon.

acooke - Tuesday, February 9, 2010 - link

OK, so this is awesome (particularly with Lenovo and CUDA mentioned). But how is the encrypted profile update driver yadda yadda stuff going to work with Linux?

I'm a software developer, I work with CUDA (OpenCL actually), I use Linux. NVidia should worry about people like me because we're the motor behind the take-up of Fermi, which is going to be a significant source of cash for them. Currently I can do very basic OpenCL development while on the road with my laptop using the AMD CPU driver (despite having Intel/Lenovo hardware), but being able to use a GPU would be a huge improvement (it's not that much fun running GPU code on a CPU!).

darckhart - Wednesday, February 10, 2010 - link

yes, i'm curious about this also.

room1oh1 - Tuesday, February 9, 2010 - link

I hope they don't fit any brakes into a laptop!

MonkeyPaw - Tuesday, February 9, 2010 - link

Yeah, it's rather unfortunate that they said it should work like a hybrid, and they have the picture of a 2010 Prius in the slide. Just goes to show that car analogies don't work! They could have just drawn the parallel to your laptop battery--when you unplug the laptop, it starts using the battery with no user intervention.

horseeater - Tuesday, February 9, 2010 - link

Switchable graphics are nice, but I want external gfx cards (or enclosures for desktop gfx cards) for laptops. Just plug it in when you're home, kill precious time playing useless junk, and use the igp when on the road.

That being said, UL80-vt is reportedly awesome, and improvements are surely welcome, if they don't up the price.

synaesthetic - Wednesday, February 10, 2010 - link

I want external GPUs also, but I want one that can use the laptop's LCD display rather than forcing me to plug in an external display. After all, external displays aren't portable, but a ViDock isn't terribly large.