Low Power Server CPUs: the energy saving choice?

by Johan De Gelas on July 15, 2010 4:54 AM EST - Posted in IT Computing

If you are just trying to get your application live on the Internet, you might be under the impression that “green IT” and “Power Usage Effectiveness” are something the infrastructure guys should worry about. As a hardware enthusiast working for an internet company, you probably worry a lot more about keeping everything “in the air” than about the power budget of your servers. Still, it will have escaped no one’s attention that power is a big issue.

But instead of reiterating the clichés again, let us take a simple example. Let us say that you need a humble setup of 4 servers to run your services on the internet, each server consuming 250W when running at a high load (CPU at 60% or so). If you are based in the US, that means that you need about 10-11 amps: 1000W divided by 110V plus a safety margin. In Europe, you’ll need about 5 amps (voltage = 230V). In Europe you’ll pay up to $100 per month per amp that you need. US prices typically vary between $15 and $30 per amp per month. So depending on where you live, it is not uncommon to pay something like $300 to $500 per month just to feed electricity to your servers. In the worst case, you are paying up to $6000 per year ($100 x 5 x 12 months) to keep a very basic setup up and running. That is $24000 in four years. If you buy new servers every 4 years, you probably end up spending more on power than on the hardware!
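The arithmetic above can be sketched in a few lines of Python. The function names and the 20% safety margin are assumptions made for illustration; the text only says "plus a safety margin".

```python
def amps_needed(total_watts, volts, safety_margin=0.2):
    """Current to provision for a load, padded by a safety margin (assumed 20%)."""
    return total_watts / volts * (1 + safety_margin)


def colo_power_cost(amps, price_per_amp_month, months):
    """Total power bill when power is sold per amp per month."""
    return amps * price_per_amp_month * months


total_watts = 4 * 250                      # four servers at 250 W each
us_amps = amps_needed(total_watts, 110)    # roughly 10.9 A
eu_amps = amps_needed(total_watts, 230)    # roughly 5.2 A

# Worst case from the text: 5 A at $100 per amp per month
yearly = colo_power_cost(5, 100, 12)       # 6000
four_years = colo_power_cost(5, 100, 48)   # 24000

print(round(us_amps, 1), round(eu_amps, 1), yearly, four_years)
```

With the assumed margin, the results land in the "10-11 amps" and "about 5 amps" ranges quoted above; a different margin shifts them accordingly.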

Keeping an eye on power when choosing the hardware and software components is thus much more than naively following the hype of “green IT”. It is simply the smart thing to do. We take another shot at understanding how choosing your server components wisely can give you a cost advantage. In this article, we focus on low power Xeons in a consolidated Hyper-V/Windows 2008 virtualization scenario. Do Low Power Xeons save energy and costs? We designed a new and improved methodology to find out.

49 Comments


has407 - Thursday, July 15, 2010 - link

Good points. At the datacenter level, the IT equipment typically consumes 30% or less of the total power budget; for every 1 watt those servers etc. consume, add 2 watts to your budget for cooling, distribution, etc.

Not to mention that the amortized power provisioning cost can easily exceed the power cost over the life of the equipment (all that PD equipment, UPS's etc cost $$$), or that high power densities have numerous secondary effects (none good, at least in typical air-cooled environments).

I guess it really depends on your perspective: a datacenter end customer with a few racks (or less); a datacenter volume customer/reseller; a datacenter operator/owner; or a business that builds and operates its own private datacenters? AnandTech's analysis is really more appropriate for the smaller datacenter customer, and maybe smaller resellers.

Google published "The Datacenter as a Computer -- An Introduction to the Design of Warehouse-Scale Machines" which touches on many of the issues you mention, and should be of interest to anyone building or operating large systems or datacenters. It's not just about Google and has an excellent set of references; freely available at:

http://www.morganclaypool.com/doi/abs/10.2200/S001...

Whizzard9992 - Monday, July 19, 2010 - link

Very cool article. Bookmarked, though I'm not sure I'll ever read the whole thing. Scanning for gems now. Thanks!

gerry_g - Thursday, July 15, 2010 - link

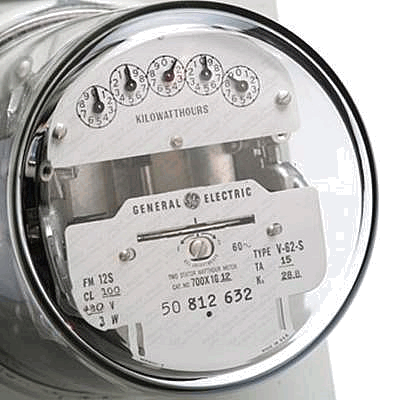

Just a little engineering clarification: one pays for electricity via energy units, not voltage, amperage or even power. Power is an instantaneous measurement; energy is power accumulated over time.

Electrical energy is almost universally measured/billed in KWh (kilowatts x hours). Power plants and some rare facilities may use megawatt-hours.

Why does it make a difference? Actual voltage not only has different standards, but there are also distribution losses. For example, nominal US 240/120V (most common on newer utility services) may droop considerably. However, in the US, if the building is fed via a three phase supply, nominal will be 208/120 volts!

The meter that measures the energy you pay for measures real power multiplied by time, regardless of single phase, three phase, nominal voltage or voltage losses in the distribution system.

Most modern computer power supplies are fairly insensitive to reasonable variations in supply voltage. They have about the same power efficiency at high or low voltage. Thus they will consume the same wattage given the same load, regardless of the present voltage.

Since we measure computer power supplies in Watts (capitalized since the unit is named after a person, as are Volt and Amp, abbreviated W, V and A), Wh (hours are not named after a person) is the most consistent base unit for energy consumption.

Watts of course are directly converted to units of heat, thus the air conditioning costs are somewhat directly computable.

Just trivia but consistent ;)

gerry_g - Thursday, July 15, 2010 - link

I should clarify: Watts are NOT voltage times amperage in AC systems! Watts represent real power (billable and real heat). Reactive and non-linear components generally draw greater amperage than is billed for if one just multiplies V x A! Look at a power supply sticker: it has a wattage rating and a VA (Voltage x Amperage) rating that do not match!

Also note, UPS systems have different VA and Wattage ratings!

For this reason, costs can only be computed by Watts x Time. (KWh as an example), not Voltage or Amperage.
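As a rough illustration of the point above, real power in an AC circuit is the apparent power (V x A) scaled by the power factor. The Python sketch below uses made-up values: the 0.7 power factor and the $0.10/KWh tariff are assumptions for illustration, not measurements.

```python
def real_power_watts(volts, amps, power_factor):
    """Real (billable) power: apparent power in VA scaled by the power factor."""
    return volts * amps * power_factor


def energy_cost(watts, hours, price_per_kwh):
    """Energy cost: real power times time, billed per kWh."""
    return watts / 1000 * hours * price_per_kwh


apparent_va = 120 * 5                          # 600 VA drawn from the outlet
real_w = real_power_watts(120, 5, 0.7)         # about 420 W of real power
monthly = energy_cost(real_w, 24 * 30, 0.10)   # 30-day month at the assumed tariff

print(apparent_va, round(real_w), round(monthly, 2))
```

Multiplying volts by amps alone would overstate the billed energy here by the inverse of the power factor.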

has407 - Friday, July 16, 2010 - link

Hmm.... If your Watts come with power distribution, UPS, cooling and amortization, then maybe "costs can only be computed by Watts x Time".

If not, I think you're going to go broke rather quickly, at least allowing for ~1.25x for power distribution, ~1.5x for UPS, ~2x for cooling, and ~3x for CAPEX/amortization.

Would you settle at 12x your Watts for mine (in volume of course and leaving me a bit of margin)? Counter offer anyone?
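The figure of roughly 12x follows from compounding the overhead factors listed in the comment above. A minimal Python sketch, assuming the factors multiply (the comment does not say explicitly how they combine):

```python
from math import prod

# Overhead factors quoted in the comment; treating them as compounding is an assumption.
overheads = {
    "power distribution": 1.25,
    "UPS": 1.5,
    "cooling": 2.0,
    "CAPEX/amortization": 3.0,
}

total_multiplier = prod(overheads.values())
print(total_multiplier)  # 11.25
```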

has407 - Thursday, July 15, 2010 - link

True, but as Johan posted earlier, he's looking at it from the datacenter customer perspective, and amps is how it's typically priced, because that's how it's typically provisioned.

The typical standard datacenter rate sheet has three major variables if you're using your own hardware: rack space, network, and power. Rack space is typically RU's with a minimum of 1/4 rack. Network is typically flat rate at a given speed, GB/mo or some combination thereof. Power is typically 110VAC at a given amperage, usually in 5-10A increments (at least in the US). You want different power--same watts but 220 instead of 110? Three-phase? Most will sell it to you, but at an extra charge because they'll probably need a different PDU (and maybe power run) just for you.

They don't generally charge you by the Watt for power, by the bit for network, or anything less than a 1/4 rack because the datacenter operators have to provision in manageable units, and to ensure they can meet SLAs. They have to charge for those units whether you use them or not because they have to provision the infrastructure based on the assumption that you will use them. (And if you exceed them, be prepared for nasty notes or serious additional charges.)

Those PDU's, UPS's, AC units, chillers, etc. don't care if you only used 80% of your allotted watts last month--they had to be sized and provisioned based on what you might have used. That's a fixed overhead that dwarfs the cost of the watts you actually used, that the datacenter operator had to provision and has to recover. If you want/need less than that, some odd increment or on-demand, then you go to a reseller who will sell you a slice of a server, or something like Amazon EC2.

If it makes people feel better (at least for those in the US), simply consider the unit of power/billing as ~396KWH (5A@110VAC/mo) or ~792KWH (10A@110VAC/mo). Then multiply by 3, because that's the typical amount of power that's actually used (e.g., for distribution, cooling and UPS) above and beyond what your servers consume. Then multiply that by the $/KWH to come up with the operating cost. Then double it (or more) because that's the amortized cost of all those PDU's, UPS's and the cooling infrastructure in addition to the direct costs of electricity you're using.

In short, for SMB's who are using and paying for colo space and have a relatively small number of servers, the EX Xeon's may make sense, whereas those operating private datacenters or a warehouse full of machines may be better served by LP's.
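The billing-unit arithmetic in the comment above can be sketched in Python as follows. The function names are hypothetical, and the $0.10/KWH price is an assumed example value; the 3x overhead and 2x amortization factors are the ones quoted in the comment.

```python
HOURS_PER_MONTH = 24 * 30  # a 30-day month reproduces the ~396 KWH figure


def monthly_kwh(amps, volts=110):
    """Energy drawn in a month if the full provisioned current is used."""
    return amps * volts * HOURS_PER_MONTH / 1000


def monthly_cost(amps, price_per_kwh, overhead=3, amortization=2):
    """Server energy x facility overhead x tariff, then scaled for amortized infrastructure."""
    return monthly_kwh(amps) * overhead * price_per_kwh * amortization


unit_5a = monthly_kwh(5)             # 396.0
unit_10a = monthly_kwh(10)           # 792.0
cost_5a = monthly_cost(5, 0.10)      # assumed tariff of $0.10 per KWH

print(unit_5a, unit_10a, round(cost_5a, 2))
```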

gerry_g - Friday, July 16, 2010 - link

>True, but as Johan posted earlier, he's looking at it from the datacenter customer perspective, and amps is how it's typically priced, because that's how it's typically provisioned.

Not true, at least in the US or UK. Electricity is priced in KWh. Circuit protection is rated (provisioned) by Amps (a simple Google search for electricity price or cost will reveal that).

Volts x Amps is not equal to Wattage for most electronics. Thus VxAxHours is not what one is billed for.

JarredWalton - Friday, July 16, 2010 - link

You're still looking at it from the residential perspective. If you colocate your servers to a data center, you pay *their* prices, not the electric company prices, which means you don't just pay for the power your server uses but you also pay for cooling and other costs. Just a quick example:

Earthnet charges $979 per month for one rack, which includes 42U of space, 175GB of data, 8 IP addresses, and 20 Amps of power use. So if you're going with the 1U server Johan used for testing, each server can use anywhere from 168W to ~300W, or somewhere between 1.46A and 2.61A. For 40 such servers in a rack, you're looking at 58.4A to 104A worst-case. If you're managing power so that the entire rack doesn't go over 60A, at Earthnet you're going to pay an extra $600 per month for the additional 40A. This is all in addition to bandwidth and hardware costs, naturally.

In other words, Earthnet looks to charge business customers roughly $0.18 per kWh, but it's a flat rate where you pay the full $0.18 per kWh for the entire month, assuming a constant 2300W of power draw (i.e. 20A at 115VAC). In reality your servers are probably maxing out at that level and generally staying closer to half the maximum, which means your "realistic" power costs (plus cooling, etc.) work out to nearly $0.40 per kWh.
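The numbers in this example can be checked with a short Python sketch. It assumes 115VAC and a 30-day month, and it values the 20 included amps at the same $15 per amp implied by the $600 charge for 40 extra amps; that last step is an assumption about how the per-kWh rate was derived.

```python
VOLTS = 115
HOURS_PER_MONTH = 24 * 30


def amps(watts, volts=VOLTS):
    """Current drawn by a load of the given wattage."""
    return watts / volts


low, high = amps(168), amps(300)             # per server: roughly 1.46 A to 2.61 A
rack_low, rack_high = 40 * low, 40 * high    # 40 servers: roughly 58.4 A to 104 A

fee_per_amp = 600 / 40                       # $600 for 40 extra amps = $15 per amp
kwh_at_full_draw = 20 * VOLTS * HOURS_PER_MONTH / 1000    # 20 A at 115 V all month
flat_rate = 20 * fee_per_amp / kwh_at_full_draw           # roughly $0.18 per kWh
half_load_rate = flat_rate / 0.5                          # roughly $0.36 per kWh

print(round(low, 2), round(high, 2), round(rack_low, 1), round(rack_high, 1))
print(round(flat_rate, 2), round(half_load_rate, 2))
```

At half the provisioned draw the effective rate doubles, which is where a figure approaching $0.40 per kWh comes from.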

Penti - Saturday, July 17, 2010 - link

Data centers aren't exactly using a 400V/240V residential connection. It's 10/11/20 or even 200 kV and their own transformers or so. And of course megawatts of power.

Inside the datacenter it's all based on the connections and amps available though. You just can't have an unlimited load on a power socket from a PDU. And it's mostly metered.

gerry_g - Thursday, July 15, 2010 - link

Humor intended...

The average human outputs ~300 BTU/hour of heat and significant moisture. Working from home will cut the server facility's air conditioning bill by lowering the heat and humidity the AC system needs to remove!