OWC Mercury Electra 3G MAX 960GB Review: 1TB of NAND in 2.5" Form Factor

by Kristian Vättö on October 18, 2012 1:00 AM EST

Introduction

One of the main concerns about buying an SSD has always been the limited capacity compared to traditional hard drives. Nowadays we have hard drives as big as 4TB, whereas most SATA SSDs top out at 512GB. Of course, SATA SSDs are usually 2.5” and standard 2.5” hard drives don’t offer capacities like 4TB – the biggest we have at the moment is 1TB. The real issue, however, has been price. A year ago, you had to fork out around $1000 for a 512GB SSD. Given that a 500GB hard drive could be bought for a fraction of that, few were willing to pay such prices for a spacious SSD. In only a year NAND prices have dropped dramatically and it’s not unusual to see 512GB SSDs selling for less than $400. While $400 is still a lot of money for one component, it’s somewhat justifiable if you need to have one big drive instead of a multi-drive setup (e.g. in a laptop).

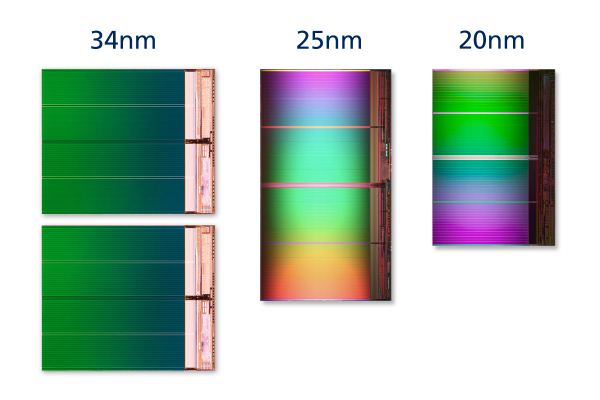

The decrease in NAND prices has not only boosted SSD sales but also opened the market for bigger capacities. Given the current price per GB, a 1TB SSD should cost around $1000, the same price a 512GB SSD cost a year ago. But there is one problem: the 128Gb 20nm IMFT die isn’t due until 2013. Most consumer-grade controllers don’t support more than a total of 64 NAND dies, or eight NAND dies per channel. With a 64Gb die, the maximum capacity for most controllers works out to be 512GB. There are exceptions such as Intel’s SSD 320, which uses Intel’s in-house controller, a 10-channel design allowing greater capacities of up to 640GB (of which 600GB is user accessible). Samsung's SSD 830 also supports greater capacities (Apple is offering 768GB in the Retina MacBook Pro) but at least for now that model is OEM only.
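The capacity ceiling above is plain arithmetic, and a rough sketch makes it easy to check. The function name is made up for illustration. The eight-channel layout is inferred from "64 dies at eight per channel", and the Intel line assumes eight dies on each of its ten channels, which the text does not state outright.

```python
def max_capacity_gb(die_gbit, channels, dies_per_channel):
    """Raw NAND capacity in GB for a given controller layout.

    die_gbit is the density of a single die in gigabits; dividing
    by 8 converts gigabits to gigabytes.
    """
    return die_gbit * channels * dies_per_channel / 8


# Typical consumer controller (assumed 8 channels x 8 dies) with 64Gb dies
print(max_capacity_gb(64, 8, 8))    # 512.0

# 10-channel design, assuming 8 dies per channel, with 64Gb dies
print(max_capacity_gb(64, 10, 8))   # 640.0

# Same 64-die limit once 128Gb dies are available
print(max_capacity_gb(128, 8, 8))   # 1024.0
```

The die count stays fixed, so doubling die density is the only way for a single controller of this kind to reach 1TB.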

The reason behind this limitation is simple: with twice as much NAND, you have twice as many pages to track. A 512GB SSD with 25nm NAND already has 64 million pages. That is a lot of data for the controller to sort through and you need a fast controller to address that many pages without a performance hit. 128Gb 20nm IMFT NAND will double the page size from 8KB to 16KB, which allows 1024GB of NAND to be installed while keeping the page count the same as before. The increase in page size is also why it takes a bit longer for SSD manufacturers to adopt 128Gb NAND dies. You need to tweak the firmware to comply with the new page and block sizes as well as change program and erase times. Remember that a page is the smallest amount of data you can write. When you increase the page size, the handling of small IOs (those smaller than the page size) in particular needs to be reworked. For example, if you’re writing a 4KB transfer, you don’t want to end up writing 16KB or write amplification will go through the roof. Block size will also double, which can have an impact on garbage collection and wear leveling (you now need to erase twice as much data as before).
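The page-count argument can be checked with a few lines. This is a minimal sketch with illustrative function names; it treats GB and KB as binary units, and the write amplification figure is a naive worst case in which every small write consumes whole pages, with no coalescing by the firmware.

```python
KIB = 1024
GIB = 1024 ** 3


def page_count(capacity_gib, page_kib):
    """Number of NAND pages the controller has to track."""
    return capacity_gib * GIB // (page_kib * KIB)


def naive_write_amplification(io_kib, page_kib):
    """Worst case: an IO is rounded up to whole pages, nothing is merged."""
    pages_needed = -(-io_kib // page_kib)  # ceiling division
    return pages_needed * page_kib / io_kib


print(page_count(512, 8))     # 67108864, i.e. 64 Mi pages
print(page_count(1024, 16))   # 67108864, same count at twice the capacity

print(naive_write_amplification(4, 8))    # 2.0
print(naive_write_amplification(4, 16))   # 4.0
```

Doubling the page size keeps the mapping table the same size, but it also doubles the penalty for an unmerged 4KB write, which is why the firmware's small-IO handling has to change.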

Since the 128Gb 20nm IMFT die and 1TB SSDs may be over half a year away, something else has to be done. There are plenty of PCIe SSDs that offer more than 512GB, but outside of enterprise SSDs they are based on the same controllers as regular 2.5” SATA SSDs. What is the trick here, then? It’s nothing more complicated than RAID 0. You put two or more controllers on one PCB, give each controller its own NAND, and tie up the package with a hardware RAID controller. PCIe SSDs will also have SATA/SAS to PCIe bridges to allow PCIe connectivity. Basically, that’s two or more SSDs in one package, but only one SATA port or PCIe slot is taken and the drive appears as a single volume.
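To illustrate how RAID 0 presents several controllers as one volume, here is a generic striping sketch. It is not taken from any particular RAID controller; the function name and the stripe size of 8 blocks are hypothetical.

```python
def raid0_locate(lba, stripe_blocks, n_members):
    """Map a logical block address on the combined volume to
    (member index, block address on that member) under RAID 0."""
    stripe_index, offset = divmod(lba, stripe_blocks)
    member = stripe_index % n_members          # stripes rotate across members
    member_stripe = stripe_index // n_members  # stripe number within the member
    return member, member_stripe * stripe_blocks + offset


# Two SSD controllers behind one RAID chip, 8-block stripes (assumed size)
for lba in (0, 7, 8, 16, 19):
    print(lba, raid0_locate(lba, stripe_blocks=8, n_members=2))
```

Consecutive stripes alternate between the two members, so the host sees one address space whose capacity is the sum of both, and large sequential transfers are spread across both controllers.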

PCIe SSDs with several SSD controllers in RAID 0 have existed for a few years now but we haven’t seen many similar SSDs in SATA form factors. PCIe SSDs are easier in the sense that there are fewer space limitations to worry about. You can have a big PCB, or even multiple PCBs, and there won’t be any issues because PCIe cards are fairly big to begin with. SATA drives, however, have strict dimension limits. You can’t go any bigger than standard 2.5” or 3.5” because people won’t buy a drive that doesn’t fit in their computer. The 3.5” SSD market is more or less non-existent, so if you want to make a product that will actually sell, you have to go 2.5”. PCBs in 2.5” SSDs are not big; if you want to fit the components of two SSDs inside a 2.5” chassis you need to be very careful.

SATA is also handicapped in terms of bandwidth. Even with SATA 6Gbps, you are limited to around 560-570MB/s, which can be achieved with a single fast SSD controller. PCIe doesn’t have such limitations as you can go all the way up to 16GB/s with a PCIe 3.0 x16 slot. Typically PCIe SSDs are either PCIe 2.0 x4 or x8, but we are still looking at 2-4GB/s of raw bandwidth, over three times more than what SATA can currently provide. Hence there’s barely any performance improvement from putting two SSDs inside a 2.5” chassis; you’ll still be bottlenecked by the SATA interface.
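The interface ceilings quoted above follow from line rate and encoding overhead. The sketch below is a back-of-the-envelope calculation; the encoding schemes (8b/10b for SATA and PCIe 2.0, 128b/130b for PCIe 3.0) come from the interface specifications rather than from this article, and the function name is illustrative. Real-world throughput lands below these figures because of protocol overhead, which is why SATA 6Gbps tops out around 560-570MB/s in practice.

```python
def payload_mb_per_s(giga_transfers_per_s, payload_bits, line_bits, lanes=1):
    """Theoretical payload bandwidth in MB/s after line encoding."""
    bits_per_s = giga_transfers_per_s * 1000 * payload_bits / line_bits
    return bits_per_s / 8 * lanes  # megabits -> megabytes, times lane count


print(payload_mb_per_s(3, 8, 10))             # SATA 3Gbps:   300.0
print(payload_mb_per_s(6, 8, 10))             # SATA 6Gbps:   600.0
print(payload_mb_per_s(5, 8, 10, lanes=4))    # PCIe 2.0 x4:  2000.0
print(payload_mb_per_s(5, 8, 10, lanes=8))    # PCIe 2.0 x8:  4000.0
print(round(payload_mb_per_s(8, 128, 130, lanes=16)))  # PCIe 3.0 x16: roughly 15750
```

With two controllers each capable of saturating a SATA link on their own, the single SATA port in front of the RAID chip is the limiting factor, not the NAND behind it.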

But what you do get from a RAID 0 2.5" SSD is the possibility of increased capacity. The enclosures are big enough to house two regular size PCBs, which in theory allows up to two 512GB SSDs to be installed into the same enclosure. We haven't seen many solutions similar to this previously, but a few months ago OWC released a 960GB Mercury Electra MAX that has two SF-2181 controllers in RAID 0 and 512GiB of NAND per controller. Let's take a deeper look inside, shall we?

36 Comments


kmmatney - Thursday, October 18, 2012 - link

For most users, the "Light Workload" benchmark is probably the best indicator of real life performance, and here it performs close to a Crucial M4 running at 3Gbps. So not completely terrible (will feel much faster than any hard drive) but you really need to be using a 6Gbps controller to be competitive nowadays.

ForeverAlone - Thursday, October 18, 2012 - link

Bloody hell, they're fast!

To everyone who is moaning about £/GB or SSD storage, you are idiots for even considering using an SSD for storage.

HKZ - Thursday, October 18, 2012 - link

Maybe for big projects and similar things it's pretty dumb for a regular user, but with games about 10GB or so even on a 256GB SSD you can stuff a lot of games on there!

HKZ - Thursday, October 18, 2012 - link

I have an OWC 240GB SSD SATA 3, and it's pretty fast, but at 1TB of space at this price this performance is dismal. I'm saving up for a Samsung 830 (maybe an 840) for the extra space and speed. I only use about 60GB of that 240 for OS X, and the rest for Windows 7. With a 512GB I'd have all kinds of space for my games. Yes, I game on a MacBook and I know it's not the best but it's all I've got right now. Don't kill me in the comments! XD

I've got that SSD and a 1TB HDD taking the optical drive spot in my 2011 MBP and there's no way I'd pay a third of the price of my already very expensive machine for an SSD that slow. Someone will buy it, but I can't wait for an affordable 1TB SSD.

Lord 666 - Thursday, October 18, 2012 - link

Think as an iSCSI target for VSA or DAS using an older but still relevant HP DL380 G6 or G7. The 3g connection will be fine and overall performance will be much faster than 1tb SATA not to mention much cheaper than 1tb SAS.

JarredWalton - Thursday, October 18, 2012 - link

But will it be reliable long-term? That's something no one really knows without extended testing in just such a scenario.

poccsx - Thursday, October 18, 2012 - link

Not a criticism, just curious, why is the Samsung 840 not in the benchmarks?

Kristian Vättö - Friday, October 19, 2012 - link

I made the graphs before we had the 840, that's why (but then this got pushed back due to the 840/Pro reviews). You can always use Bench to compare any SSD to another.

ciparis - Friday, October 19, 2012 - link

- it's not affordable
- it's not remotely efficient
- it's quite slow

This seems like a company out of its depth, producing a product it should have cancelled outright when the numbers became clear (it's no wonder they don't want to talk about them). Note that I'm giving them the benefit of the doubt and assuming these numbers weren't what they set out to create; if I'm wrong about that it's perhaps even more damning.

melgross - Friday, October 19, 2012 - link

You shouldn't assume, because you don't know. I wouldn't be surprised if there is a fair amount of demand for this in a laptop despite the price, performance or power draw. Some laptops have a pretty good battery life around 7 hours or so that would still have decent battery life with one of these.

OWC is right that this is a good job for video editing on a laptop, and professional use for dailies in the field would be a perfect use for this.

As far as criticism of the performance goes, I remember plenty of guys here talking about how they were going to buy drives a year or two ago that had performance that was much worse for much smaller drives at a higher price per Gb. If you're loading or saving fairly large projects, a doubling of performance is very significant.

Of course, if you never have done any of that, you won't understand it either.