The Great Equalizer 3: How Fast is Your Smartphone/Tablet in PC GPU Terms

by Anand Lal Shimpi on April 4, 2013 1:00 AM EST- Posted in

- Tablets

- Smartphones

- Mobile

- GPUs

- SoCs

GL/DXBenchmark 2.7 & Final Words

While the 3DMark tests were all run at 720p, the GL/DXBenchmark results run at 2.25x the pixel count: 1080p. We get a mixture of low level and simulated game benchmarks with GL/DXBenchmark 2.7; the former isn't something 3DMark offers across all platforms today. The game simulation tests are far more strenuous here, which should do a better job of putting all of this in perspective. The other benefit we get from moving to Kishonti's test is the ability to compare to iOS and Windows RT as well. There will be a 3DMark release for both of those platforms this quarter; we just don't have final software yet.
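For anyone who wants to check the 2.25x figure, here is a minimal sketch of the arithmetic. It assumes the standard 1280x720 and 1920x1080 frame sizes for 720p and 1080p; the helper names are made up for this illustration and are not part of either benchmark.

```python
# Frame sizes assumed for the two test resolutions (standard 16:9 dimensions).
RESOLUTIONS = {
    "720p": (1280, 720),
    "1080p": (1920, 1080),
}


def pixel_count(name: str) -> int:
    """Return the number of pixels in one frame at the named resolution."""
    width, height = RESOLUTIONS[name]
    return width * height


def pixel_ratio(high: str, low: str) -> float:
    """Return how many times more pixels `high` has than `low`."""
    return pixel_count(high) / pixel_count(low)


if __name__ == "__main__":
    print(pixel_count("720p"), pixel_count("1080p"))
    print(pixel_ratio("1080p", "720p"))
```

Keep in mind that pixel count only scales the per-pixel work; vertex-bound tests won't necessarily slow down by the same factor.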

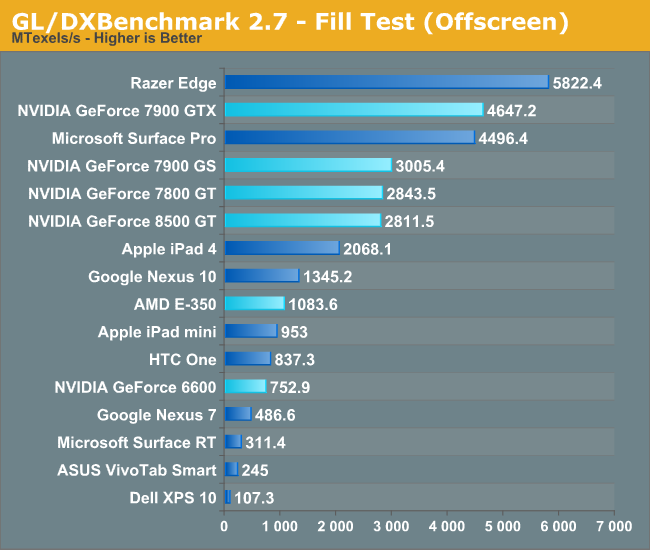

We'll start with the low level tests, beginning with Kishonti's fill rate benchmark:

Looking at raw pixel pushing power, everything post Apple's A5 seems to have displaced NVIDIA's GeForce 6600. NVIDIA's Tegra 3 doesn't appear to be quite up to snuff with the NV4x class of hardware here, despite similarities in the architectures. Both ARM's Mali-T604 (Nexus 10) and ImgTec's PowerVR SGX 554MP4 (iPad 4) do extremely well here. Both deliver higher fill rate than AMD's Radeon HD 6310, and the iPad 4 is capable of delivering midrange desktop GPU class performance from 2004 - 2005.

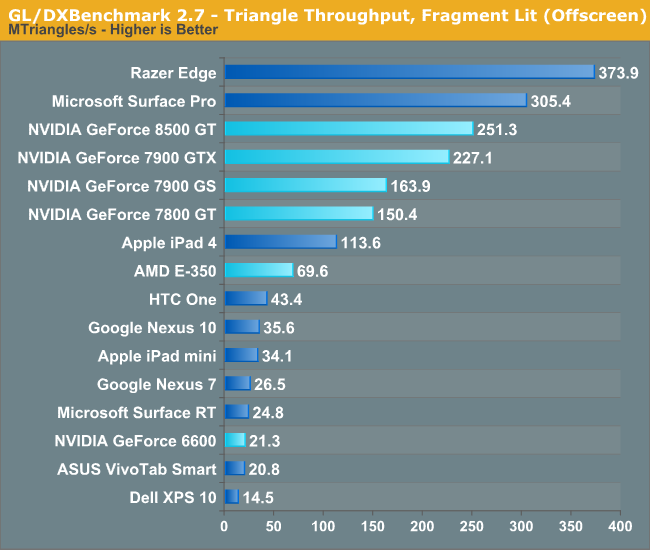

Next we'll look at raw triangle throughput. The vertex shader bound test from 3DMark did some funny stuff to the old G7x based architectures, but GL/DXBenchmark 2.7 seems to be a bit kinder:

Here the 8500 GT definitely benefits from its unified architecture as it is able to direct all of its compute resources towards the task at hand, giving it better performance than the 7900 GTX. The G7x and NV4x based architectures unfortunately have limited vertex shader hardware, and suffer as a result. That being said, most of the higher end G7x parts are a bit too much for the current crop of ultra mobile GPUs. The midrange NV4x hardware however isn't. The GeForce 6600 manages to deliver triangle throughput just south of the two Tegra 3 based devices (Surface RT, Nexus 7).

Apple's iPad 4 even delivers better performance here than the Radeon HD 6310 (E-350).

ARM's Mali-T604 doesn't do very well in this test, but none of ARM's Mali architectures have been particularly impressive in the triangle throughput tests.

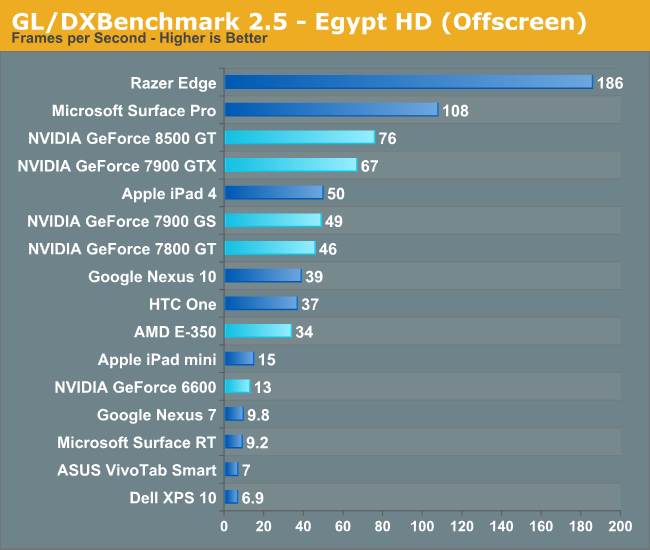

With the low level tests out of the way, it's time to look at the two game scenes. We'll start with the less complex of the two, Egypt HD:

Now we have what we've been looking for. The iPad 4 is able to deliver similar performance to the GeForce 7900 GS and 7800 GT, which by extension means it should be able to outperform a 6800 Ultra in this test. The vanilla GeForce 6600 remains faster than NVIDIA's Tegra 3, which is a bit disappointing for that part. The good news is Tegra 4 should be somewhere around high-end NV4x/upper-mid-range G7x performance in this sort of workload. Again we're seeing Intel's HD 4000 do remarkably well here. I do have to caution anyone looking to extrapolate game performance from these charts. At best we know how well these GPUs stack up in these benchmarks. Until we get true cross-platform games, we can't really be sure of anything.

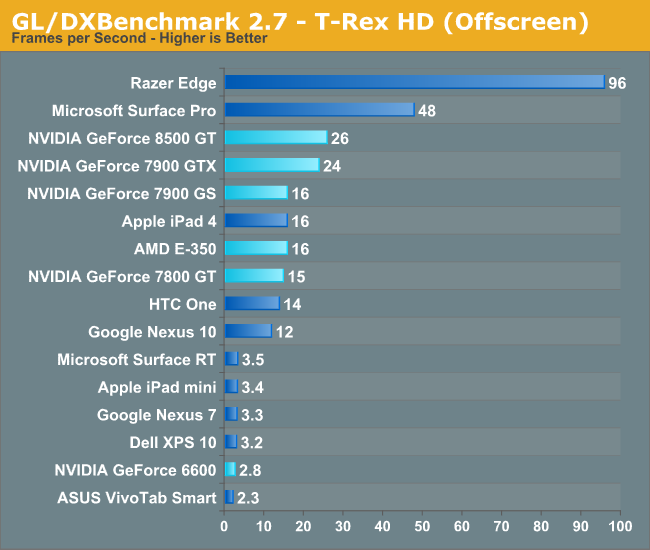

For our last trick, we'll turn to the insanely heavy T-Rex HD benchmark. This test is supposed to tide the mobile market over until the next wave of OpenGL ES 3.0 based GPUs takes over, at which point GL/DXBenchmark 3.0 will step in and keep everyone's ego in check.

T-Rex HD puts the iPad 4 (PowerVR SGX 554MP4) squarely in the class of the 7800 GT and 7900 GS. Note the similarity in performance between the 7800 GT and 7900 GS indicates the relatively independent nature of T-Rex HD when it comes to absurd amounts of memory bandwidth (relatively speaking). Given that all of the ARM platforms south of the iPad 4 line have less than 12.8GB/s of memory bandwidth (and those are the platforms these benchmarks were designed for), a lack of appreciation for the 256-bit memory interfaces on some of the discrete cards is understandable. Here the 7900 GTX shows a 50% increase in performance over the 7900 GS. Given the 62.5% advantage the GTX holds in raw pixel shader performance, the advantage makes sense.
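To put the last two numbers side by side, here is a rough sketch that divides the observed gain by the theoretical one. It uses only the 50% and 62.5% figures quoted above; the function name is hypothetical and the ratio is just a back-of-the-envelope indicator, not something the benchmark reports.

```python
def scaling_efficiency(observed_gain_pct: float, theoretical_gain_pct: float) -> float:
    """Fraction of the theoretical gain that shows up in the measured result."""
    if theoretical_gain_pct <= 0:
        raise ValueError("theoretical gain must be positive")
    return observed_gain_pct / theoretical_gain_pct


if __name__ == "__main__":
    # 7900 GTX vs. 7900 GS in T-Rex HD, using the figures quoted in the text.
    efficiency = scaling_efficiency(observed_gain_pct=50.0, theoretical_gain_pct=62.5)
    print(f"{efficiency:.0%} of the raw pixel shader advantage is realized")
```

A result close to 1.0 suggests the test tracks shader throughput fairly directly, which fits the observation that T-Rex HD is not especially sensitive to memory bandwidth.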

The 8500 GT's leading performance here is likely due to a combination of factors: newer drivers, a unified shader architecture that lines up better with what the benchmark is optimized to run on, and so on. It's still remarkable how well the iPad 4's A6X SoC does here, as well as Qualcomm's Snapdragon 600/Adreno 320. The latter is even more impressive given that it's constrained to the power envelope of a large smartphone and not a tablet. The fact that we're this close with such portable hardware is seriously amazing.

At the end of the day I'd say it's safe to assume the current crop of high-end ultra mobile devices can deliver GPU performance similar to that of mid to high-end GPUs from 2006. The caveat there is that we have to be talking about performance in workloads that don't have the same memory bandwidth demands as the games from that same era. While compute power has definitely kept up (as has memory capacity), memory bandwidth is nowhere near as good as it was on even low end to mainstream cards from that time period. For these ultra mobile devices to really shine as gaming devices, it will take a combination of further increasing compute as well as significantly enhancing memory bandwidth. Apple (and now companies like Samsung as well) has been steadily increasing memory bandwidth on its mobile SoCs for the past few generations, but it will need to do more. I suspect the mobile SoC vendors will take a page from the console folks and/or Intel and begin looking at embedded/stacked DRAM options over the coming years to address this problem.
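To give a sense of the size of that bandwidth gap, here is a minimal sketch of how peak memory bandwidth follows from bus width and transfer rate. The 64-bit and 256-bit configurations and the 1600 MT/s rate are illustrative assumptions picked to match the 12.8GB/s figure quoted earlier. They are not measured specs for any particular device in the charts, and the function name is made up.

```python
def peak_bandwidth_gbs(bus_width_bits: int, megatransfers_per_sec: float) -> float:
    """Theoretical peak bandwidth in GB/s (1 GB = 1e9 bytes).

    bus_width_bits: width of the memory interface in bits.
    megatransfers_per_sec: effective data rate in millions of transfers per second.
    """
    bytes_per_transfer = bus_width_bits / 8
    return bytes_per_transfer * megatransfers_per_sec * 1e6 / 1e9


if __name__ == "__main__":
    # Hypothetical configurations, both at an assumed 1600 MT/s data rate.
    mobile = peak_bandwidth_gbs(bus_width_bits=64, megatransfers_per_sec=1600)
    discrete = peak_bandwidth_gbs(bus_width_bits=256, megatransfers_per_sec=1600)
    print(f"64-bit interface:  {mobile:.1f} GB/s")
    print(f"256-bit interface: {discrete:.1f} GB/s")
    print(f"ratio: {discrete / mobile:.1f}x")
```

At equal data rates the wider interface has four times the peak bandwidth, which is why closing the gap means either wider interfaces, faster memory, or on-package DRAM.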

128 Comments

jeffkibuule - Thursday, April 4, 2013 - link

I'm not sure how much more significant overhead there is left when you remove APIs. At least with iOS, developers can specifically target performance characteristics of certain devices if they wish, only problem is that a graphically intense game isn't such an instant money maker on handhelds like it is on consoles. Almost no incentive to go down that route.

SlyNine - Saturday, April 6, 2013 - link

You have to remember that a 7900GT is faster than a 8500GT, hell the 8600GT had 2x as many shaders and that performed closely to the 8600GT. Even still I doubt that the benchmark does as well as it could on a 8500GT if it were better optimized. I'm willing to bet you're about right with the Geforce FX estimate.

whyso - Thursday, April 4, 2013 - link

Is this showing how bad AMD is? E-350 is a 18 watt tdp. Even if they double perf/watt with jaguar you are still looking at a chip that hangs with an ipad 4 at 9 watts.

tipoo - Thursday, April 4, 2013 - link

The 3DMark online scores seem to be showing higher numbers for the E350, but perhaps those pair it with fast RAM, since RAM seems to be the bottleneck on AMD APUs.

Flunk - Thursday, April 4, 2013 - link

A lot of people overclock their equipment for 3Dmark.

oaso2000 - Thursday, April 4, 2013 - link

I use a E350 as my desktop computer, and I get much higher results than those in the article. I don't overclock it, but I use 1333 MHz RAM.

ET - Thursday, April 4, 2013 - link

You're assuming that performance is linear with power and that a PC processor has the same power as a tablet processor. It's enough to take a look at the other Brazos processors to see that's not the case. The C-50 runs at a lower clock speed of 1GHz vs. 1.6GHz but is 9W. The newer C-60 adds boosting to 1.33GHz.

The Z-60 is a mobile variant which runs at 1GHz and has a TDP of 4.5W.

It's hard to predict where AMD's next gen will land on these charts, but it's obviously not as far in terms of performance/power as you peg it to be.

For me, even if AMD does end up a little slower than some ARM CPUs, I'd pick such a Windows tablet over an ARM based tablet for its compatibility with my large library of (mostly not too demanding) games.

whyso - Thursday, April 4, 2013 - link

The nexus 4 is already more powerful than the e-350 while consuming much less power. By the time jaguar launches, even if they double perf/watt they are still going to be behind any high end tablet or phone soc. The c-50 is weaker than or the same as the atom z-2760, which doesn't do too well.

lmcd - Thursday, April 4, 2013 - link

You both didn't factor the die shrink... This is 40nm.

whyso - Thursday, April 4, 2013 - link

Yes I did. I'm assuming they can double perf/watt, and to do that they will need to shrink the die. Jaguar only boosts IPC by ~15% with small efficiency gains. By going down to 28 nm they might be able to double perf/watt (which is clearly an optimistic prediction).