SanDisk Steps Into Storage Arrays: Launches InfiniFlash Series with Up to 512TB in 3U at <$1/GB

by Kristian Vättö on March 4, 2015 5:59 AM EST- Posted in

- Storage

- SanDisk

- Datacenter

- All-Flash Array

- InfiniFlash

In a rather unexpected move, SanDisk has announced that it is stepping into the storage array business with its in-house designed InfiniFlash all-flash array series. The driving force behind SanDisk's strategy is big data, as the current solutions on the market don't meet all the needs of big data customers. Most importantly, these customers are after higher density and lower price: their data centers can easily be hundreds of petabytes in size, so the acquisition cost as well as power, cooling and floor space play a major role.

The InfiniFlash comes in three different flavors. The IF100 is an open platform that is designed for OEMs and hyperscale customers with their own software platforms, although it does include a Software Development Kit (SDK) for monitoring purposes. The IF500 is aimed specifically at object storage using Ceph (an open source object storage system), which allows very high capacity clusters to be built efficiently. I admit that I need to dig in a bit deeper to fully understand the way object storage works and its benefits, but it requires less metadata than traditional file and block based storage systems do and the overhead of metadata management is also lower, which enables practically unlimited scale-out opportunities. Finally, the IF700 focuses on performance and is built upon Fusion-io's ION platform with database (Oracle, SAP, etc.) workloads in mind. In a nutshell, the key difference is that the IF100 is hardware only, whereas the IF500 and IF700 come with InfiniFlash OS (I was told it's Ubuntu 14.04 based). Hence the IF100 is also cheaper at less than $1 per GB, while the IF500 and IF700 will be priced somewhere between $1 and $2 per GB. For an all-flash array that's a killer price for raw storage (i.e. before compression and de-duplication), as it's not uncommon for vendors to still charge over $20 per raw gigabyte.

| SanDisk InfiniFlash Specifications | |
| --- | --- |
| Form Factor | 3U |
| Raw Capacity | 256TB & 512TB |
| Usable Capacity | 245TB & 480TB |
| Performance (IOPS) | >780K IOPS |
| Performance (Throughput) | 7GB/s |
| Power Consumption | 250W (idle) / 750W (active) |
| Connectivity | 8x SAS 2.0 (6Gbps) |

SanDisk offers two capacity configurations: 256TB and 512TB, of which 245TB and 480TB are usable. The ~7% over-provisioning is used mostly for garbage collection with the goal of increasing sustained performance. In terms of performance, SanDisk claims over 780K IOPS, although the presentation showed up to 1 million IOPS at <1ms latency for 4KB random reads with two nodes. The endurance is 75PB of writes, which results in a ~4-year lifespan with a 90/10 read/write ratio at 6GB/s. For connectivity, the InfiniFlash offers eight SAS 6Gbps ports, which can be upgraded to SAS 12Gbps in the future without any downtime.

EDIT: My initial endurance numbers were actually wrong. The endurance is 189PB for 8KB random writes and 2,270TB for sequential 16KB writes. Assuming 6GB/s throughput, that would result in a little over 10 years for 8KB random IO at a 90/10 read/write ratio.
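For anyone who wants to check the arithmetic behind the over-provisioning and lifespan figures, here is a rough Python sketch. The function names are purely illustrative. It assumes over-provisioning is expressed relative to usable capacity, and that a 90/10 read/write ratio at 6GB/s means a constant 0.6GB/s of writes; both are my reading of the numbers rather than anything SanDisk has published. Decimal units (1PB = 1,000,000GB) are the default, with a flag for binary prefixes.

```python
SECONDS_PER_YEAR = 365 * 24 * 3600


def over_provisioning_pct(raw_tb: float, usable_tb: float) -> float:
    """Spare capacity as a percentage of usable capacity (one common convention)."""
    return (raw_tb - usable_tb) / usable_tb * 100


def lifespan_years(endurance_pb: float, throughput_gb_s: float,
                   write_fraction: float, binary_units: bool = False) -> float:
    """Years until the rated write endurance is used up at a constant load."""
    # Assumption: the endurance rating and the throughput use the same prefix style.
    gb_per_pb = 1024 ** 2 if binary_units else 1000 ** 2
    write_rate_gb_s = throughput_gb_s * write_fraction  # e.g. 6GB/s * 0.1
    seconds = endurance_pb * gb_per_pb / write_rate_gb_s
    return seconds / SECONDS_PER_YEAR


for raw_tb, usable_tb in [(256, 245), (512, 480)]:
    pct = over_provisioning_pct(raw_tb, usable_tb)
    print(f"{raw_tb}TB raw: {pct:.1f}% over-provisioning")

for endurance_pb in (75, 189):
    decimal = lifespan_years(endurance_pb, 6, 0.1)
    binary = lifespan_years(endurance_pb, 6, 0.1, binary_units=True)
    print(f"{endurance_pb}PB: {decimal:.1f} years (decimal), {binary:.1f} years (binary)")
```

Under these assumptions the 512TB model works out to roughly 6.7% spare area and the 256TB model to roughly 4.5%, so the ~7% figure appears to describe the larger configuration. The 75PB rating lasts about four years and the 189PB rating about ten; with binary prefixes the latter comes to roughly 10.5 years.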

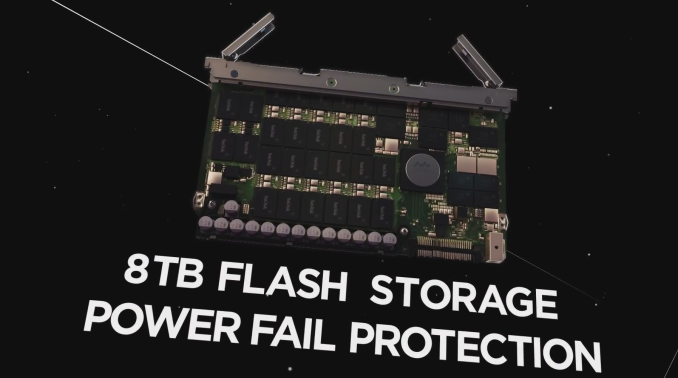

Architecturally, the InfiniFlash is made up of 64 custom 8TB cards. The cards are SAS 6Gbps based, although as usual SanDisk wouldn't disclose the underlying controller platform. However, judging by the photo it appears to be a Marvell design (see the hint of a Marvell logo on the chip), which honestly wouldn't surprise me since SanDisk has relied heavily on Marvell for controllers, including SAS ones (e.g. Optimus Eco uses the 88SS9185 SAS 6Gbps controller). As for the NAND, SanDisk is using its own 1Ynm 64Gbit MLC (i.e. second generation 19nm) and each card consists of 64 eight-die NAND packages (this actually sums up to only 4TB, so I'm waiting to hear back if there's a typo somewhere in the equation -- maybe it's a 128Gbit die after all). SanDisk will also be using different types of NAND in the future once new nodes become available in volume.

EDIT: As I speculated, the die capacity is 128Gbit (16GB), so each package consists of eight 128Gbit dies, which yields 128GB per package, and with 64 packages per card the total raw capacity is 8TB.
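The capacity math is easy to lose track of, so here is a small Python sketch of it. The function name and default arguments are hypothetical; the die sizes, the eight dies per package, the 64 packages per card and the 64 cards per array are the figures quoted above. It uses binary steps (8 bits per byte, 1024GB per TB), which is an assumption on my part.

```python
def card_capacity_tb(die_gbit: int, dies_per_package: int = 8,
                     packages_per_card: int = 64) -> float:
    """Raw capacity of one card in TB (8 bits per byte, 1024GB per TB)."""
    die_gb = die_gbit / 8                     # 128Gbit -> 16GB
    package_gb = die_gb * dies_per_package    # 16GB * 8 -> 128GB
    return package_gb * packages_per_card / 1024


CARDS_PER_ARRAY = 64  # card count quoted in the article

for die_gbit in (64, 128):
    card_tb = card_capacity_tb(die_gbit)
    print(f"{die_gbit}Gbit die: {card_tb:.0f}TB per card, "
          f"{card_tb * CARDS_PER_ARRAY:.0f}TB across {CARDS_PER_ARRAY} cards")
```

With a 64Gbit die the card only reaches 4TB, which is the mismatch I ran into at first; with a 128Gbit die it reaches 8TB, and 64 such cards add up to the 512TB raw capacity.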

The InfiniFlash will be sold through the usual channel (i.e. through OEMs, SIs & VARs), but SanDisk is also selling it directly to some of its key hyperscale customers that insisted on working directly with SanDisk. While it may seem like SanDisk is stepping into its partners' territory with direct sales, the big hyperscale customers are known to build their own designs, so in most cases they wouldn't be buying from the OEMs, SIs or VARs anyway. Besides, the InfiniFlash doesn't include any computing resources, meaning that the partners can differentiate by integrating InfiniFlash into servers for an all-in-one approach, whereas the units SanDisk is selling are just a bunch of raw flash.

While the InfiniFlash as a product is certainly exciting and unique, it's not as interesting as the implications of this strategic move. In the past, the SSD OEMs have kept their fingers away from the storage array market and have simply focused on building the drives that power the arrays. This strategy originates from the hard drive era, where the separation was clear: companies X made the hard drives and companies Y integrated them into arrays. Since there wasn't really a way to customize a hard drive in terms of form factor and features, there was no point in merging X and Y given that the two had completely different expertise.

With flash that's totally different thanks to the versatility of NAND. The sky is the limit when it comes to form factors, as NAND can easily scale from a tiny eMMC package to a 2.5" drive and, most importantly, to any custom form factor that is desired. SanDisk's biggest advantage in the storage array business is without a doubt its NAND expertise. Integrating NAND properly takes much more know-how and effort than integrating hard drives because ultimately you have to abandon the traditional form factors and go custom. It's not a surprise that the InfiniFlash is the densest all-flash array on the planet because, as a NAND manufacturer, SanDisk has the supply and engineering talent to put 8TB behind a single controller with a very space efficient design. The majority of storage array vendors simply source their drives from the likes of SanDisk, Intel and Samsung, so they are limited by the constraining form factors that are available on the open market.

The big question is how the market will react and whether this will become a trend among other SSD vendors as well. The storage array market is certainly changing, and the companies that used to rule the space are starting to lose market share to smaller yet innovative companies. As the all-flash array market matures, it seems logical for the SSD vendors to step in and eliminate the middleman for increased vertical integration. The future remains to be seen, but I wouldn't be surprised to see others following SanDisk's lead. The bar has definitely been set high, though.

Source: SanDisk InfiniFlash Product Page

22 Comments

DataGuru - Wednesday, March 4, 2015 - link

While density and power consumption are excellent, for an all-flash storage array performance is extremely poor per TB - we're looking at 1,600-4,000 IOPS per TB (vs typical 50,000 IOPS or more) and throughput is only 15-30MB/sec per TB, i.e. less than that of 7200 RPM SAS drives, which cost 60-80$/TB (vs $1,000+/TB here).

Basically, it's yet another way to waste perfectly good flash and $$$$$$.

ats - Wednesday, March 4, 2015 - link

It is limited by the SAS expanders/switches, which in the initial version appear to be only SAS2 (i.e. 6 Gbps). Assuming it's wired up like I think it is, with each 8 port external switch connected up to 4 expanders via a 4x SAS connection, you are looking at a max theoretical of 6ish GB/s of bandwidth. I'm assuming that the SAS expanders are in a mirrored 2+2 configuration.

So it appears that both 4k and 8k IOPS are bandwidth limited. The upgrade to SAS3 (aka 12Gb/s) should both double bandwidth and double IOPS.

juhatus - Wednesday, March 4, 2015 - link

Yeah, for that amount of performance it should have more front-end bandwidth, the SAS ports are totally limiting, but throw in something like InfiniBand switches and the price goes way up. And as the article says, they are selling it to a select few hyperscale customers (Amazon, FB, Google maybe). Also, as limiting as the ports are, think about backing up the data when you're running that hot 24/7. There's enterprise and then there's enterpricy :)

DataGuru - Thursday, March 5, 2015 - link

Given the price of this storage array (2K$/TB x 256-512TB), the extra cost of a pair of 12-port FDR InfiniBand/40Gb Ethernet switches (each with non-blocking bandwidth of 1+Tb/s, i.e. over 120GB/sec) is trivial (something like 10K-15K$).

The real problem is that their backplane is most likely way too slow for 64 4-8TB flash drives.

DataGuru - Saturday, March 7, 2015 - link

Actually it's not even necessary to use InfiniBand to get high front-end bandwidth (probably 70-80GB/sec - not 7GB/sec!) and it could all be SAS3 and pretty cheap. Given the existence of 48-port and 68-port 12Gb SAS3 expanders (like those from PMC - http://pmcs.com/products/storage/sas_expanders/pm8... and http://pmcs.com/products/storage/sas_expanders/pm8... which apparently only cost $320 and $200 ea), 6 expander chips (2 68-port at the top level and 4 48-port at the lower level) should allow an HA configuration connecting 16-18 4x SAS3 12Gb external ports to 64 SAS3 drives (2 SAS ports per drive). To better visualize it, think of each of those 64 SAS drives as a 2-port NIC, and each of the 4 SAS3 expanders at the bottom layer as a 48-port TOR switch with 32 1x downlinks and 4 (2+2) 4x uplinks connected to 2 top-level 68-port (17x4x) switches, each of those having 8 4x downlinks and 8 (or 9) 4x front-end links.

Maybe someone who actually understands SAS3 at the hardware level can comment on whether there are any hidden bottlenecks in the approach above? And if not, why did SanDisk build this bandwidth-handicapped array?

vFunct - Wednesday, March 4, 2015 - link

This is amazing. Pair this up with an 8-socket Xeon system and you have a database monster.

FunBunny2 - Wednesday, March 4, 2015 - link

Welcome to the club. Dr. Codd would be proud.

lorribot - Wednesday, March 4, 2015 - link

If you look at the likes of EMC and NetApp, what differentiates them from this, and how they would justify charging more per GB, is the supporting software they supply that enables provisioning, management, replication, dedupe, integration with virtual platforms etc., very little of which is evident in the article; it would seem to be just a bunch of storage. NetApp often state they are a software company; the hardware they have to provide, but really it's just commodity stuff. EMC still think hardware, and if they ever stop buying companies and get all their bits in one place they may go that way too.

This smacks of a "hey, look at what you can do if you really try" slap in the face of these sorts of vendors: "here's the hardware, stick your software in front of this and let's see what we can do together."

ats - Thursday, March 5, 2015 - link

Well the reality is as much as a company like NetApp wants to be a software company, they'll never be one. They sell hardware, and their primary market is legacy SAN/NAS.

Anyone building a modern application is bypassing all the legacy storage providers. One needs look no further than AWS. Anyone operating at any scale, which is basically everything new, wants either raw block or raw object, don't give a care about RAID cause RAID is basically useless these days, and will handle redundancy at the application level.

Solutions like this and the Skyera flash boxes (136TB RAW per 1U moving to 300TB per 1U this year!!!) are basically the bees knees. They are basically cost competitive with spinning disk systems while delivering excellent capacity.

Both are somewhat bandwidth limited currently at about 6-8 GB/s.

For the higher performance arena, you have the custom super fast systems like IBM's FlashCore et al.

And at the top end there are the NVMe solutions that are now on the market from several vendors.

I won't even get into the trend of rotational media moving to ethernet interfaces...

DuckieHo - Tuesday, March 10, 2015 - link

Is the stacked piped front panel purely for aesthetics or there's some function to them? I see the vents behind them.