Exploring DirectX 12: 3DMark API Overhead Feature Test

by Ryan Smith & Ian Cutress on March 27, 2015 8:00 AM EST. Posted in: GPUs, Radeon, Futuremark, GeForce, 3DMark, DirectX 12

Other Notes

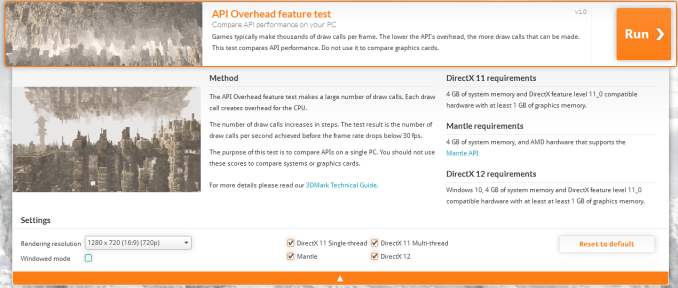

Before jumping into our results, let’s quickly talk about testing.

For our test we are using the latest version of the Windows 10 Technical Preview (build 10041) and the latest drivers from AMD, Intel, and NVIDIA. In fact, for testing DirectX 12 these latest packages are the minimum versions that the test supports. 3DMark does of course also run on Windows Vista and later; however, on Windows Vista/7/8 only the DirectX 11 and Mantle tests are available, since those are the only APIs those operating systems offer.

From a test reliability standpoint the API Overhead Feature Test (or as we’ll call it from now on, AOFT) is generally reliable under DirectX 12 and Mantle; however, we would like to note that we have found it to be somewhat unreliable under DirectX 11. DirectX 11 scores have varied widely at times, and we’ve seen one configuration flip between 1.4 million draw calls per second and 1.9 million draw calls per second for reasons we could not determine.
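To put that swing in perspective, here is a minimal sketch of the arithmetic. The two figures are the ones quoted above; the helper names `relative_spread` and `spread_about_mean` are ours for illustration and are not part of 3DMark. Measured against the lower score, the gap works out to roughly 36%.

```python
def relative_spread(low, high):
    """Gap between two scores, as a fraction of the lower score."""
    if low <= 0:
        raise ValueError("low must be positive")
    return (high - low) / low


def spread_about_mean(samples):
    """Range of a set of scores, as a fraction of their mean."""
    if not samples:
        raise ValueError("samples must not be empty")
    mean = sum(samples) / len(samples)
    return (max(samples) - min(samples)) / mean


# The two DirectX 11 results quoted in the text, in draw calls per second.
dx11_runs = [1.4e6, 1.9e6]

print(f"vs. lower score: {relative_spread(min(dx11_runs), max(dx11_runs)):.1%}")
print(f"vs. mean score:  {spread_about_mean(dx11_runs):.1%}")
```

Either way the run-to-run gap is far larger than the few percent one would normally tolerate from a benchmark, which is why the DirectX 11 numbers are best read as approximate.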

Our best guess right now is that the variability comes from the much greater overhead of DirectX 11, and consequently from all of the work that the API, video drivers, and OS are undertaking in the background. The DirectX 11 results are still good enough for what the AOFT has set out to do, which is to showcase just how much faster DX12 and Mantle are, but they show a much higher degree of variability than our standard tests and should be treated accordingly.

Meanwhile Futuremark for their part is looking to make it clear that this is first and foremost a test to showcase API differences, and is not a hardware test designed to showcase how different components perform.

“The purpose of the test is to compare API performance on a single system. It should not be used to compare component performance across different systems. Specifically, this test should not be used to compare graphics cards, since the benefit of reducing API overhead is greatest in situations where the CPU is the limiting factor.”

We have of course gone and benchmarked a number of configurations to showcase how they benefit from DirectX 12 and/or Mantle; however, as per Futuremark’s guidelines we are not looking to directly compare video cards, especially since we’re often hitting the throughput limits of the command processor, something a real-world task would not suffer from.
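The reasoning here can be illustrated with a toy model. This is our own simplification, not how the AOFT works internally: it assumes the achieved draw call rate is simply the lower of what the CPU and API can submit and what the GPU's command processor can consume. All rates below are hypothetical round numbers, not measured results.

```python
def effective_draw_rate(cpu_submit_rate, gpu_consume_rate):
    """Achieved draw calls/sec under a simple 'slowest stage wins' assumption."""
    return min(cpu_submit_rate, gpu_consume_rate)


def api_speedup(old_cpu_rate, new_cpu_rate, gpu_consume_rate):
    """Speedup from a lower-overhead API on one fixed system."""
    before = effective_draw_rate(old_cpu_rate, gpu_consume_rate)
    after = effective_draw_rate(new_cpu_rate, gpu_consume_rate)
    return after / before


# Hypothetical submission rates for a high-overhead and a low-overhead API.
OLD_API = 1_000_000
NEW_API = 10_000_000

# Hypothetical command processor limits for three different GPUs.
for name, gpu_limit in [("GPU A", 20_000_000), ("GPU B", 8_000_000), ("GPU C", 500_000)]:
    print(name, f"{api_speedup(OLD_API, NEW_API, gpu_limit):.1f}x")
```

In this sketch GPU A shows the full 10x gain because the CPU is the limit in both cases, GPU B is capped at 8x by its command processor, and GPU C shows no gain at all because it was never CPU-limited. The speedup therefore says more about where the bottleneck sits on a given system than about which graphics card is faster.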

The Test

Moving on, we also want to quickly point out the clearly beta state of the current WDDM 2.0 drivers. In particular, the DX11 results with NVIDIA’s 349.90 driver are notably lower than the results with their WDDM 1.3 driver and show much greater variability. Meanwhile AMD’s drivers have stability issues, with our dGPU testbed locking up a couple of times. These drivers are clearly not at production status.

DirectX 12 Support Status

| GPU Architecture | Current Status | Supported At Launch |
| --- | --- | --- |
| AMD GCN 1.2 (285) | Working | Yes |
| AMD GCN 1.1 (290/260 Series) | Working | Yes |
| AMD GCN 1.0 (7000/200 Series) | Working | Yes |
| NVIDIA Maxwell 2 (900 Series) | Working | Yes |
| NVIDIA Maxwell 1 (750 Series) | Working | Yes |
| NVIDIA Kepler (600/700 Series) | Working | Yes |
| NVIDIA Fermi (400/500 Series) | Not Active | Yes |
| Intel Gen 7.5 (Haswell) | Working | Yes |
| Intel Gen 8 (Broadwell) | Working | Yes |

On that note, the OS and drivers are all still in development, so performance results are subject to change as Windows 10 and the WDDM 2.0 drivers get closer to finalization.

One bit of good news is that DirectX 12 support on AMD GCN 1.0 cards is up and running here, as opposed to the issues we ran into last month with Star Swarm. So other than NVIDIA’s Fermi cards, which aren’t turned on in beta drivers, we have the ability to test all of the major x86-paired GPU architectures that support DirectX 12.

For our actual testing, we’ve split our tests between dGPUs and iGPUs. Given the vast performance difference between the two, and the fact that the CPU and GPU are bound together in the latter, this helps to better control for relative performance.

On the dGPU side we are largely reusing our Star Swarm test configuration, meaning we’re testing the full range of working DX12-capable GPU architectures across a range of CPU configurations.

DirectX 12 Preview dGPU Testing CPU Configurations (i7-4960X)

| Configuration | Emulating |
| --- | --- |
| 6C/12T @ 4.2GHz | Overclocked Core i7 |
| 4C/4T @ 3.8GHz | Core i5-4670K |
| 2C/4T @ 3.8GHz | Core i3-4370 |

Meanwhile on the iGPU side we have a range of Haswell and Kaveri processors from Intel and AMD respectively.

| Component | dGPU Testbed |
| --- | --- |
| CPU: | Intel Core i7-4960X @ 4.2GHz |
| Motherboard: | ASRock Fatal1ty X79 Professional |
| Power Supply: | Corsair AX1200i |
| Hard Disk: | Samsung SSD 840 EVO (750GB) |
| Memory: | G.Skill RipjawZ DDR3-1866 4 x 8GB (9-10-9-26) |
| Case: | NZXT Phantom 630 Windowed Edition |
| Monitor: | Asus PQ321 |
| Video Cards: | AMD Radeon R9 290X, AMD Radeon R9 285, AMD Radeon HD 7970, NVIDIA GeForce GTX 980, NVIDIA GeForce GTX 750 Ti, NVIDIA GeForce GTX 680 |
| Video Drivers: | NVIDIA Release 349.90 Beta, AMD Catalyst 15.200.1012.2 Beta |
| OS: | Windows 10 Technical Preview (Build 10041) |

| Component | iGPU Testbed |
| --- | --- |
| CPU: | AMD A10-7850K, AMD A10-7700K, AMD A8-7600, AMD A6-7400L, Intel Core i7-4790K, Intel Core i5-4690, Intel Core i3-4360, Intel Core i3-4130T, Pentium G3258 |
| Motherboard: | GIGABYTE F2A88X-UP4 for AMD, ASUS Maximus VII Impact for Intel LGA-1150, Zotac ZBOX EI750 Plus for Intel BGA |
| Power Supply: | Rosewill Silent Night 500W Platinum |
| Hard Disk: | OCZ Vertex 3 256GB OS SSD |
| Memory: | G.Skill 2x4GB DDR3-2133 9-11-10 for AMD, G.Skill 2x4GB DDR3-1866 9-10-9 at 1600 for Intel |
| Video Cards: | AMD APU Integrated, Intel CPU Integrated |
| Video Drivers: | AMD Catalyst 15.200.1012.2 Beta, Intel Driver Version 10.18.15.4124 |
| OS: | Windows 10 Technical Preview (Build 10041) |

113 Comments


Mannymal - Sunday, March 29, 2015

The article fails to address for the layman how exactly this will impact gameplay. Will games simply look better? Will AI get better? Will maps be larger and more complex? All of the above? And how much?

Ryan Smith - Sunday, March 29, 2015

It's up to the developers. Ultimately DX12 frees up resources and removes bottlenecks; it's up to the developers to decide how they want to spend that performance. They could do relatively low draw calls and get some more CPU performance for AI, or they could try to do more expansive environments, etc.

jabber - Monday, March 30, 2015

Yeah, seems to me that DX12 isn't so much about adding new eye candy; it's about a long-overdue total back-end refresh to get rid of the old DX crap and bring it more up to speed with modern hardware.

AleXopf - Sunday, March 29, 2015

I would love to see what effect DirectX 12 has on the CPU side. All the articles so far have been about CPU scaling with different GPUs. Would be nice to see how AMD compares to Intel with a better use of their higher core count.

Netmsm - Monday, March 30, 2015

AMD is the tech's hero ^_^. Always been.

JonnyDough - Tuesday, March 31, 2015

Great! Now all we need are driver hacks to make our overpriced non-DX12 video cards worth their money!

loguerto - Friday, April 3, 2015

AMD masterpiece. Does this superiority have something to do with AMD Asynchronous Shaders? I know that NVIDIA's Kepler and Maxwell asynchronous pipeline engine is not as powerful as the one in the GCN architecture.

Clorex - Wednesday, April 22, 2015

On page 4: "Intel does end up seeing the smallest gains here, but again even in this sort of worst case scenario of a powerful CPU paired with a weak CPU, DX12 still improved draw call performance by over 3.2x."

Should be "powerful CPU paired with a weak GPU".

akamateau - Thursday, April 30, 2015

FINALLY THE TRUTH IS REVEALED!!!

AMD A6-7400K CRUSHES INTEL i7 IGP by better than 100%!!!

But Anand is also guilty of a WHOPPER of a LIE!

Anand uses Intel i7-4960X. NOBODY uses RADEON with an Intel i7 CPU. But rather than use either an AMD FX CPU or an AMD A10 CPU they decided to degrade AMD's scores substantially by using an Intel product which is not optimised to work with Radeon. Intel i7 also is not GCN or HSA compatible, nor can it take advantage of Asynchronous Shader Pipelines either. Only an IDIOT would feed a Radeon GPU with an Intel CPU.

In short Anand's journalistic integrity is called into question here.

Basically RADEON WOULD HAVE DESTROYED ALL nVIDIA AND INTEL COMBINATIONS if Anand benchmarked Radeon dGPU with AMD silicon. By Itself A6 is staggeringly superior to Intel i3, i5, AND i7.

Ryan Smith & Ian Cutress have lied.

As it stands, the A10-7700K produces 4.4 MILLION draw calls per second. At 6 cores the GTX 980 in DX11 only produces 2.2 MILLION draw calls.

DX12 enables a $150 AMD APU to CRUSH a $1500.00 Intel/nVidia gaming setup that runs DX11.

Here is the second lie.

AMD Asynchronous Shader Pipelines allow for 100% multithreaded processing in the CPU feeding the GPU, whether it is an integrated APU or an 8 core FX feeding a GPU. What Anand should also show is 8 core scaling using an AMD FX processor.

Anand will say that they are too poor to use an AMD CPU or APU set up. Somehow I think that they are being disingenuous.

NO INTEL/nVidia combination can compete with AMD using DX12.