Fable Legends Early Preview: DirectX 12 Benchmark Analysis

by Ryan Smith, Ian Cutress & Daniel Williams on September 24, 2015 9:00 AM EST

Comparing Percentile Numbers Between the GTX 980 Ti and Fury X

As the two top-end cards from both graphics silicon manufacturers were released this year, there was a big buzz about which is best for what. Ryan’s extensive review of the Fury X put the two cards head to head in a variety of contests. For DirectX 12, the situation is a little less clear cut for a number of reasons – games are yet to mature, drivers are also still in the development stage, and both sides competing here are having to rethink their strategies when it comes to game engine integration and the benefits that might provide. Up until this point, DX12 contests have either been synthetic or have had some controversial issues. So for Fable Legends, we did some extra percentile-based analysis for NVIDIA vs. AMD at the top end.

For this set of benchmarks we ran our 1080p Ultra test with any adaptive frame rate technology enabled and recorded the result:

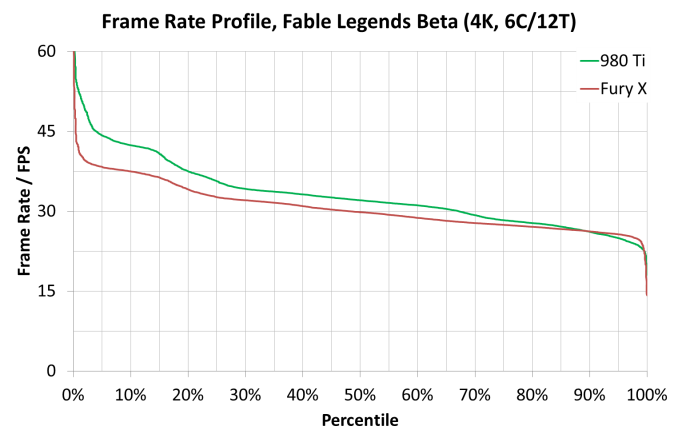

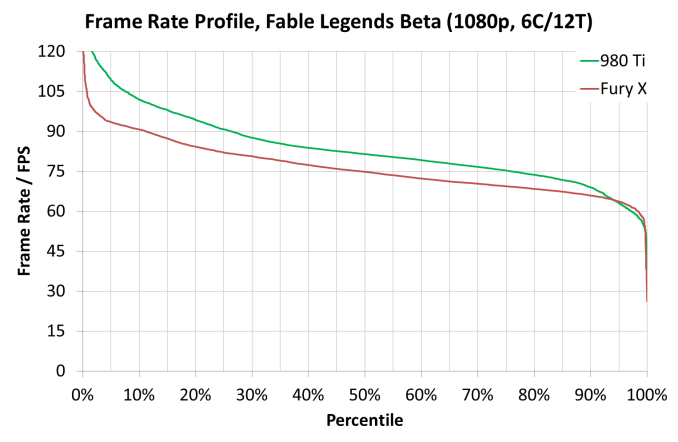

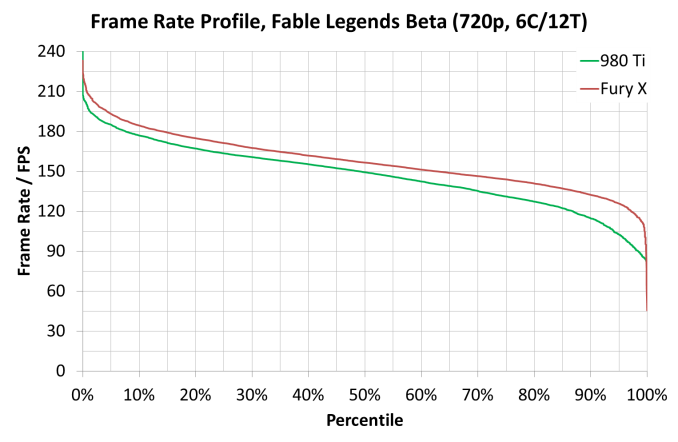

For these tests, the usual rules apply – GTX 980 Ti and Fury X, in our Core i7/i5/i3 configurations at all three resolution/setting combinations (3840x2160 Ultra, 1920x1080 Ultra and 1280x720 Low). Data is given in the form of frame rate profile graphs, similar to those on the last page.
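
As a rough illustration of how a frame rate profile of this kind can be derived, the sketch below converts a list of per-frame render times into frame rates at selected percentiles. It is not the tooling used for this article: the function name `frame_rate_profile`, the millisecond input format, and the chosen percentiles are all assumptions made for the example.

```python
from statistics import quantiles


def frame_rate_profile(frame_times_ms, percentiles=(50, 75, 90, 95, 99)):
    """Return {percentile: fps} for a run of per-frame render times.

    The p-th percentile frame time is the time that p% of frames finish
    within, so the matching frame rate is the one that p% of frames meet
    or exceed. Names and defaults here are hypothetical, not from the article.
    """
    # quantiles(n=100) yields 99 cut points; index p - 1 is the p-th percentile.
    cuts = quantiles(frame_times_ms, n=100, method="inclusive")
    return {p: 1000.0 / cuts[p - 1] for p in percentiles}


if __name__ == "__main__":
    # Synthetic run (assumed data): mostly 10 ms frames with a few 20 ms spikes.
    sample = [10.0] * 90 + [20.0] * 10
    for pct, fps in frame_rate_profile(sample).items():
        print(f"{pct}th percentile: {fps:.1f} FPS")
```

Reading the result at the 99th percentile gives the frame rate that 99% of frames meet or exceed, which is why the tail of a profile says more about stutter than the average does.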

As always, Fable Legends is still in early access preview mode and these results may not be indicative of the final version, but at this point they still provide an interesting comparison.

At 3840x2160, the frame rate profiles for each card look the same no matter the processor used (one could argue that the Fury X is mildly ahead on the i3 at low frame rates), but the 980 Ti has a consistent gap across most of the profile range.

At 1920x1080, the Core i7 model gives a healthy boost to the GTX 980 Ti in high frame rate scenarios, though this seems to be accompanied by an extended drop-off region in high frame rate areas. It is also interesting that with the Core i3, the Fury X results jump up and match the GTX 980 Ti across almost the entire range. This again points to some of the data we saw on the previous page – at 1080p, having fewer cores somehow gave the results a boost due to lighting scenarios.

At 1280x720, as we saw in the initial GPU comparison page on average frame rates, the Fury X has the upper hand in all system configurations. Two other points are noticeable here. First, moving from the Core i5 to the Core i7, especially on the GTX 980 Ti, makes the easy frames go quicker and the harder frames take longer. Second, when we move to the Core i3, performance across the board drops like a stone, indicating a CPU-limited environment. That said, with these cards, 1280x720 at low settings is unlikely to be used anyway.

141 Comments

sr1030nx - Friday, September 25, 2015 - link

Any chance you could run a few tests on the i7 + i5 with hyperthreading off?

WaltC - Saturday, September 26, 2015 - link

(I pasted this comment I made in another forum--didn't feel like repeating myself...;))

Exactly how many "DX12" features are we looking at here...?

Was async-compute turned on/off for the AMD cards--we know it's off for Maxwell, so that goes without saying. Does this game even use async compute? AnandTech says, "The engine itself draws on DX12 explicit features such as ‘asynchronous compute, manual resource barrier tracking, and explicit memory management’ that either allow the application to better take advantage of available hardware or open up options that allow developers to better manage multi-threaded applications and GPU memory resources respectively. "

If that's true then the nVidia drivers for this bench must turn it off--since nVidia admits to not supporting it.

But sadly, not even that description is very informative at all. Uh, I'm not too convinced here about the DX12 part--more like Dx11...this looks suspiciously like nVidia's behind-the-scenes "revenge" setup for their embarrassing admission that Maxwell doesn't support async compute...! (What a setup... It's really cutthroat isn't it?)

Nvidia says its Maxwell chips can support async compute in hardware—"it's just not enabled yet."

Come on... nVidia's pulled this before...;) I remember when they pulled it with 8-bit palletized texture support with the TNT versus 3dfx years ago...they said it was there but not turned on. The product came and went and finally nVidia said, "OOOOps! We tried, but couldn't do it. We feel real bad about that." Yea...;)

Sure thing. Seriously, you don't actually believe that at this late date if Maxwell had async compute that nVidia would have turned it *off* in the drivers, do you? If so, why? They don't say, of course. The denial does not compute, especially since BBurke has been loudly representing that Maxwell supports 100% of d3d12--(except for async compute we know now--and what else, I wonder?)

I've looked at these supposed "DX12" Fable benchmark results on a variety of sites, and unfortunately none of them seem very informative as to what "DX12" features we're actually looking at. Indeed, the whole thing looks like a dog & pony PR frame-rate show for nVidia's benefit. There's almost nothing about DX12 apparent.

We seem to be approaching new lows in the industry...:/

TheJian - Saturday, September 26, 2015 - link

"but both are above the bare minimum of 30 FPS no matter what the CPU."You're kidding right? 30fps AVERAGE is not above the min of 30fps. If you are averaging 30fps, your gaming will suck. While I HOPE they will improve things over time, the point is NEITHER is currently where any of us would like to play at 4K...LOL.

At least we can now stop blabbing about stardock proving AMD wins in DX12 now...ROFL. At best we now have "more work needs to be done before a victor is decided", which everyone should have known already.

ruthan - Sunday, September 27, 2015 - link

Where is mighty DX12 promise about both different GPU with different architecture together? Where is DX11 comparison, maybe numbers are so nice?

If it will be not delivered real soon, we could easily stay with OpenGL and hope in Vulkan destiny.. and there is also no reason to upgrade to Windows 10, we could probably survive with Win7 64b. before Android x86 or maybe, maybe other Linux take a lead.

Mugur - Monday, September 28, 2015 - link

I see some people complaining because there wasn't a DX11 vs. DX12 comparison, like in the Ashes benchmark. I hope everybody realizes that this is completely useless. Why should AMD optimize its cards for DX11 in a DX12 enabled game when the cards are supporting DX12 and DX12 will definitely be better for AMD in an apples to apples comparison?

Even in Ashes, it's stupid to compare the DX11 path with the DX12 one for a AMD card, since AMD only optimized for DX12 there.

Also, generally speaking, DX11 and DX12 visuals in a game may not be the same and that's another reason why you cannot draw any conclusion from such comparison (besides a sanity check maybe). That's why, we will definitely see, on some games, that in DX12 the cards will perform poorer that in DX11, unless the target graphics is exactly the same.

All in all, I'm happy that DX12 brings at least a low cpu overhead. The fact that AMD benefits more from this is obvious, but that's just a "collateral" effect IMHO. I doubt that it's only because their DX11 drivers were poor, it must be also a consequence of their architecture (the high cpu overhead, I mean).

I also hope that Windows 10 adoption will be good, because, at this point, that's the only reason for a developer not to go full DX12 for a triple A title.

Oxford Guy - Monday, September 28, 2015 - link

"According to Kollock, the idea that there’s some break between Oxide Games and Nvidia is fundamentally incorrect. He (or she) describes the situation as follows: 'I believe the initial confusion was because Nvidia PR was putting pressure on us to disable certain settings in the benchmark, when we refused, I think they took it a little too personally.'Kollock goes on to state that Oxide has been working quite closely with Nvidia, particularly over this past summer. According to them, Nvidia was 'actually a far more active collaborator over the summer then AMD was, if you judged from email traffic and code-checkins, you’d draw the conclusion we were working closer with Nvidia rather than AMD ;)'

According to Kollock, the only vendor-specific code in Ashes was implemented for Nvidia, because attempting to use asynchronous compute under DX12 with an Nvidia card currently causes tremendous performance problems."

"Oxide has published a lengthy blog explaining its position, and dismissing Nvidia's implication that the current build of Ashes of the Singularity is alpha code, likely to receive significant optimisations before release:

'It should not be considered that because the game is not yet publicly out, it's not a legitimate test,' Oxide's Dan Baker said. 'While there are still optimisations to be had, the Ashes of the Singularity in its pre-beta stage is as or more optimised as most released games.'"

joshjaks - Monday, September 28, 2015 - link

I really like the CPU scaling tests that are done, even though it looks like multiple cores aren't hugely beneficial at the moment. I'm wondering though, do you think there could be the possibility of a DirectX 12 test that focuses on FX CPUs versus i3, i5, and i7s? Being an 8350 owner myself, it'd be nice to know if FX made any improvements in DirectX 12 as well. (Not holding my breath though)

Oxford Guy - Monday, September 28, 2015 - link

"Being an 8350 owner myself, it'd be nice to know if FX made any improvements in DirectX 12 as well. (Not holding my breath though)."PCPER made the effort to actually do this testing:

With the FX 8370 in Ashes: "NVIDIA’s GTX 980 sees consistent DX11 to DX12 scaling of about 13-16% while AMD’s R9 390X scales by 50%. This puts the R9 390X ahead of the GTX 980, a much more expensive GPU based on today’s sale prices."

FX 8370 and 1600p (in frames per second)

R9 390X DX 12 high: 36.4

GTX 980 DX 12 high: 34.3

R9 390X DX 11 high: 23.8

GTX 980 DX 11 high: 31.1

with i7 6700K and 1600p

R9 390X DX 12 high: 48.7

GTX 980 DX 12 high: 42.3

R9 390X DX 11 high: 38.1

GTX 980 DX 11 high: 48.1

Oxford Guy - Monday, September 28, 2015 - link

So, with typical 4.5 GHz overclocking that chip should be able to match the i7 6700K's performance with a 390X at 1600p under DX11, using a 390X with DX12.

That's quite a value gain, considering the price difference. I got an 8320E with an 8 phase motherboard for a total of $133.75 from Microcenter. Coupled with a $20 cooler (Zalman sale via slickdeals) which needed some extra fans, I was able to get it comfortably to 4.5 GHz.

The drawback in the PCPER results is that an i3 4330 is actually faster under DX12 with Ashes and the 390X than the 8370 is. It made much bigger gains under DX12 than the 8370 did.

i3 DX 12: 40.6

DX 11: 28.0

Ashes is a real-time strategy which tends to be CPU-heavy so it seems odd that an i3 could outperform an 8 core chip.

Iamthebeast - Monday, September 28, 2015 - link

The game is probably not utilizing all cores. The funny thing with the fable benchmark is once you hit 1080p the two core CPUs perform on par with their larger core counterparts and beat them in 4k. Makes me wonder if this trend is going to continue. The funny thing for me is I thought dx12 was going to make using more cores easier but from the two tests we have so far it actually looks like the GPU is taking a lot more of the load away from the CPU, like cutting it out of the loop.

If this trend continues it actually looks like the best processor for gaming might be the Pentium g3580