The Western Digital WD Black 3D NAND SSD Review: EVO Meets Its Match

by Ganesh T S & Billy Tallis on April 5, 2018 9:45 AM EST

Posted in: SSDs, Storage, Western Digital, SanDisk, NVMe, Extreme Pro, WD Black

AnandTech Storage Bench - Light

Our Light storage test has relatively more sequential accesses and lower queue depths than The Destroyer or the Heavy test, and it's by far the shortest test overall. It's based largely on applications that aren't highly dependent on storage performance, so this is a test more of application launch times and file load times. This test can be seen as the sum of all the little delays in daily usage, but with the idle times trimmed to 25ms it takes less than half an hour to run. Details of the Light test can be found here. As with the ATSB Heavy test, this test is run with the drive both freshly erased and empty, and after filling the drive with sequential writes.
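To make the idle-time trimming concrete, here is a minimal Python sketch of how gaps in a recorded I/O trace could be capped at 25ms before playback. The trace layout (a list of `(start_time, duration)` tuples in seconds) and the `trim_idle` helper are assumptions made for illustration; this is not the actual tooling behind the ATSB tests.

```python
from typing import List, Tuple

IDLE_CAP_S = 0.025  # 25 ms cap on idle time, as described in the text


def trim_idle(trace: List[Tuple[float, float]],
              cap: float = IDLE_CAP_S) -> List[Tuple[float, float]]:
    """Shorten every idle gap longer than `cap` down to exactly `cap`.

    `trace` is a hypothetical format: (start_time, duration) per I/O,
    sorted by start time, all values in seconds.
    """
    trimmed = []
    shift = 0.0        # total idle time removed so far
    busy_until = None  # latest completion time seen, in original trace time
    for start, duration in trace:
        if busy_until is not None:
            gap = start - busy_until  # time with no I/O in flight
            if gap > cap:
                shift += gap - cap
        trimmed.append((start - shift, duration))
        end = start + duration
        busy_until = end if busy_until is None else max(busy_until, end)
    return trimmed


if __name__ == "__main__":
    # Nearly a full second of idle time between the first two I/Os
    # shrinks to 25 ms; the short 9 ms gap afterwards is left alone.
    sample = [(0.0, 0.001), (1.0, 0.001), (1.010, 0.002)]
    for start, duration in trim_idle(sample):
        print(f"start={start:.3f}s duration={duration:.3f}s")
```

Gaps shorter than the cap are preserved, so the drive still gets brief pauses for background work, while long stretches of user inactivity no longer dominate the run time.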

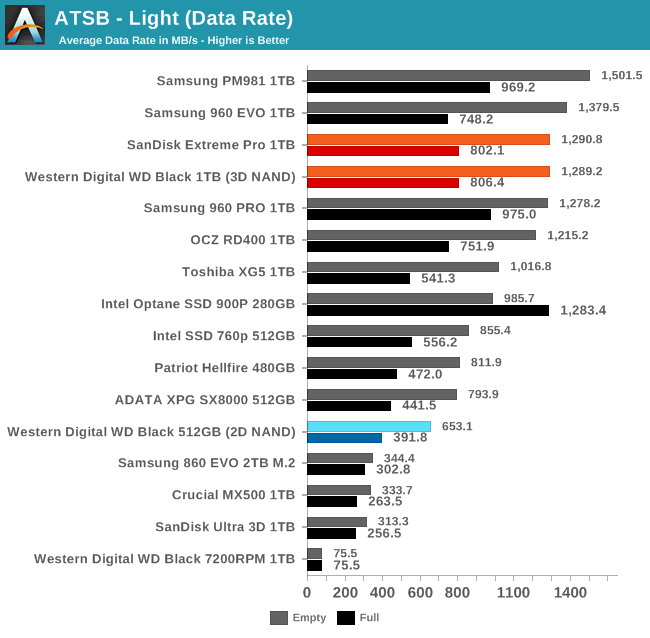

The WD Black's average data rates on the Light test are slightly slower than the Samsung 960 EVO when the test is run on an empty drive, and a bit faster when the drive is full. The Samsung PM981 is the only drive that has a clear lead in both cases, and even then it isn't a very big margin. The worst-case performance here from the new WD Black is substantially faster than the best-case from last year's WD Black.

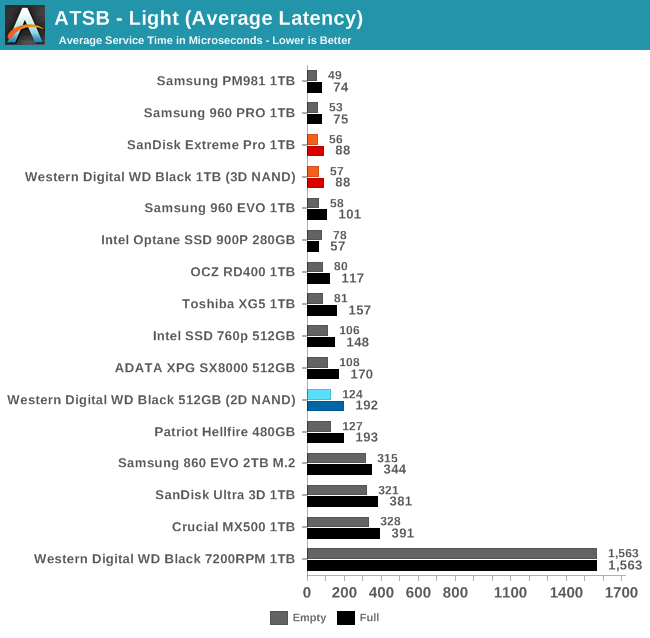

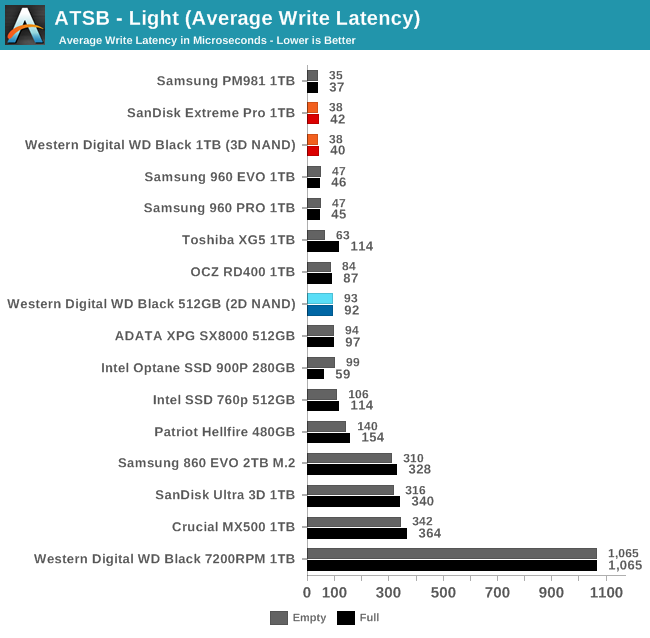

The average latencies from the WD Black during the Light test are as low as any SSD offers. The 99th percentile latencies are not quite as low as those of Samsung's best drives, although the WD Black's full-drive score is better than the 960 EVO's.
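For readers unfamiliar with the two latency metrics, the following Python sketch shows how an average and a 99th percentile could be derived from a list of per-I/O latencies. It uses the nearest-rank percentile definition, which is an assumption; the charts may be produced with a different method, and the sample values are invented purely for illustration.

```python
import math
from statistics import mean


def percentile_nearest_rank(samples, pct):
    """Return the pct-th percentile of samples using the nearest-rank method."""
    if not samples:
        raise ValueError("need at least one latency sample")
    ordered = sorted(samples)
    rank = math.ceil(pct * len(ordered) / 100)  # 1-based rank
    return ordered[max(rank, 1) - 1]


def summarize(latencies_us):
    """Average and 99th percentile of a list of latencies in microseconds."""
    return {
        "mean_us": mean(latencies_us),
        "p99_us": percentile_nearest_rank(latencies_us, 99),
    }


if __name__ == "__main__":
    # Invented data: 98 fast operations and 2 slow outliers.
    latencies = [100] * 98 + [5000] * 2
    print(summarize(latencies))
```

With this invented data the mean works out to 198µs while the 99th percentile is 5000µs, which is why the two metrics are charted separately: a handful of slow operations barely moves the average but defines the tail.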

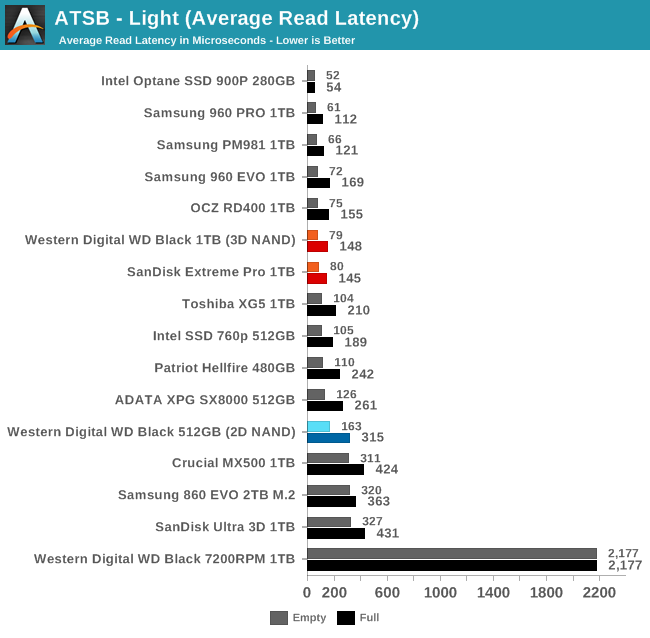

There are quite a few SSDs with average read latency scores that are close to or slightly better than the WD Black, and even the low-end NVMe SSDs keep the average read latency down to a fraction of a millisecond on the Light test. The average write latencies from the WD Black are essentially tied for first place with Samsung's drives.

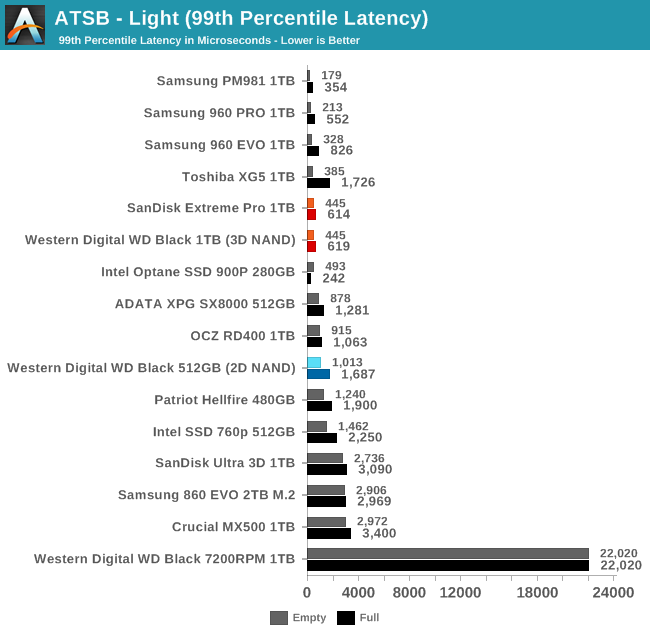

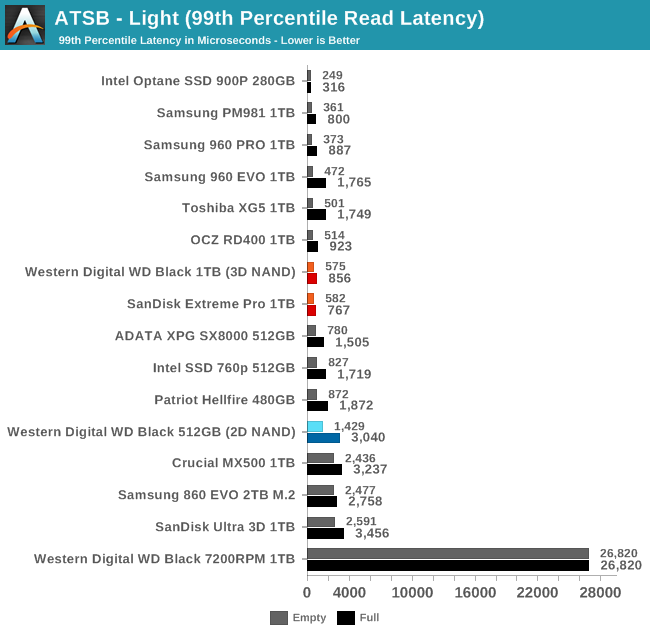

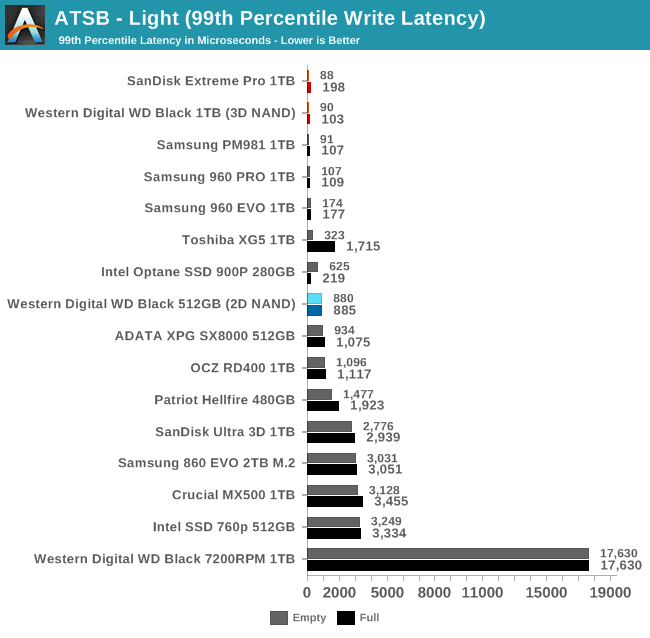

The WD Black offers great 99th percentile write latency on the Light test as its SLC cache never fills. The 99th percentile read latency doesn't rank quite as high, but the full-drive score is very good.

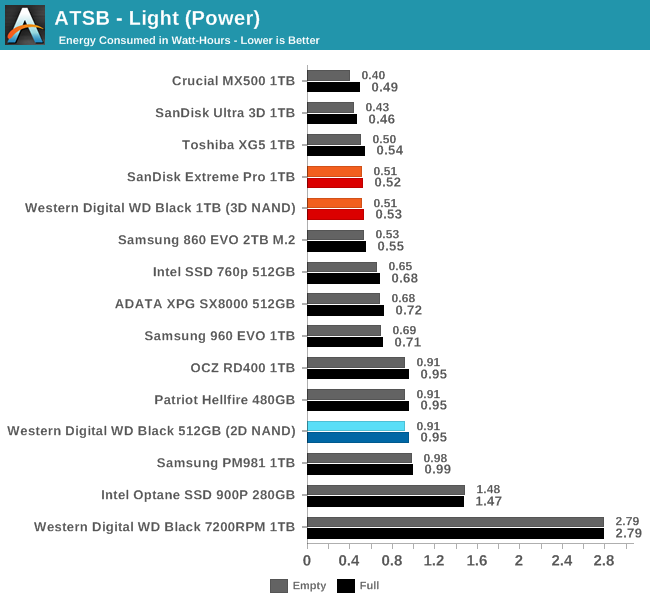

As with the Heavy test, the only NVMe SSD we've tested that can match the WD Black's power efficiency is the Toshiba XG5. These drives get the job done much faster than a SATA drive without using any more energy.
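The energy comparison comes down to simple arithmetic: energy is average power multiplied by run time, so a drive that draws more power can still use no more energy if it finishes sooner. The Python sketch below illustrates this with made-up round numbers; the wattages and durations are hypothetical and are not measurements from this review.

```python
def energy_joules(avg_power_w: float, duration_s: float) -> float:
    """Energy used over a run: average power (watts) times duration (seconds)."""
    return avg_power_w * duration_s


if __name__ == "__main__":
    # Hypothetical figures chosen only to illustrate the trade-off.
    nvme = energy_joules(avg_power_w=3.0, duration_s=100.0)  # faster, higher draw
    sata = energy_joules(avg_power_w=1.5, duration_s=200.0)  # slower, lower draw
    print(f"NVMe: {nvme:.0f} J, SATA: {sata:.0f} J")
```

In this invented case both drives consume 300 J, even though the NVMe drive draws twice the power while it is active.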

69 Comments

Chaitanya - Thursday, April 5, 2018

Nice to see some good competition to Samsung products in the SSD space. Would like to see durability testing on these drives.

HStewart - Thursday, April 5, 2018

Yes, it is nice to have competition in this area, and the important thing to notice here is that a long-time disk drive manufacturer is changing its technology to keep up with changes in storage technology.

Samus - Thursday, April 5, 2018

Looks like WD's purchase of SanDisk is showing some payoff. If only Toshiba had taken advantage of the in-house talent at OCZ (which had purchased Indilinx). The Barefoot controller showed a lot of promise and could have easily been updated to support low power states and TLC NAND. But they shelved it. I don't really know why Toshiba bought OCZ.

haukionkannel - Friday, April 6, 2018

Indeed! Samsung held performance supremacy for too long, and that let the company raise its prices (a natural development, though). Hopefully this better situation helps us customers within a reasonable time frame. There has been too much bad news for consumers over the last few years when it comes to prices.

XabanakFanatik - Thursday, April 5, 2018

Whatever happened to performance consistency testing?

Billy Tallis - Thursday, April 5, 2018

The steady state QD32 random write test doesn't say anything meaningful about how modern SSDs will behave on real client workloads. It used to be a half-decent test before everything was TLC with SLC caching and the potential for thermal throttling on M.2 NVMe drives. Now, it's impossible to run a sustained workload for an hour and claim that it tells you something about how your drive will handle a bursty real world workload. The only purpose that benchmark can serve today is to tell you how suitable a consumer drive is for (ab)use as an enterprise drive.

iter - Thursday, April 5, 2018

Most of the tests don't say anything meaningful about "how modern SSDs will behave on real client workloads". You can spend 400% more money on storage that will only get you a 4% performance improvement in real world tasks.

So why not omit synthetic tests altogether while you are at it?

Billy Tallis - Thursday, April 5, 2018

You're alluding to the difference between storage performance and whole system/application performance. A storage benchmark doesn't necessarily give you a direct measurement of whole system or application performance, but done properly it will tell you about how the choice of an SSD will affect the portion of your workload that is storage-dependent. Much like Amdahl's law, speeding up storage doesn't affect the non-storage bottlenecks in your workload.

That's not the problem with the steady-state random write test. The problem with the steady state random write test is that real world usage doesn't put the drive in steady state, and the steady state behavior is completely different from the behavior when writing in bursts to the SLC cache. So that benchmark isn't even applicable to the 5% or 1% of your desktop usage that is spent waiting on storage.

On the other hand, I have tried to ensure that the synthetic benchmarks I include actually are representative of real-world client storage workloads, by focusing primarily on low queue depths and limiting the benchmark duration to realistic quantities of data transferred and giving the drive idle time instead of running everything back to back. Synthetic benchmarks don't have to be the misleading marketing tests designed to produce the biggest numbers possible.

MrSpadge - Thursday, April 5, 2018

Good answer, Billy. It won't please everyone here, but that's impossible anyway.

iter - Thursday, April 5, 2018

People do want to see how much time it takes before cache gives out. Don't presume to know what all people do with their systems.

As I mentioned 99% of the tests are already useless when it comes to indicating overall system performance. 99% of the people don't need anything above mainstream SATA SSD. So your point on excluding that one test is rather moot.

All in all, it seems you are intentionally hiding the weakness of certain products. Not cool. Run the tests, post the numbers, that's what you get paid for, I don't think it is unreasonable to expect that you do your job. Two people pointed out the absence of that test, which is two more than those who explicitly stated they don't care about it, much less have anything against it. Statistically speaking, the test is of interest, and I highly doubt it will kill you to include it.