The Kingston DC500 Series Enterprise SATA SSDs Review: Making a Name In a Commodity Market

by Billy Tallis on June 25, 2019 8:00 AM EST

Posted in: SSDs, Storage, Kingston, SATA, Enterprise, Phison, Enterprise SSDs, 3D TLC, PS3112-S12

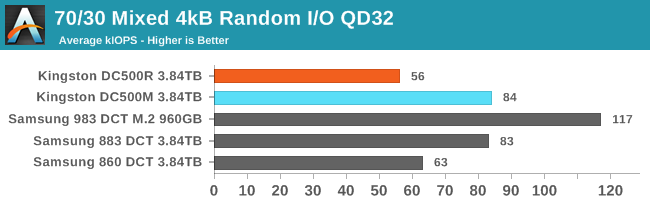

Mixed Random Performance

Real-world storage workloads usually aren't pure reads or writes but a mix of both. It is completely impractical to test and graph the full range of possible mixed I/O workloads—varying the proportion of reads vs writes, sequential vs random and differing block sizes leads to far too many configurations. Instead, we're going to focus on just a few scenarios that are most commonly referred to by vendors, when they provide a mixed I/O performance specification at all. We tested a range of 4kB random read/write mixes at queue depth 32, the maximum supported by SATA SSDs. This gives us a good picture of the maximum throughput these drives can sustain for mixed random I/O, but in many cases the queue depth will be far higher than necessary, so we can't draw meaningful conclusions about latency from this test. As with our tests of pure random reads or writes, we are using 32 threads each issuing one read or write request at a time. This spreads the work over many CPU cores, and for NVMe drives it also spreads the I/O across the drive's several queues.

The full range of read/write mixes is graphed below, but we'll primarily focus on the 70% read, 30% write case that is a fairly common stand-in for moderately read-heavy mixed workloads.
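For readers who want to approximate this sweep themselves, the sketch below builds command lines for fio, a widely used I/O benchmarking tool. The use of fio, the 10% step between mixes, the 60-second runtime, the `psync` I/O engine and the `/dev/sdX` device path are all assumptions for illustration; the text above only specifies 4kB random I/O, a total queue depth of 32, and 32 threads each issuing one request at a time.

```python
def fio_mixed_random_args(read_pct, device="/dev/sdX", runtime_s=60):
    """Return an fio argument list for one 4kB random read/write mix.

    read_pct is the percentage of reads; the rest are writes.
    device and runtime_s are placeholder assumptions, not values from the review.
    """
    if not 0 <= read_pct <= 100:
        raise ValueError("read_pct must be between 0 and 100")
    return [
        "fio",
        f"--name=mixed-rand-{read_pct}",
        f"--filename={device}",
        "--rw=randrw",                # random reads and writes interleaved
        f"--rwmixread={read_pct}",    # share of the mix that is reads
        "--bs=4k",
        "--ioengine=psync",           # synchronous I/O: one request in flight per thread
        "--iodepth=1",
        "--numjobs=32",               # 32 workers x QD1 = total queue depth 32
        "--thread",                   # run the workers as threads
        "--direct=1",                 # bypass the page cache
        "--time_based",
        f"--runtime={runtime_s}",
        "--group_reporting",
    ]


if __name__ == "__main__":
    # Sweep from pure reads to pure writes in 10% steps (step size is an assumption).
    for read_pct in range(100, -1, -10):
        print(" ".join(fio_mixed_random_args(read_pct)))
```

Running any of these against a raw device overwrites its contents, so the printed commands should only be pointed at a drive that holds no data.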

Chart views: Power Efficiency in MB/s/W, Average Power in W

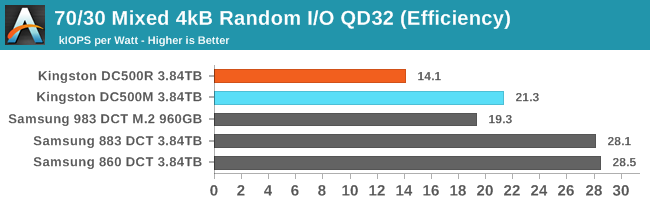

The Kingston and Samsung SATA drives are rather evenly matched for performance with a 70% read/30% write random IO workload: the DC500M is tied with the 883 DCT, and the DC500R is a bit slower than the 860 DCT. The two Kingston drives use the same amount of power, so the slower DC500R has a much worse efficiency score. The two Samsung drives have roughly the same great efficiency score; the slower 860 DCT also uses less power.
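The efficiency score is throughput divided by average power, which is why two drives drawing the same power can land far apart. A minimal sketch, using made-up numbers rather than the measured results from the charts:

```python
def power_efficiency(throughput_mb_s, avg_power_w):
    """Return efficiency in MB/s per watt."""
    if avg_power_w <= 0:
        raise ValueError("average power must be positive")
    return throughput_mb_s / avg_power_w


# Hypothetical drives for illustration only; these are not figures from the review.
same_power_w = 3.0
faster = power_efficiency(240.0, same_power_w)   # 80.0 MB/s/W
slower = power_efficiency(180.0, same_power_w)   # 60.0 MB/s/W
print(f"faster drive: {faster:.1f} MB/s/W, slower drive: {slower:.1f} MB/s/W")
```

At equal power, the efficiency gap is exactly the throughput gap, so the slower of two otherwise similar drives is penalized in proportion.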


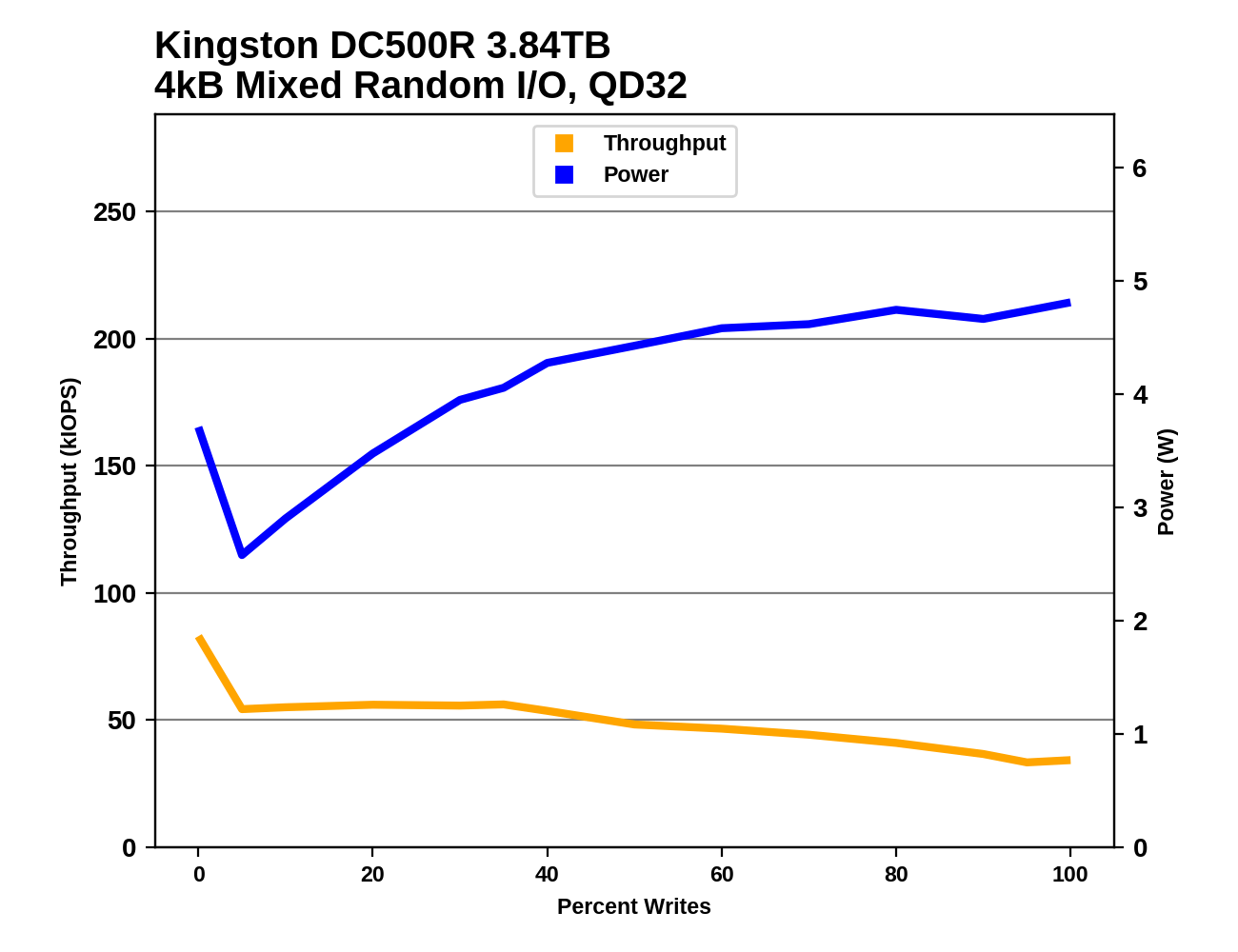

The Kingston DC500M and Samsung 883 DCT perform similarly for read-heavy mixes, but once the workload is more than about 30% writes, the Samsung falls behind. Their power consumption is very different: the Samsung plateaus at just over 3W while the DC500M starts at 3W and steadily climbs to over 5W as more writes are added to the mix.

Between the DC500R and the 860 DCT, the Samsung drive has better performance until the workload has shifted to be much more write-heavy than either drive is intended for. The Samsung drive's power consumption also never gets as high as the lowest power draw recorded from the DC500R during this test.

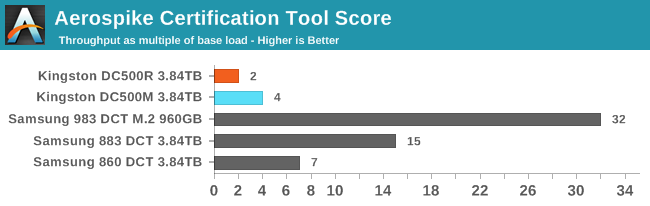

Aerospike Certification Tool

Aerospike is a high-performance NoSQL database designed for use with solid state storage. The developers of Aerospike provide the Aerospike Certification Tool (ACT), a benchmark that emulates the typical storage workload generated by the Aerospike database. This workload consists of a mix of large-block 128kB reads and writes, and small 1.5kB reads. When the ACT was initially released back in the early days of SATA SSDs, the baseline workload was defined to consist of 2000 reads per second and 1000 writes per second. A drive is considered to pass the test if it meets the following latency criteria:

- fewer than 5% of transactions exceed 1ms

- fewer than 1% of transactions exceed 8ms

- fewer than 0.1% of transactions exceed 64ms
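The three criteria above can be expressed as a simple check. This is a minimal sketch that assumes the latencies are available as a flat list of per-transaction values in milliseconds; ACT itself reports latency histograms, and the function and constant names here are hypothetical.

```python
# (latency threshold in ms, limit on transactions exceeding it, in parts per thousand)
ACT_LIMITS = (
    (1.0, 50),    # fewer than 5% may exceed 1 ms
    (8.0, 10),    # fewer than 1% may exceed 8 ms
    (64.0, 1),    # fewer than 0.1% may exceed 64 ms
)


def passes_act_latency(latencies_ms):
    """Return True if the sample satisfies all three latency criteria."""
    total = len(latencies_ms)
    if total == 0:
        raise ValueError("need at least one latency sample")
    for threshold_ms, limit_permille in ACT_LIMITS:
        exceeding = sum(1 for latency in latencies_ms if latency > threshold_ms)
        # "Fewer than" is strict, so reaching the limit exactly is a failure.
        # Integer arithmetic avoids floating-point rounding at the boundary.
        if exceeding * 1000 >= limit_permille * total:
            return False
    return True
```

A single very slow transaction counts against all three thresholds at once, which is why a drive with occasional long garbage collection pauses can fail even when its average latency looks fine.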

Drives can be scored based on the highest throughput they can sustain while satisfying the latency QoS requirements. Scores are normalized relative to the baseline 1x workload, so a score of 50 indicates 100,000 reads per second and 50,000 writes per second. Since this test uses fixed IO rates, the queue depth experienced by each drive will depend on its latency, and can fluctuate during the test run if the drive slows down temporarily for a garbage collection cycle. The test will give up early if it detects the queue depths growing excessively, or if the large block IO threads can't keep up with the random reads.
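The normalization is a straight multiplication of the 1x baseline. A small sketch, with hypothetical names, that converts a score into the I/O rates it represents:

```python
BASELINE_READS_PER_S = 2000    # 1x baseline from the original ACT definition
BASELINE_WRITES_PER_S = 1000


def act_rates(score):
    """Return (reads per second, writes per second) for a given ACT score."""
    if score <= 0:
        raise ValueError("score must be positive")
    return score * BASELINE_READS_PER_S, score * BASELINE_WRITES_PER_S


reads, writes = act_rates(50)
print(reads, writes)   # 100000 50000
```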

We used the default settings for queue and thread counts and did not manually constrain the benchmark to a single NUMA node, so this test produced a total of 64 threads scheduled across all 72 virtual (36 physical) cores.

The usual runtime for ACT is 24 hours, which makes determining a drive's throughput limit a long process. For fast NVMe SSDs, this is far longer than necessary for drives to reach steady-state. In order to find the maximum rate at which a drive can pass the test, we start at an unsustainably high rate (at least 150x) and incrementally reduce the rate until the test can run for a full hour, and then decrease the rate further if necessary to get the drive under the latency limits.
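The search procedure can be sketched as a simple downward scan. Everything here is illustrative: `run_act` is a hypothetical callable that runs one hour-long ACT pass at a given multiple of the baseline and reports whether it finished and whether it met the latency limits, and the step size of 1x is an assumption, since the size of each reduction is not stated.

```python
from typing import Callable, NamedTuple, Optional


class ActResult(NamedTuple):
    completed: bool       # the run lasted the full hour without giving up early
    within_limits: bool   # the run met all three latency criteria


def find_max_passing_rate(
    run_act: Callable[[int], ActResult],
    start: int = 150,
    step: int = 1,
    floor: int = 1,
) -> Optional[int]:
    """Lower the rate from `start` until a run both completes and meets the limits.

    Returns the first (highest) passing multiplier, or None if nothing passes.
    """
    rate = start
    while rate >= floor:
        result = run_act(rate)
        if result.completed and result.within_limits:
            return rate
        rate -= step
    return None


def fake_drive(rate: int) -> ActResult:
    """Stand-in drive with made-up limits: finishes at up to 10x, meets latency at up to 4x."""
    return ActResult(completed=rate <= 10, within_limits=rate <= 4)


print(find_max_passing_rate(fake_drive))   # 4
```

With real hour-long runs a linear scan from 150x is slow for drives that only pass at single-digit rates, so larger steps at the start would be a reasonable refinement.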

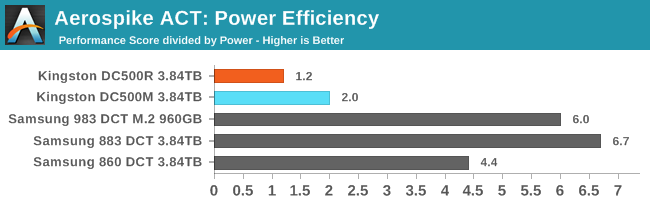

The Kingston drives don't handle the Aerospike test well at all. The DC500R can only pass the test at twice the throughput of a baseline standard that was set years ago, and the DC500M's score of 4x the base load is still much worse than even the Samsung 860 DCT. The Kingston drives can provide comparable throughput to the Samsung SATA drives (as seen on the 70/30 test above), but they don't pass the strict latency QoS requirements imposed by the Aerospike test. This test is more write-intensive than the above 70/30 test and is definitely beyond what the DC500R is intended to be used for, but the DC500M should be able to do better.

Chart views: Power Efficiency, Average Power in W

The Kingston drives don't draw any more power than the Samsung drives for once, but since they are running at much lower throughput their efficiency scores are a fraction of what the Samsung drives earn.

28 Comments


Umer - Tuesday, June 25, 2019 - link

I know it may not be a huge deal to many, but Kingston, as a brand, left a really sour taste in my mouth after V300 fiasco since I bought those SSDs in a bulk for a new build back then.

Death666Angel - Tuesday, June 25, 2019 - link

Let's put it this way: they have to be quite a bit cheaper than the nearest, known competitor (Crucial, Corsair, Adata, Samsung, Intel...) to be considered as a purchase by me.

mharris127 - Thursday, July 25, 2019 - link

I don't expect Kingston to be any less expensive than ADATA as Kingston serves the mid price market and ADATA the low priced one. Samsung is a supposed premium product with a premium price tag to match. I haven't used a Kingston SSD but have some of their other products and haven't had a problem with any of them that wasn't caused by me. As far as picking my next SSD, I have had one ADATA SSD fail, they replaced it once I filled out some paperwork, they sent an RMA and I sent the defective drive back to them, the second one and a third one I bought a couple of months ago are working fine so far. My Crucial SSDs work fine. I have a Team Group SSD that works wonderfully after a year of service. I think my money is on either Crucial or Team Group the next time I buy that product.

Notmyusualid - Tuesday, June 25, 2019 - link

...wasn't it OCZ that released the 'worst known' SSD?

I had almost forgotten about those days.

I believe the only customers that got any value out of them were those on PERC and other known RAID controllers, which were not writing < 128kB blocks - and I wasn't one of them. I RMA'd & insta-sold the return, and bought X25M.

What a 'mare that was.

Dragonstongue - Tuesday, June 25, 2019 - link

Sandforce controller was ahead of its time, not in the most positive ways all the time either...

I had an Agility 3 60gb, used for just over 2 years for my system, mom used now an additional over 2.5 years, however it was either starting to have issues, or the way mom was using caused it to "forget" things now and then.

I fixed with a crucial mx100 or 200 (forget LOL) that still has over 90% life either way, the Agility 3 was "warning" though still showed as over 75% life left (christmas '18-19) .. def massive speed up by swapping to more modern as well as doing some cleaning for it..

SSD have come A LONG way in a short amount of time, sadly the producers of them via memory/controller/flash are the problem bad drivers, poor performance when should not, not work in every system when should etc.

thomasg - Tuesday, June 25, 2019 - link

Interestingly, I still have one of the OCZs with the first pre-production SandForce, the Vertex Limited 100 GB, which has been running for many years at high throughput and many many Terabytes of Writes.

Still works perfectly.

I'm not sure I remember correctly, but I think the major issues started showing up for the production SandForce model that was used later on.

Chloiber - Tuesday, June 25, 2019 - link

I still have a Supertalent Ultadrive MX 32GB - not working properly anymore, but I don't even remember how many firmware updates I put that one through :)

They just had really bad, buggy firmwares throughout.

leexgx - Wednesday, June 26, 2019 - link

main problem with sandforce is the compressed layer and the nand layer was not ever managed correctly with made trim ineffective and GC was having to be used on new writes resulting in high access times and half speed writes after 1 full drive of writes , you note did not have to be filled.

if it was a 240gb ssd all you had to do to slow it down permanently was write over time 240-300gb (due to compression to get an actual full 1DWP) of data only way to reset it was secure erase (unsure if that was ever fixed on the SandForce SF3000 , seagate enterprise SSDs and ironwolf nas SSD)

other issue which was more or less fixed was rare BSOD (more or less 2 systems i managed did not like them) or well the drive eating it self and becoming a 0Mb drive (extremely rare but did happen) the 0mb bug i think was fixed if you owned a intel drive but the BSOD fix was limited success

Gunbuster - Tuesday, June 25, 2019 - link

Indeed. Not going to support a company with a track record of shady practices.

kpxgq - Tuesday, June 25, 2019 - link

The V300 fiasco is nothing compared to the Crucial V4 fiasco... quite possibly the worst SSD drives ever made right along with the early OCZ Vertex drives. Over half the ones I bought for a project just completely stopped working a month in. I bought them trusting the Crucial brand name alone.