Synology DS2015xs Review: An ARM-based 10G NAS

by Ganesh T S on February 27, 2015 8:20 AM EST - Posted in NAS, Storage, Arm, 10G Ethernet, Synology, Enterprise

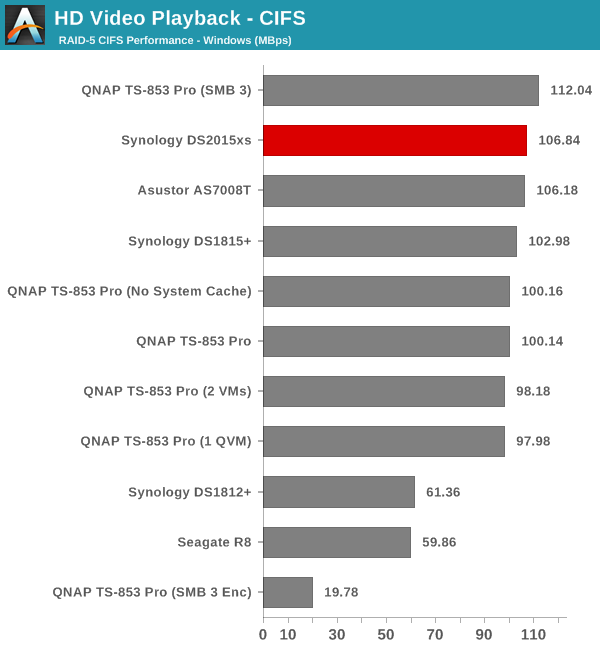

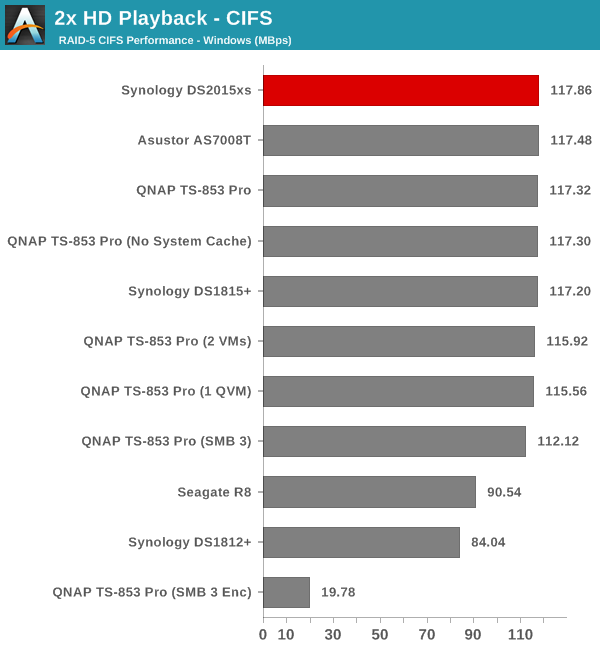

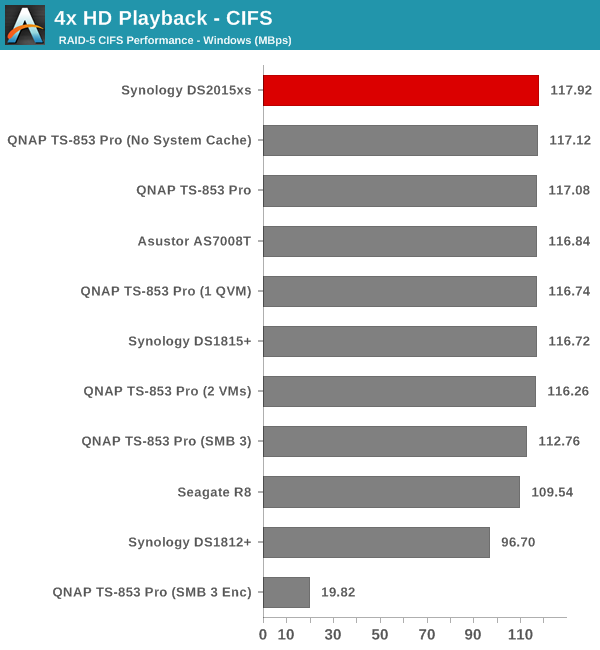

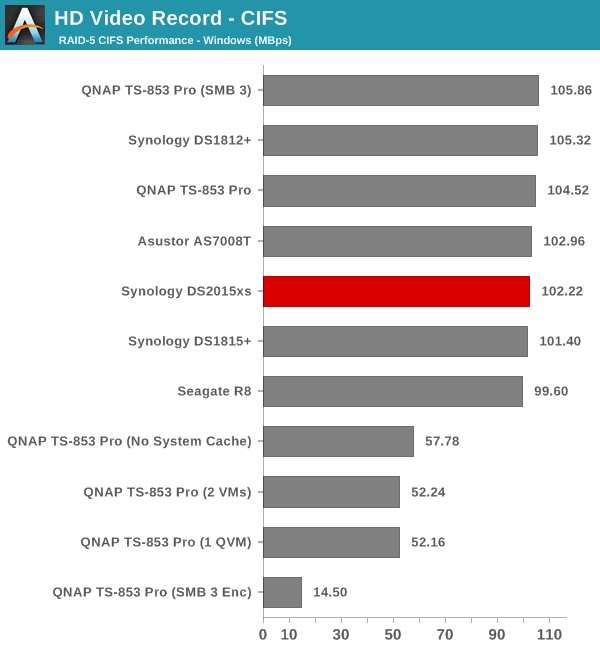

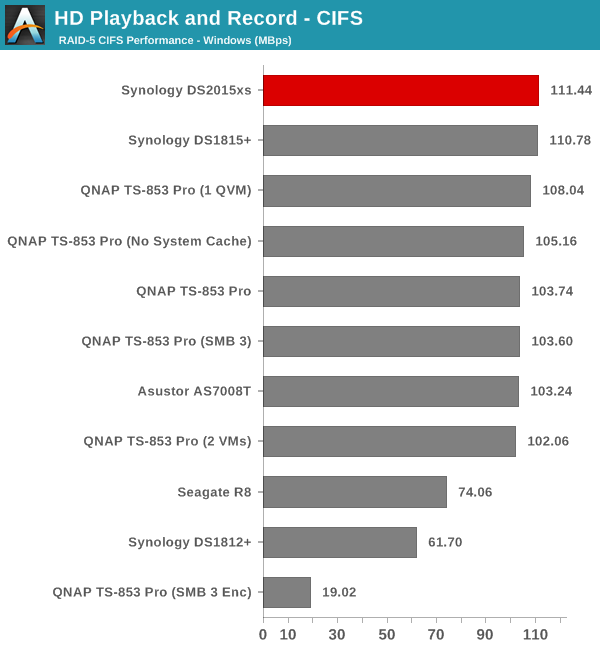

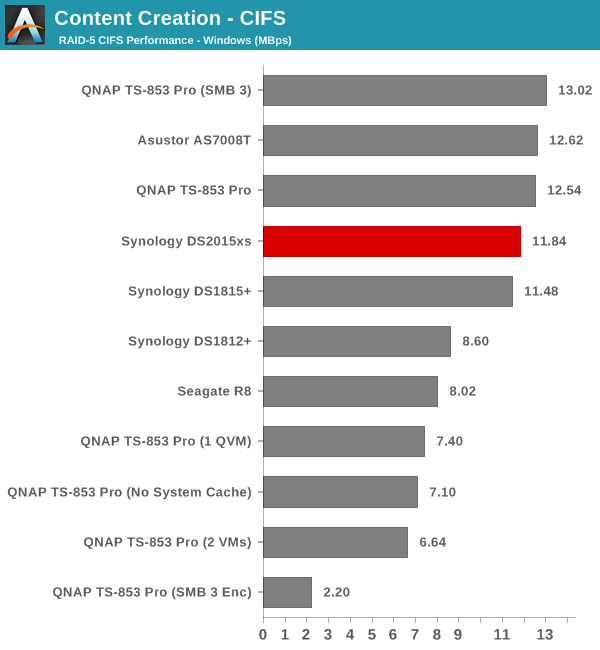

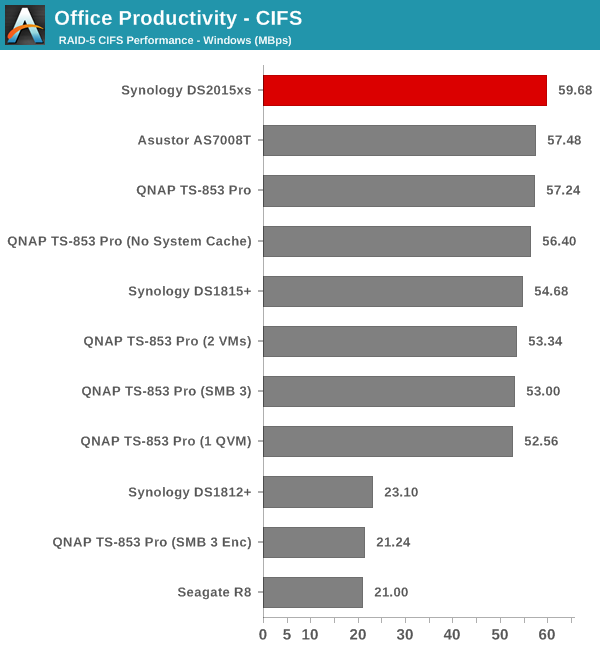

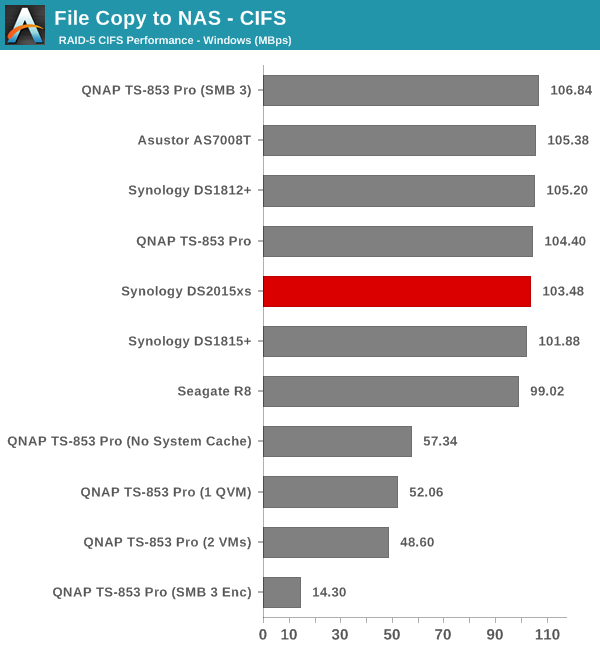

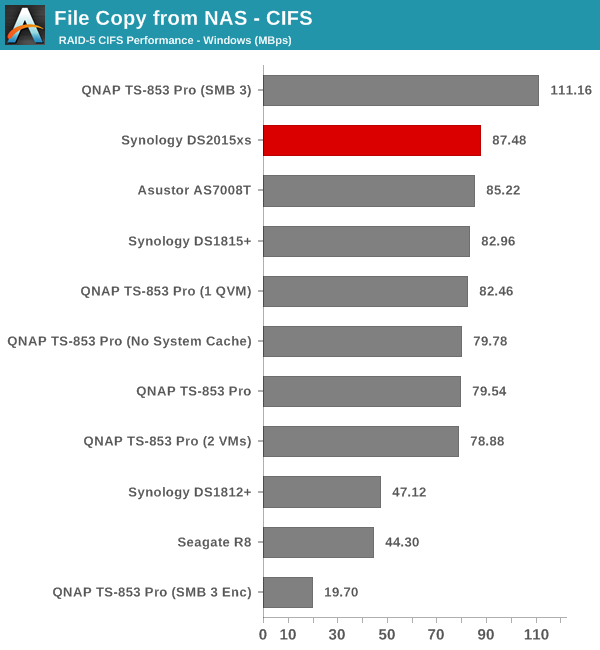

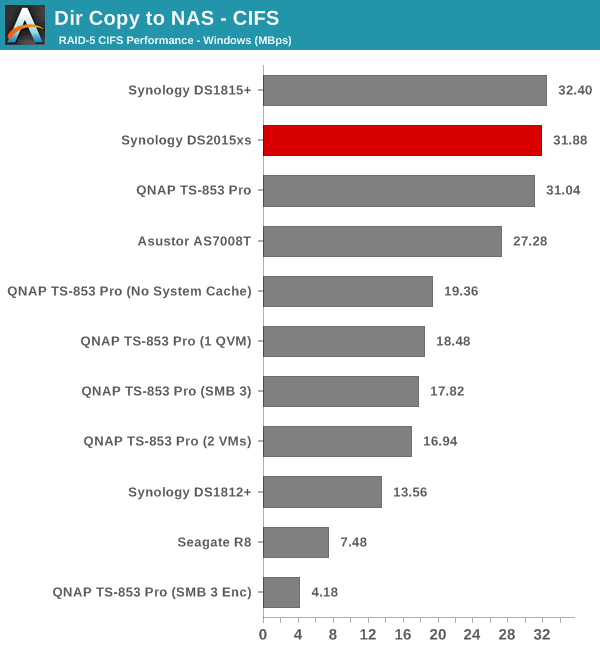

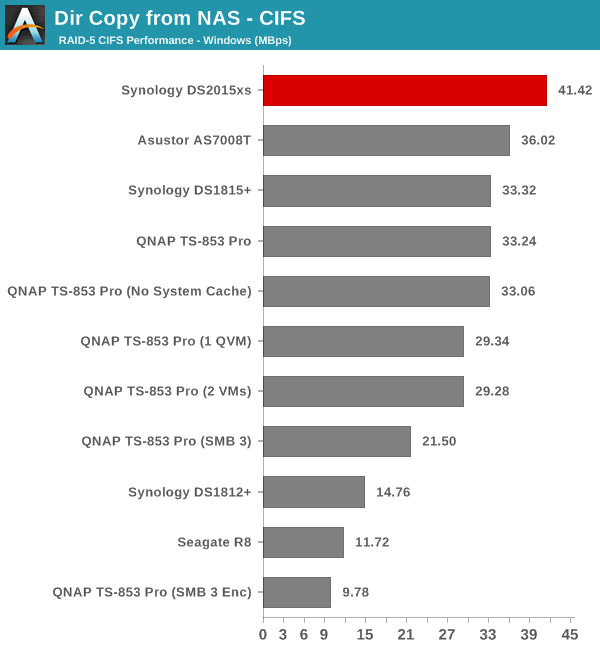

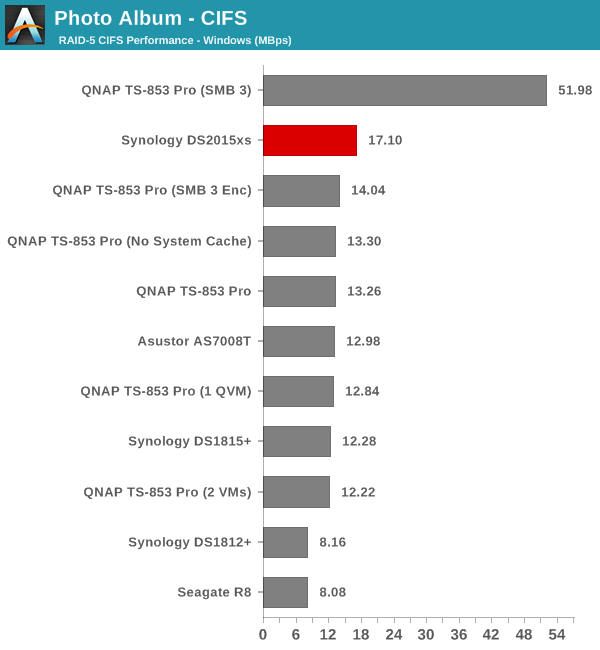

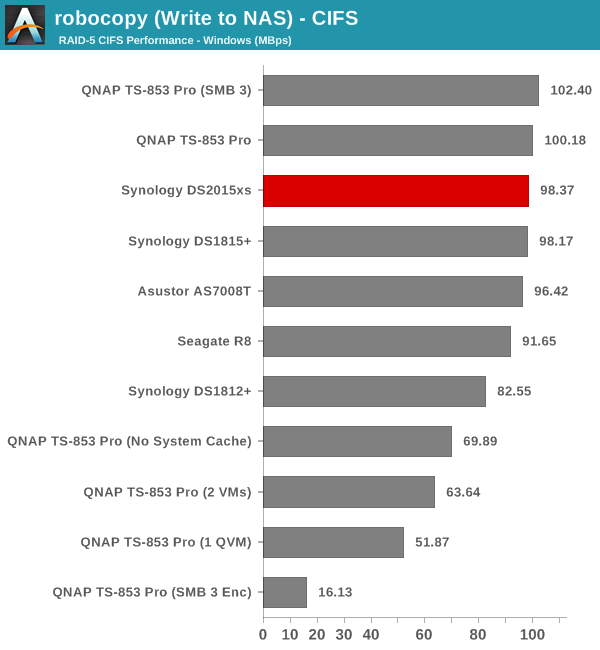

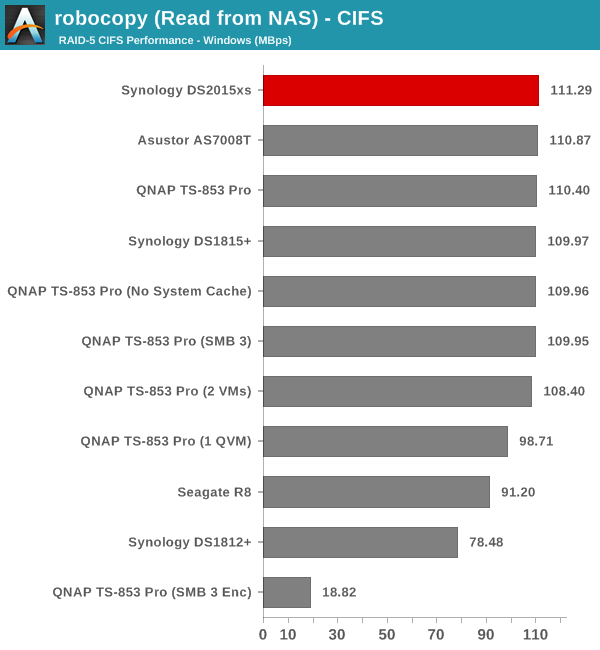

Single Client Performance - CIFS & iSCSI on Windows

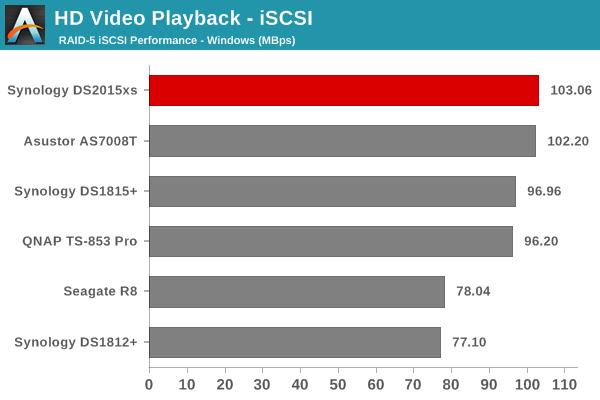

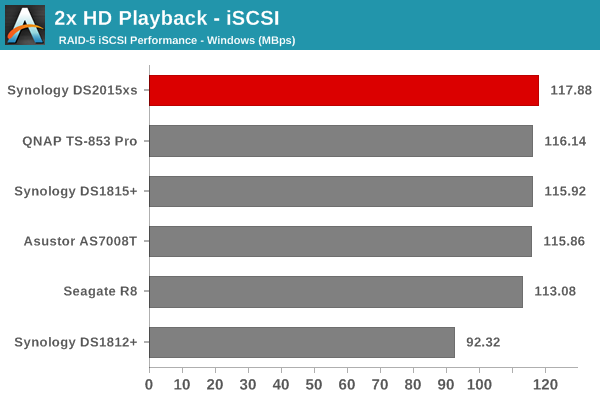

The single-client CIFS and iSCSI performance of the Synology DS2015xs was evaluated on Windows using Intel NASPT and our standard robocopy benchmark, run from one of the virtual machines in our NAS testbed. All client-side data for the robocopy benchmark was placed on a RAM disk (created using OSFMount) so that the client's own storage could not bottleneck the results. All the shares / iSCSI LUNs were created in a RAID-5 volume. Note that only the Synology DS2015xs was tested with SSDs; the other NAS units in each of the graphs below were benchmarked with hard drives.

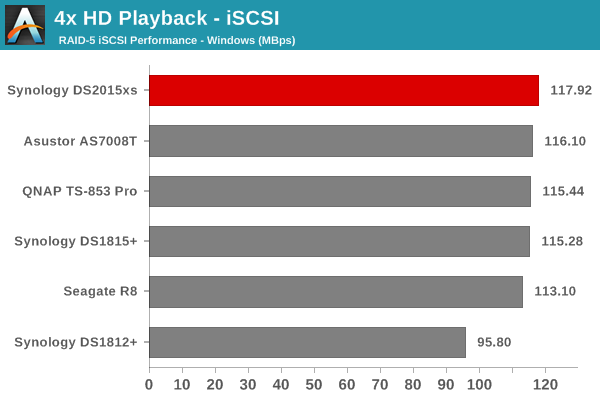

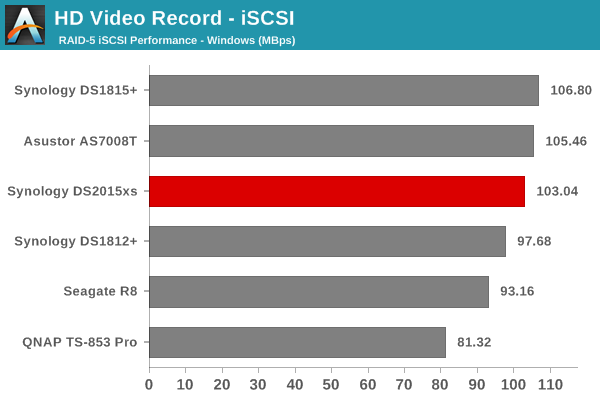

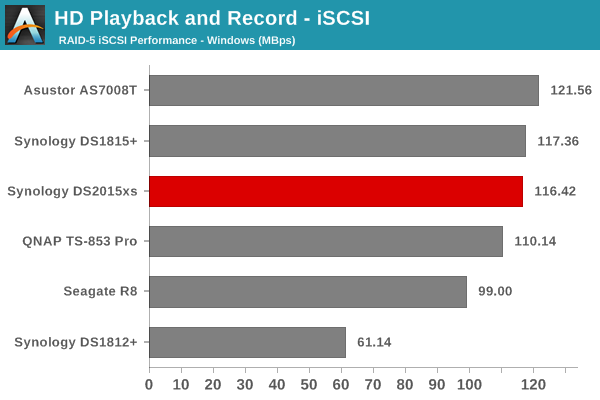

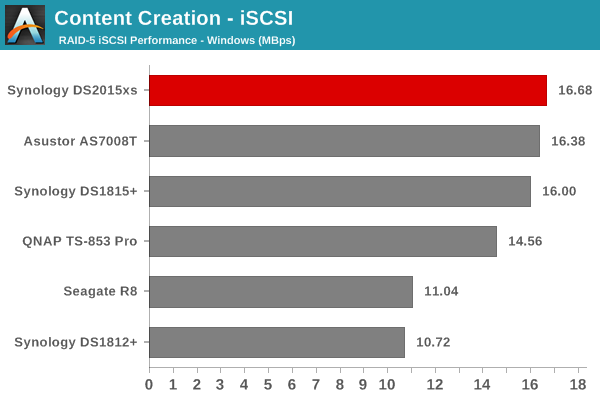

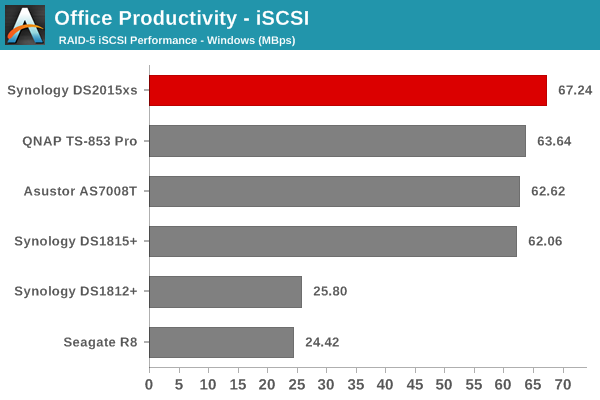

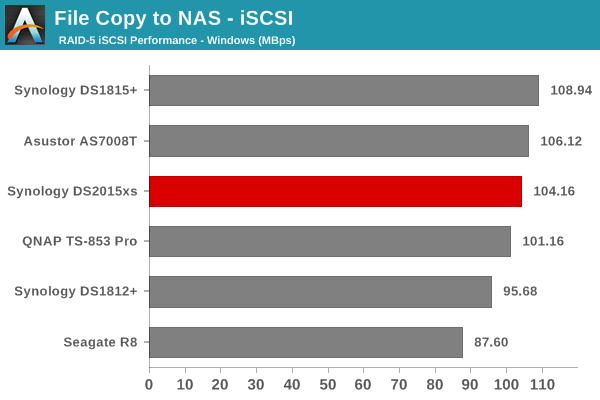

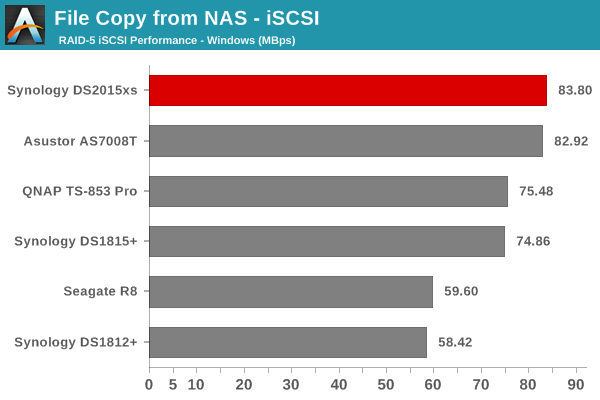

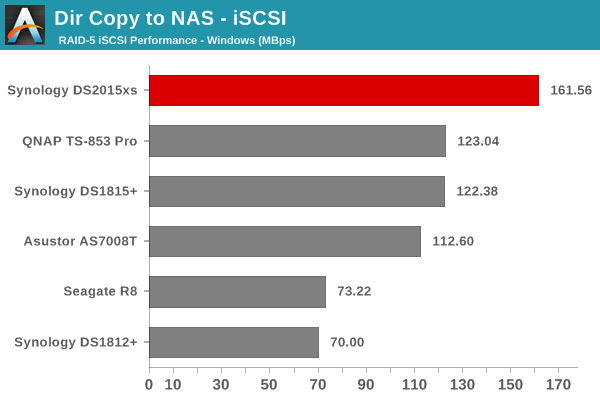

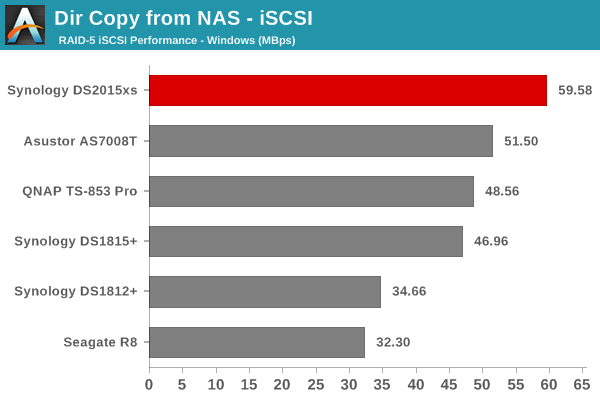

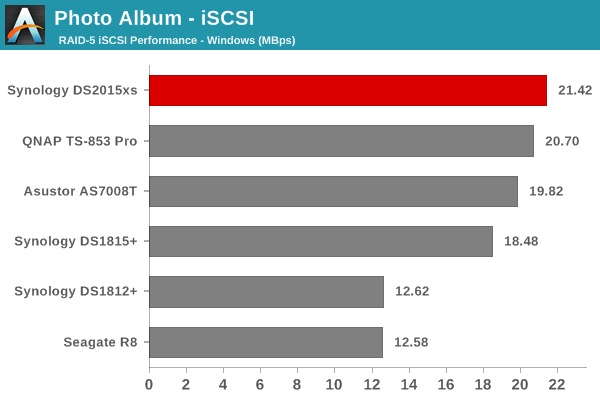

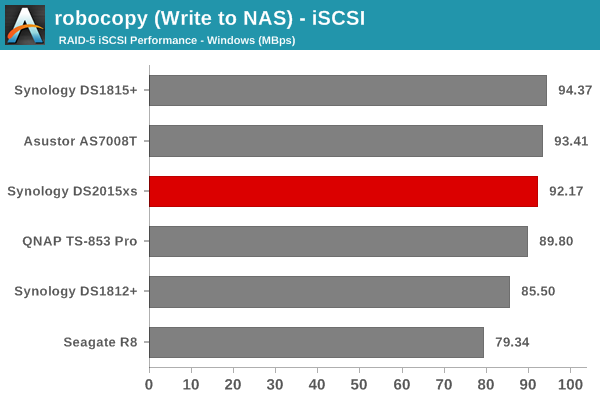

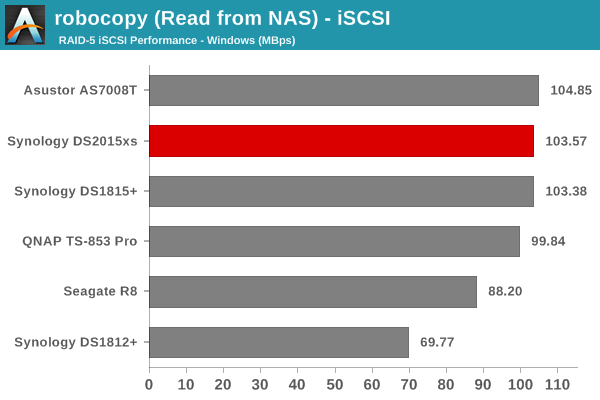

We created a 250 GB iSCSI LUN / target and mapped it to a Windows VM in our testbed. The same NASPT benchmarks were run and the results are presented below. The observations made in the CIFS subsection above hold true here too.

The DS2015xs loses out to the Asustor AS7008T, and sometimes even the DS1815+, in certain benchmarks. This shows that for access from a single GbE-equipped client, x86-based units can perform better despite being handicapped by hard drives.

49 Comments

chrysrobyn - Friday, February 27, 2015 - link

Is there one of these COTS boxes that runs any flavor of ZFS?

SirGCal - Friday, February 27, 2015 - link

They run Syn's own format... But I still don't understand why one would use only RAID 5 on an 8 drive setup. To me the point is all about data protection on site (most secure going off site), but that still screams for RAID 6 or RAIDZ2, at least for 8 drive configurations. And using SSDs for performance is fine, but if that was the requirement, there are M.2 drives out now doing 2M/sec transfers... These fall to storage, where I want performance with 4, 6, 8 TB drives in double parity protection formats.

Kevin G - Friday, February 27, 2015 - link

I think you mean 2 GB/s transfers. Though the M.2 cards capable of doing so are currently OEM only with retail availability set for around May.

Though I'll second your ideas about RAID6 or RAIDZ2: rebuild times can take days and that is a significant amount of time to be running without any redundancy with so many drives.

SirGCal - Friday, February 27, 2015 - link

Yes I did mean 2G, thanks for the corrections. It was early.

JKJK - Monday, March 2, 2015 - link

My Areca 1882 ix-16 raid controller uses ~12 hours to rebuild a 15x4TB raid with WD RE4 drives. I'm quite disappointed with the performance of most "prouser" nas boxes. Even enterprise qnaps can't compete with a decent areca controller.

It's time someone built some real NAS boxes, not this crap we're seeing today.
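For a sense of scale, the rebuild figures in this thread can be turned into sustained write rates. The sketch below is only back-of-the-envelope arithmetic: it assumes the rebuild is bounded by filling one replacement drive end to end, that drive capacity is counted in decimal terabytes, and that the array is otherwise idle. The function names and the 25 MB/s example rate are illustrative, not measurements from the review.

```python
def rebuild_rate_mb_s(drive_tb: float, hours: float) -> float:
    """Average write rate (MB/s) needed to fill one replacement drive in `hours`."""
    drive_bytes = drive_tb * 1e12  # decimal terabytes, as drive vendors count them
    return drive_bytes / (hours * 3600) / 1e6


def rebuild_hours(drive_tb: float, rate_mb_s: float) -> float:
    """Hours needed to fill one replacement drive at a sustained `rate_mb_s`."""
    return drive_tb * 1e12 / (rate_mb_s * 1e6) / 3600


# A 4 TB drive rebuilt in ~12 hours implies roughly 93 MB/s sustained.
print(f"{rebuild_rate_mb_s(4, 12):.1f} MB/s")

# Hypothetical slow rebuild at 25 MB/s: close to two days for the same drive.
print(f"{rebuild_hours(4, 25):.1f} hours")
```

Under these assumptions, a multi-day rebuild corresponds to a sustained rate of only a few tens of MB/s per drive.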

JKJK - Monday, March 2, 2015 - link

Forgot to mention it's a Raid 6

vol7ron - Friday, February 27, 2015 - link

From what I've read (not what I've seen), I can confirm that RAID-6 is the best option for large drives these days.

If I recall correctly, during a rebuild after a drive failure (new drive added) there have been reports of bad reads from another "good" drive. This means that the parity drive is not deep enough to recover the lost data. Adding more redundancy will permit you to have more failures and recover when an unexpected one appears.

I think the finding was also that as drives increase in size (more terabytes), the chance of errors and bad sectors on "good" drives increases significantly. So even if a drive hasn't failed, its data is no longer captured and the benefit of the redundancy is lost.

Lesson learned: increase the parity depth and replace drives when experiencing bad sectors/reads, not just when drives "fail".
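The rebuild risk described above can be roughly quantified. The sketch below assumes a simple model in which every bit read fails independently at the drive's specified unrecoverable read error (URE) rate. The 1e-14 and 1e-15 per-bit rates are commonly quoted datasheet figures used here as assumptions, not values from the review, and real drives often do better than their spec. The function names are illustrative.

```python
import math


def p_unrecoverable_read(bytes_read: float, ure_per_bit: float) -> float:
    """Probability of at least one URE while reading `bytes_read` bytes,
    assuming independent per-bit errors at rate `ure_per_bit`."""
    bits = bytes_read * 8
    # 1 - (1 - p)^bits, written with log1p/expm1 to stay accurate for tiny p.
    return -math.expm1(bits * math.log1p(-ure_per_bit))


def raid5_rebuild_bytes(n_drives: int, drive_tb: float) -> float:
    """A RAID 5 rebuild has to read every surviving drive in full."""
    return (n_drives - 1) * drive_tb * 1e12


# Hypothetical 8 x 4 TB RAID 5 array with one failed drive.
to_read = raid5_rebuild_bytes(8, 4)
for rate in (1e-14, 1e-15):
    p = p_unrecoverable_read(to_read, rate)
    print(f"URE rate {rate:g}/bit: P(read error during rebuild) = {p:.2f}")
```

Under this model the chance of hitting a bad read grows with the amount of data that must be read, which is why larger drives and wider arrays make single parity less comfortable. With double parity, a single bad read during a one-drive rebuild can still be reconstructed.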

Romulous - Sunday, March 1, 2015 - link

Another benefit of RAID 6, besides 2 drives being able to die, is the prevention of bit rot. In RAID 5, if I have a corrupt block and one block of parity data, it won't know which one is correct. However, since RAID 6 has 2 parity blocks for the same data block, it's got a better chance of figuring it out.

802.11at - Friday, February 27, 2015 - link

RAID5 is evil. RAID10 is where it's at. ;-)

seanleeforever - Friday, February 27, 2015 - link

802.11at: cannot tell whether you are serious or not, but RAID 10 can survive a single disk failure, while RAID 6 can survive a failure of two member disks. Personally I would NEVER use RAID 10 because your chance of losing data is much greater than any RAID that doesn't involve 0 (RAID 0 was an afterthought, it was never intended, thus called 0).

RAID 6 or RAID DP are the only ones used in datacenter for EMC or Netapp.
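The RAID 10 versus RAID 6 comparison in this thread can be made concrete by enumerating which combinations of failed drives each layout survives. This is a minimal sketch under stated assumptions: RAID 10 is modeled as two-way mirror pairs (drives 0+1, 2+3, and so on), every combination of failed drives is treated as equally likely, and rebuild windows and read errors are ignored. The 8-drive array and the function names are illustrative.

```python
from itertools import combinations


def raid10_survives(failed: set, n_drives: int) -> bool:
    """Data is lost only if both drives of some mirror pair have failed."""
    return all(not ({2 * i, 2 * i + 1} <= failed) for i in range(n_drives // 2))


def raid6_survives(failed: set, n_drives: int) -> bool:
    """Dual parity tolerates any two failed drives, but not a third."""
    return len(failed) <= 2


def survival_fraction(survives, n_drives: int, k: int) -> float:
    """Fraction of all k-drive failure combinations the layout survives."""
    combos = list(combinations(range(n_drives), k))
    return sum(survives(set(c), n_drives) for c in combos) / len(combos)


for k in (1, 2, 3):
    r10 = survival_fraction(raid10_survives, 8, k)
    r6 = survival_fraction(raid6_survives, 8, k)
    print(f"{k} failed drive(s): RAID 10 survives {r10:.0%}, RAID 6 survives {r6:.0%}")
```

In this model RAID 10 is only guaranteed to survive one failure, though it survives most two-drive combinations and some three-drive ones; RAID 6 survives every two-drive combination and no three-drive one.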